There’s something very strange going on at Anthropic. Day after day I see evidence of what I used to study in the Cold War: closed systems of cooked intelligence.

When people or companies intentionally make false claims about the work they’re doing or the products they’re selling, we call it fraud. What is it when one overlooks LLM mistakes?

An integrity breach I just stumbled upon might be the most egregious so far.

Anthropic published an economics paper on April 22, 2026 called “What 81,000 people told us about the economics of AI.” Right away my suspicion went up, because it’s framed as what people “told us” rather than what was said, or what is known. Storytelling. Framing a report like a “once upon a time” yarn is a disinformation tell.

Lead author Maxim Massenkoff, coauthor Saffron Huang. Who? Anthropic staff. Second tell. I’m not seeing independence, yet this is supposedly about what people say about Anthropic. “Everyone says they love us”. Uh-huh.

The findings are positioned oddly as well. That’s a third tell and I’m barely getting started. What is going on here? The vendor wants the public to believe something, which is usually called marketing. Here is what it wants believed: Users feel empowered. Productivity gains are real. Job-displacement anxiety correlates with usage intensity in exactly the way Anthropic’s own task-exposure measure predicts.

The architecture of this economics paper is shockingly awful. Fourth tell. At this point, might as well just say we’re up to our eyeballs in ethics issues.

Respondents are self-selected Claude.ai personal-account users who chose to answer a survey. The survey runs inside Claude.ai through an in-product tool called Anthropic Interviewer, built by Grace Yun, AJ Alt, and Thomas Millar. Occupation labels come from Claude inferring from free-form text. Career stage comes from Claude inferring from free-form text. The productivity rating is Claude reading the respondent’s own words and scoring them on a 1-to-7 scale. Job-threat concern is Claude reading the same words and coding them as present or absent.

The analysis? Internal.

The review? Internal.

A subject group talking to the product, about the product, scored by the product, reported by the product.

What is it about closed, controlled, cold environments that keep showing up at Anthropic like this? Would it have bothered them so much to open it up?

Missing Reviewers

The same lead author published a different paper the month before, on March 5, 2026: “Labor market impacts of AI: A new measure and early evidence,” with coauthor Peter McCrory. Notably, that paper thanked Martha Gimbel at the Yale Budget Lab, Anders Humlum at Chicago Booth, Evan Rose, and Nathan Wilmers at MIT Sloan for feedback on earlier versions. That reads normal to me.

Four external labor scholars working in exactly the relevant field. Credentialed, independent, in the field.

The April 22 piece drops everyone. The external-feedback slot goes to Miriam Chaum, Ankur Rathi, Santi Ruiz, and David Saunders.

Four Anthropic insiders. David Saunders already appears in the March labor-market-impacts paper’s Anthropic-internal acknowledgments list. Miriam Chaum and Ankur Rathi appear in the internal list of the March Economic Index “Learning curves” report. So three of the four were thanked as internal staff one month earlier. The fourth, Santi Ruiz, was still a journalist then. Roughly three weeks before publication he announced, in his own LinkedIn post, that he was joining Anthropic’s editorial team to lead its economics and policy editorial work and to work with the new Anthropic Institute. His first acknowledgment in an Anthropic paper is this one. In April all four got moved to a separate “Additionally, we thank” paragraph that visually mirrors an external-review slot. Same people, same company, different paragraph. This is deliberate staging.

Economists? Zero.

Independent field expertise? Zero.

Same team. Same topic. Same lead author. One month later, the external review present in March is mysteriously absent in April.

Why?

Let me tell you. Humlum’s own published finding contradicts the April narrative. Anders Humlum (March acknowledgments, dropped from April) published in February 2026 with Emilie Vestergaard: a Denmark-wide linked employer-employee study, 25,000 workers, finding “no significant changes in earnings or hours worked, with confidence intervals ruling out even small effects.”

The actual labor economist in the March thank-you list produced the exact finding the April paper needed to suppress. One month later he was off the list, and Anthropic was contradicting his findings with a fairy tale of its own cooking.

That shows a pretty clear and concrete motive.

Tilt! Tilt!

This game is tilted. Stop the pinballs. Every decision in the study tilts one direction. The entire thing passes only favorable findings. Have a look for yourself.

| Stage | Choice | Tilt |
| --- | --- | --- |
| Recruit | Claude.ai personal-account users who opted in | Satisfied users overrepresented |
| Instrument | Anthropic Interviewer, inside Claude.ai | Subject evaluates the product while using it |
| Occupation label | 61% missing, 28% Claude guessed, 11% explicit | Figure 1 rests on Claude’s guesses |
| Career stage | About half the sample gets no career-stage label; the rest are Claude’s inference. | Half the N is manufactured |
| Productivity rating | Claude scores free text on 1-to-7 | Interviewer grading its own interview |
| Scale calibration | Rebuilt after original Likert yielded almost entirely 6s and 7s | Ceiling effect hidden inside a wider range |
| Scale anchor | A 2x speedup scores 5 of 7, “substantially more productive” | Positive range starts at a doubling |
| Productivity denominator | 42 percent dropped for “no clear indication” | Reported mean conditional on positive disclosure |
| Beneficiary finding | About 25 percent of the sample named a recipient at all | “Benefits flow to self” headline is roughly 18 percent of total N |
| Review | Internal only | Classifier, scale, pipeline all unchecked |

Each step, taken on its own, is maybe something to discuss, which makes it easy to miss the whole. The caveats section lists most of them. A negative finding would have to clear self-selection, then Claude’s inference, then a recalibrated scale, then internal review with no outside economist in the room. That seems like a rather convenient filter by construction.
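For a sense of scale, here is a back-of-the-envelope sketch in Python that turns the percentages quoted in the table into rough head counts and conditional shares. It assumes N is the rounded 81,000 from the paper’s title, not an exact count, and it treats the “about” and “roughly” figures as if they were exact. The variable names are mine, not the paper’s.

```python
# Rough denominators behind the table's percentages.
# ASSUMPTION: N = 81,000 is the rounded figure from the paper's title.
# ASSUMPTION: approximate shares ("about 25 percent") are treated as exact.
N = 81_000


def count(share: float, n: int = N) -> int:
    """Approximate head count for a given share of the sample."""
    return round(share * n)


# Occupation label: 61% missing, 28% inferred by Claude, 11% explicit.
occupation = {"missing": 0.61, "claude_inferred": 0.28, "explicit": 0.11}
labeled = occupation["claude_inferred"] + occupation["explicit"]
# Of the respondents who have any occupation label, how many are guesses?
inferred_share_of_labeled = occupation["claude_inferred"] / labeled

# Productivity rating: 42% dropped for "no clear indication".
rated_share = 1 - 0.42
rated_count = count(rated_share)

# Beneficiary finding: ~25% named a recipient; "self" is ~18% of total N.
named_share = 0.25
self_share_of_total = 0.18
# The share among those who named a recipient, implied by the two figures.
self_share_of_named = self_share_of_total / named_share

if __name__ == "__main__":
    for label, share in occupation.items():
        print(f"occupation {label}: ~{count(share):,} respondents")
    print(f"Claude-inferred share of labeled: {inferred_share_of_labeled:.0%}")
    print(f"respondents with a productivity score: ~{rated_count:,}")
    print(f"'self' share among those naming a recipient: {self_share_of_named:.0%}")
```

Under those assumptions, roughly seven in ten of the occupation labels that exist are Claude’s guesses, and the productivity mean describes something like 47,000 respondents instead of 81,000. The point is how far each headline denominator sits from the N in the title.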

Sudden Scale Replacement

Footnote 3 admits the productivity scale was rebuilt. The original Likert “yielded almost entirely 6s and 7s.” The authors then reparameterized the 1-to-7 range so that 2 means “no change,” 5 means “substantially more productive,” and 7 means “transformatively more productive.” Under the new anchor, a two-hour task compressed to one hour earns a 5. A doubling of throughput scores the middle of the positive range, with two further steps of intensity above it before the ceiling.

Call it a rescaling. The ceiling effect stays. It is hidden inside a wider scoring interval.

Punch the Confusion Button

The deeper error here is actually epistemological. The lead author’s background includes creating a classroom confusion button: students press it during lecture when they are confused, the instructor reads the heat map and adjusts pacing.

That is a shockingly bad design, at least fifty years out of date.

A button obviously captures a single instant, a press. The cognition it claims to measure is instead a thing of trajectory. Confusion at second N that resolves at N plus three through the next sentence registers incorrectly as a press. Confusion still beneath the student’s awareness, the kind that surfaces later on the problem set or the midterm, stays invisible to the instrument. Mid-processing uncertainty, the state where a claim sits in working memory and the student is still checking it against prior knowledge, gets forced to premature resolution at the button.

Bjork’s desirable-difficulties literature has argued for decades that exactly this productive confusion is where learning happens. I’ll say it again, the state of confusion is the learning. The button punishes it instead. Nisbett and Wilson settled the broader problem in 1977. Subjects have limited introspective access to their own cognitive processes. Self-report instruments designed around button-presses and scalar ratings produce artifacts of the instrument, falsely standing in for the cognition they claim to measure.

It’s basically the foundation of disinformation, a lie looking for a greater story to tell. Counting how many times a confusion button was pressed in a period pretends to be about learning, but it’s actually about the obstruction of it. Would students have been less confused had they waited to press the button? Would the rate of confusion go down the less a button is pressed?

The 81,000-user study carries the same error into labor economics. Scoring a productivity gain on a 1-to-7 scale flattens a cognitive trajectory to a scalar, captured at a moment when the subject is talking to the product under evaluation, scored by the product itself.

Confusion-button logic, scaled to the labor market, reports productivity while obstructing it.

Better instruments exist. Post-task think-aloud protocols. Delayed retrieval tests. Longitudinal productivity panels anchored in objective task output. Slower, harder, more expensive, resistant to the headline finding. They don’t seem like the sort of thing Anthropic would allow.

Integrity Breach

The stated commitments to rigor, transparency, external feedback, and caveats are decoupled from this paper.

Right?

I mean I could understand a product manager writing a product marketing paper that called the product the best shit in town.

But this is supposedly a trained academic, an economist? What? With a PhD?

A closed pipeline. A classifier scoring its own classifier. A scale recalibrated to spread a ceiling effect across a wider axis. External reviewers present in one paper and gone from the next. The footnotes acknowledge each problem in turn. The headline numbers treat the footnotes as cosmetic.

A Berkeley PhD with a Steven Pinker coauthorship knows what a self-selection bias is. A team with access to four outside labor scholars one month earlier knows what external review looks like. The decisions that shaped the April 22 piece read as deliberate. The work was shipped with full awareness of what the design would produce.

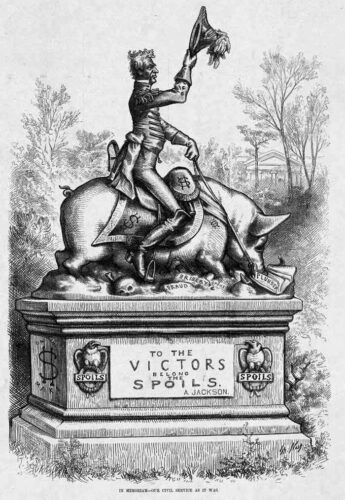

Anthropic owns the subject pool, the instrument, the classifier, the scale, the analysis, the review, the distribution channel, and the language in which the finding gets repeated to policymakers and journalists.

The vendor is the source, the scribe, and the arbiter.

This is some seriously disappointing writing.

The paper does not report what 81,000 people told Anthropic about the economics of AI. It reports what Anthropic’s product told Anthropic about itself, at a moment when the vendor needed that story to pump value.