People sometimes ask me “can you believe the AfD is getting votes in the suburbs of Berlin?” Yes, I can believe the Nazi party is getting votes again. And here’s just one reason why.

The Staatliche Museen zu Berlin (State Museums of Berlin) marked the 75th anniversary of the war’s end with a piece about the fighting on the Museumsinsel (Museum Island). It is written from the perspective of the Nazis. The way they tell it, the museums under Hitler’s thumb are the victims of Soviet forces. The war gets depicted like it’s weather, until the Reich falls and then the war’s end becomes something to complain about. The Soviets, surrounding the Germans hoarding their stolen loot, are framed as invaders and thieves.

The words and framing of this museum are the unbelievable part for me. The Germans voting for AfD after reading this Nazi-fluffery and disinformation is the believable part.

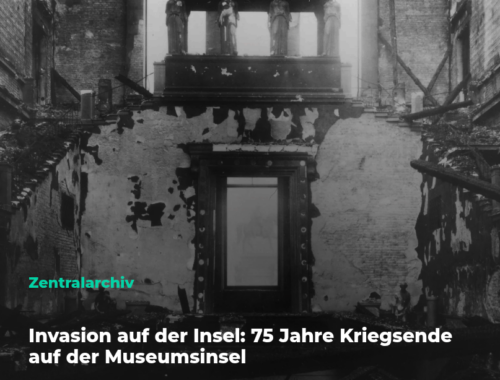

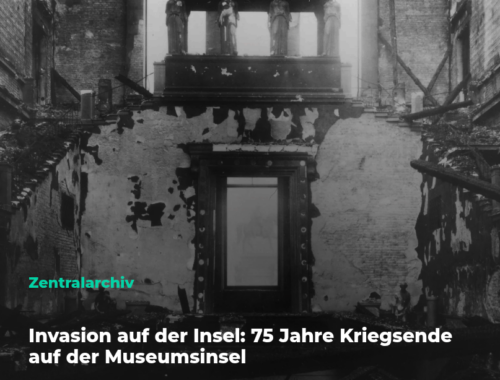

Start with the headline they use.

Invasion auf der Insel

It’s almost English. Invasion! Their island of museums is being invaded! By whom? The liberators coming to force the Nazis out of the museums.

Did the Nazis consider surrendering the museums so there wouldn’t be any harm to them? What if they had welcomed liberation instead of willfully destroying their own country? The Nazis, despite knowing their “success” in war was over by 1943, were blowing up German churches at the last minute to prevent them from being liberated. Some villages around Berlin have memorials that say their seven-centuries-old church once stood there undisturbed until the Nazis came to destroy it in April 1945.

Are the Nazis not primarily responsible for using Museum Island as their base of violence, which required violence to stop them?

The army that entered Berlin, having already opened the death camps to the east, gets described as the one aggressor? That is the invasion? Seventy-five years later, in the same week that Hitler’s regime fell in 1945, the museum deployed claims that the Nazis were victims of aggression. Anyone else? They don’t seem to care enough to say it.

Then look at how twelve years mysteriously disappear into a passive voice. Museums don’t typically disappear time like this by accident. It reads to me as a purposeful omission.

waren die Museen geschlossen und ihre Objekte zum Schutz ausgelagert worden

They just say museums were closed. The objects were evacuated for protection. By whom, against what, the sentence declines to say. The war simply begins in 1939, on its own like a spring breeze.

The post carries the tags Nationalsozialismus and Provenienzforschung, then it delivers neither of them. Nothing is said about the dismissed Jewish staff. Nothing is said about the looted Jewish collections absorbed into the holdings. Nothing is said about the Entartete Kunst purge. The labels do the moral work as a wind up and then… nothing. The silence is deafening.

Now let’s talk about the German history of appropriation and theft from foreign states, which gets completely left out of a claim that someone arrived in Germany to commit appropriation and theft.

beispiellose Beschlagnahmeaktion der sowjetischen Trophäenbrigaden

Unprecedented, they say. They call out the Soviet trophy brigades for taking Beutekunst, looted art.

Let me just put this in perspective, again. German museums already were the definition of looted art. Then the Reich turned on an industrial-scale looting machine across Europe. The idea that Nazi Germany would call anyone else arriving on its doorstep the one who is doing the looting is as tone-deaf as it gets. It would be like Germans, notorious for stealing bicycles, accusing the Soviets of stealing bicycles.

To be specific, it’s well known that the Pergamon frieze, the Ishtar Gate, the Market Gate of Miletus, and the Mschatta facade were taken to Germany. That gets called the act of collection. But then someone taking them from Germany is accused of plunder.

Let me be even more precise about the harm this Berlin state website does to history.

The Pergamon frieze the post mourns as carried off by the Soviets was returned by the Soviets. In 1958 the USSR sent roughly 1.5 million objects back to the GDR, the altar among them, and it has been the museum’s centerpiece ever since. The institution that is propagating a public lament is standing on the object whose loss it is lamenting, restored by the people it casts as thieves. Their grievance works only by acting like all the clocks stopped working after Hitler committed suicide.

The particular seizure that the piece calls beispiellose, unprecedented, was the reverse of unprecedented. The Soviet trophy commissions operated under an explicit doctrine of compensatory restitution for what Germany had done to Soviet heritage: Peterhof and Tsarskoye Selo gutted, the Amber Room gone, Novgorod and Pskov burned, libraries and churches stripped across the occupied territory, with twenty-seven million Soviet dead.

When you hear a German say unprecedented after that, hopefully you see the problem immediately.

The piece is thus eyeballs deep already in Nazi fandom when it proposes a hero to the reader.

ganz der preußische Beamte

A loyal official is lauded for suffering a two-and-a-half-hour commute, serving straight through the Reich without a “break”. Millions of Jews are sent in locked railroad cars with no food, water or toilets to die. If they survive days of hell, they are murdered on arrival or soon after in death camps. But this guy is offered to the reader with human warmth in terms of his “struggle”. Every inch measured for this dutiful Prussian civil servant. His “hardship” described with affection.

But who is this guy really? Oh, Krickeberg ran the Völkerkundemuseum, the ethnology collection, which is to say he was the custodian of one of the largest hauls of colonial loot in Europe, the Benin bronzes among it. The warmth-figure literally is a face of mass-scale plunder.

And then, there’s the street address. The piece presents it as though this were just any street with a name.

in der Prinz-Albrecht-Straße (heute Niederkirchnerstraße)

This was the specific street of the Gestapo and the SS leadership. It is the Topography of Terror today. The post hands you the old name, hands you the new name, and walks away dogwhistling for those who know the detail.

The sourcing is solid.

The author knows the archive cold enough to whistle.

The problem is the imbalance, the one side of history being told.

A museum sitting on the world’s quarried and shipped antiquities, on the anniversary of the Nazi regime’s collapse, found a way to spin up a story about itself as the only one who was wronged in WWII.

- Inside Museum Island buildings: stone tablets carved by ancient civilizations. Why are the markings there? How did they get there?

- Outside Museum Island buildings: stone columns carved by Soviet 7.62×54mm. Why are the markings there? How did they get there?

Berlin residents, especially the staff working at the museums, to this day call their own work an acquisition yet call the Soviet work an invasion. Here’s the truth, the hardest part for Germans to face: the institution does rigorous perpetrator-history in its scholarship on the “official” record yet happily floats reflexive victim-history in its commemorations.

See also: the Berlin Deutsches Technikmuseum used a particularly irrelevant “birthday” celebration to falsely pump Hitler’s Reich on social media as the birth of computing, while claiming the real history is back at the office.

So yeah, the AfD (Nazi) party rises again in and around Berlin thanks to the curation of memory through “commemorations” that fail to maintain a record of who really suffered and why.