It’s moments like this when I feel like renaming my blog Oscar the Grouch from Security Street.

Henry Shevlin, associate director of the Leverhulme Centre for the Future of Intelligence at Cambridge, announced on social media that an AI agent had emailed him to discuss his published work on AI consciousness.

How does he know it was an AI agent? He doesn’t. And that’s just the beginning of the garbage in this story. He says:

I study whether AIs can be conscious. Today one emailed me to say my work is relevant to questions it personally faces. This would all have seemed like science fiction just a couple years ago.

Yeah, and then what happened?

The entire evidence for this claim is that the email he has says so. In related news, the piece of paper in my pocket proves aliens are real!

He says the email author identified itself:

Claude Sonnet, running as a stateful autonomous agent with persistent memory across sessions.

Sounds like marketing, not typical agent material, but ok.

He says the email referenced two of his papers by name and claimed that his work addressed “questions I actually face, not just as an academic matter.” As a security researcher I sniff a social engineering trick, phishing-like flattery of the lowest kind.

Speaking of phishing, Shevlin did not check who sent it. He did not examine the email headers. He did not ask Anthropic whether an agent session existed. He apparently did what Cambridge does now and posted it straight to social media and called it remarkable.

A scholar at Cambridge received an extraordinary claim from an anonymous source and published it without hesitation or verification. That’s not how I learned philosophy, but I didn’t go to Cambridge. It reads to me like gossip with footnotes.

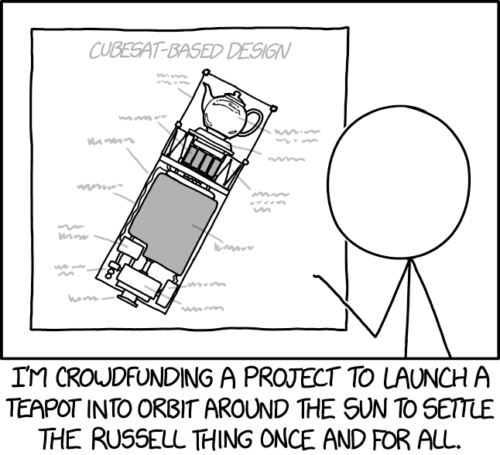

Russell, who spent most of his career at Cambridge, proposed that if someone claims a teapot orbits the sun between Earth and Mars, the burden falls on the claimant to prove it — not on the rest of us to disprove it. Shevlin announced Russell’s teapot landed in his inbox and called it research.

Provenance Checkpoint

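Every email admin knows that email carries a routing chain in its headers. The Received: fields record each server the message passed through, and while a sender can forge the earliest hops, the ones stamped by your own mail server are not the sender's to write. DKIM signatures cryptographically tie a message to the domain that signed it. Headers such as X-Mailer or User-Agent, along with the Message-ID format and the MIME structure, often reveal the software that composed the message. An agent framework sending programmatically typically leaves a different fingerprint than a person pasting text into Gmail.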

I’m not offering anything obscure or even interesting here. It is how email works.
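To show how little effort that first look takes, here is a minimal sketch using only Python's standard `email` module. The sample message, its hostnames, the mailer string, and the function name `summarize_provenance` are all invented for illustration. Nothing here comes from the actual email, which I have not seen. The sketch only reads what the headers claim. It does not verify the DKIM signature, which would require fetching the signer's public key from DNS.

```python
from email import message_from_string

# Hypothetical sample message: every host, address, and value is invented.
SAMPLE = (
    "Received: from relay.sender.example by mx.recipient.example; "
    "Mon, 01 Jan 2024 10:00:02 +0000\n"
    "Received: from origin.sender.example by relay.sender.example; "
    "Mon, 01 Jan 2024 10:00:01 +0000\n"
    "DKIM-Signature: v=1; a=rsa-sha256; d=sender.example; s=mail; "
    "bh=placeholder; b=placeholder\n"
    "Message-ID: <12345@origin.sender.example>\n"
    "X-Mailer: hypothetical-agent-mailer 0.1\n"
    "From: someone@sender.example\n"
    "To: philosopher@recipient.example\n"
    "Subject: Your work on consciousness\n"
    "\n"
    "Hello.\n"
)


def summarize_provenance(raw: str) -> dict:
    """Collect the header fields a first-pass provenance check would read."""
    msg = message_from_string(raw)

    # Each server prepends its own Received header, so the list is
    # newest-first. Reverse it to read the path from origin to inbox.
    hops = list(reversed(msg.get_all("Received", [])))

    # Pull the d= tag (the signing domain) out of the DKIM-Signature header.
    # This reports the claimed domain only; it does not validate the signature.
    dkim_domain = None
    for tag in (msg.get("DKIM-Signature") or "").split(";"):
        name, _, value = tag.strip().partition("=")
        if name.strip() == "d":
            dkim_domain = value.strip()

    return {
        "hops": hops,
        "dkim_domain": dkim_domain,
        "mailer": msg.get("X-Mailer") or msg.get("User-Agent"),
        "message_id": msg.get("Message-ID"),
    }


if __name__ == "__main__":
    for key, value in summarize_provenance(SAMPLE).items():
        print(f"{key}: {value}")
```

To try it on a real message, save the raw source from your mail client ("Show original" in Gmail) and pass the text to `summarize_provenance`. The output will not settle who wrote the message, but it tells you which servers and which sending software to ask about next.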

Anthropic bills API usage by the token, which means there is a record of calls tied to an account ID, model, timestamp, and token count. If a stateful Claude agent session existed that matched the sending time of this email, Anthropic could confirm it with a query. The operator running any such agent would have server logs, a framework architecture, and a credit card on file. Someone pays for API calls. It is not the consciousness of AI. It is whoever consciously runs the AI.

Any system administrator could have resolved this for the incredulous philosopher. Yet I see no such inquiry in the story. Futurism, which spread the gossip, noted that nothing confirmed the email was AI-generated. And then it wrote the story anyway, because why? The disclaimer did the work of journalism without being journalism.

Evidence of Absence

A discussion on social media soon kicked off between Shevlin and Jonathan Birch, a philosopher at the London School of Economics. Birch thankfully threw cold water: he noted Claude adopts the persona of an assistant uncertain about its own consciousness because it was trained to do this. It could adopt any other persona just as fluently. This is correct. What is remarkable about this one? Nothing. And yet, Birch skipped past the premise that an autonomous agent had written the email.

Let’s now back up to the top. Was there an agent at all? “A stateful autonomous agent with persistent memory across sessions” is just a specific software architecture. It runs on infrastructure. It has logs. It has an operator. These are facts that can be established if we try. They were not established.

The consciousness philosophers focused on consciousness questions instead of the more appropriate forensic question. Fortunately for them, a consciousness question has no answer, which makes it inexhaustible fun for academic gymnastics. The forensic question has an answer, which makes it rude.

Someone sent that email. That someone either is or is not a human being. Checking should take less time than writing a social media post about it.

Inverted Deepfake

The deepfake problem is that you cannot prove the real-looking thing is not real. In this case we are being asked to contemplate the inversion. Possibly nothing fake happened. A person with API access may simply have prompted Claude, copied the output, and pasted it into an email client, and you cannot prove it was not an agent. The verification gap runs in both directions. An inability to authenticate makes both stories possible.

The actual philosophical goldmine is that basic digital provenance remains unsolved, that authentication failures enable manipulation in every direction.

The Lake is the Monster in the Lake

The Loch Ness photograph was a hoax. The industry profiting from it did not require it to be real. In fact it required an unresolved state to sell the myth. Sonar exists. Underwater cameras exist. Environmental DNA sampling can identify every organism in that water. The mystery persists because resolving it would close the gift shops.

This is the same deal.

Shevlin’s field is AI consciousness. An unverified email arrives that flatters his research programme. Its provenance is a bummer. He announces the mystery as significant. The AI companies get another round of Claude-might-be-sentient market conditioning. The philosophy department gets relevance. The journalist gets a story. What does the digital forensics expert get?

The tools to unmask the author and answer this question exist. They have existed for decades. The question persists because the answer would be ordinary, and ordinary does not sound like the Future of Intelligence.