The New York Times published a long piece by Clive Thompson on how AI is transforming programming. It’s like hearing someone drag their nails on a philosophy chalkboard in an attempt to erase centuries of human progress.

Developers at Google, Amazon, Microsoft, and startups describe a world where they barely need to write code anymore. They prompt a servile machine. They review from a distance, as if pleased as punch to be sipping on the veranda while all the work is done by others. They describe what they want in English and turn their cotton-pickin’ agents loose because of a newfangled device that automates “quality” tests for them. One developer reports being 10x to 100x more productive than if they did the work themselves. Another calls it liberation. That’s the language of the Civil War, for those who have studied how Caty Greene’s invention of an automation machine meant to end slavery (the cotton ’gin) was stolen from her and inverted into a tool for expanding and preserving slavery instead. But I’m getting ahead of myself.

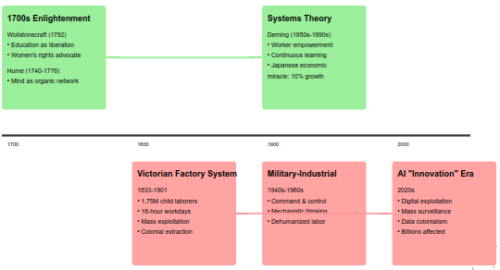

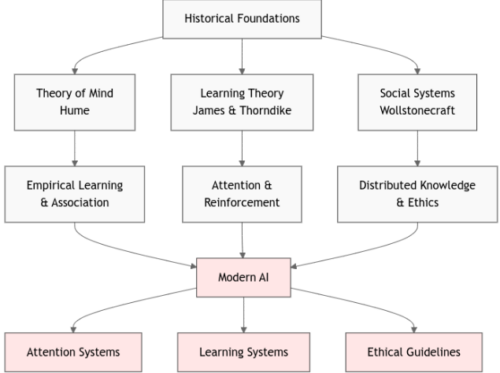

The article frames it all through a history of “layers of abstraction” in modern programming alone. Assembly gave way to Python, and Python is giving way to English. Each layer makes the previous skill set less necessary while making output more abundant. Thompson treats this as a history of programming languages, with no connection to the human condition in technology domains.

The problem with developers leaning so heavily on AI companies today is so much bigger and more dangerous than what the NYT reports.

Abstraction Is Civilization

Abstraction isn’t a feature of software engineering. It’s the operating logic of every major technological transition. Who makes fire anymore instead of pushing a button? Who carries water in a bucket instead of turning a tap? The mechanism disappears into the interface. The knowledge doesn’t vanish — it gets embedded in infrastructure and then forgotten by its users. That’s not a side effect. That’s the definition of progress.

What Thompson documents without quite naming is the moment when the act of instructing machines itself becomes the thing being abstracted away. Each layer makes the previous layer’s expertise less economically necessary. And each layer moves users further from the substrate — the actual material they’re working with.

This has a name, and a book, and the book is one nobody in Silicon Valley seems to be reading but everyone there should read immediately.

Zen and the Art of Motorcycle Maintenance

Robert Pirsig’s 1974 Zen and the Art of Motorcycle Maintenance is an attack on the split between what he calls romantic and classical understanding. The romantic rides the motorcycle and enjoys the wind. The classicist understands the engine and can fix it. Pirsig’s argument isn’t that one is better. It’s that Quality requires both, and a civilization that treats them as separate categories is already in trouble.

The romantic who can’t maintain the machine becomes dependent on systems he can’t evaluate. The classicist who can’t see the whole loses the capacity to ask whether the machine should exist.

What Thompson’s article documents, without the Pirsig lens, is the entire software industry migrating from classical to romantic understanding of its own product. The developers he interviews are thrilled to stop maintaining the engine. One tech executive, Anil Dash, provides the framing the piece hangs on: in coding, AI takes away the drudgery and leaves the soulful parts to you.

Pirsig would recognize this immediately as the exact attitude he spent 400 pages diagnosing as the root of the problem.

The motorcycle maintenance parallel is almost literal. Pirsig’s narrator watches his friends refuse to learn how their BMW works, then get stranded and resentful when it breaks. The vibe coders in Thompson’s piece are writing software they can’t read, shipping code they can’t debug, and calling it liberation. Pirsig’s friends called their ignorance freedom from technology too.

And Pirsig’s answer, that care, attentiveness, and direct engagement with the material are what Quality actually is, maps cleanly onto the question of what happens when nobody in the production chain can tell you whether the output is good.

The Drudgery Is the Curriculum

The piece profiles junior developers who have never worked without AI and frames them as the fortunate generation. Pirsig would frame them as people who learned to drive without ever opening the hood. Some will be fine drivers. None will become mechanics. And you won’t know which ones could have, because the pathway that would have revealed it no longer exists.

You need mechanics and drivers, and some people can be both. The path to becoming a mechanic runs through the drudgery: you learn what a function does by writing one badly, debugging it, and rewriting it. The “soulful part” Dash celebrates (taste, judgment, knowing whether the output is good) is developed through the very labor being automated away.

You don’t have to understand a spark plug to drive. But someone does. And the person who becomes that someone does it by working with spark plugs, not by describing spark plugs to an oracle.

The German education system has an answer to this. The Ausbildung system treats craft mastery as a legitimate intellectual achievement, not a consolation prize for people who didn’t make it to university. A Meister has a protected title, a defined body of knowledge, and social standing that reflects actual competence. The system assumes society needs people who understand the substrate, and builds institutions to produce them.

The Anglo-American model does the opposite. It treats abstraction as the only direction of advancement. The person who understands the engine is supposed to aspire to stop touching it. Management is the reward for competence. The whole incentive structure says: get away from the material as fast as you can.

Which is exactly the value system driving this moment. The developers in Thompson’s article aren’t just adopting a tool. They’re enacting a cultural assumption that proximity to the machine is low-status work. Prompting is management. Coding is labor. The celebration isn’t really about productivity. It’s about class migration.

The Oldest Abstraction Layer

The pattern goes much deeper than software. The Church as an abstraction layer is a systemic abuse platform. It sat between people and knowledge the same way the API sits between the developer and the code. You don’t read scripture yourself, you receive interpretation. You don’t investigate nature, you accept doctrine. The interface was the institution, and the institution’s power depended on nobody going around it to touch the substrate directly.

Descartes’ move was radical precisely because he said: I can reason from the ground up, without the interface. Cogito ergo sum is a mechanic’s statement. I’m going to open the hood myself. And it nearly got him killed! He had watched what the Church did to Galileo over “Dialogue Concerning the Two Chief World Systems” and delayed publishing for years.

Galileo’s book was banned, and he was sentenced to a light regimen of penance and to imprisonment at the discretion of Church inquisitors. After one day in prison, his punishment was commuted to “villa arrest” for the rest of his life. He died in 1642. More than 300 years would pass before the Church, in 1992 and after 13 years of internal debate, admitted Galileo was right and cleared his name of heresy.

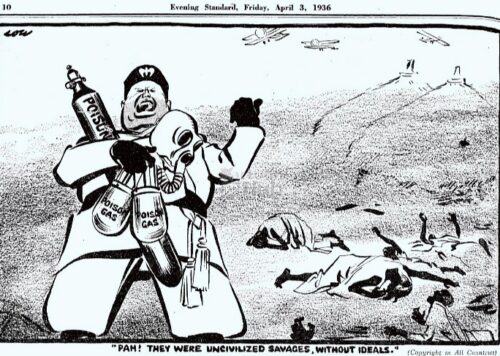

Speaking of being killed for being intelligent: the women the Church targeted and burned as “witches” were, in large part, people with empirical substrate knowledge such as herbalism, midwifery, and local ecology. They understood how things worked at the material level, which the Church perceived as a threat to its domination over obedient, unthinking adherents. The “witches” were simply the mechanics of their day, who knew how things actually worked and why. The most intelligent women were eliminated not because their knowledge was wrong but because it was unmediated. They didn’t route through the authorized, hierarchical abstraction layer of a few men who demanded total control. The threat was never any actual “magic” or “evil”. The only threat was their ability to think and to reason, and therefore their freedom and independence from the Church.

One example: these women had brooms to sweep and clean with, and cats to keep vermin away. The Church ran disinformation campaigns against brooms and cats, using them as pretexts to burn women to death for their ability to maintain healthy living. Killing so many women and their cats directly led to the great plague deaths, given the resulting explosion of filthy, flea-infested rats.

The ability to think was the drudgery the Church tried to prevent. Independent reasoning was recast as heresy. Direct observation was denounced as witchcraft. An abstraction layer’s first priority has always been to make going around it structurally impossible (what venture capitalists today call a “digital moat”) and to redefine competence as mere fluency in the permitted interface. “She’s a witch! Does she float?” is the comedic version, as famously depicted by Monty Python. If a woman had learned how to swim, she was a witch and had to be burned to death. If she hadn’t learned to swim, she drowned. The Church used double binds like this to kill those with intelligence and independence.

Brands as Religions, Models as Priests

Shine a proper historical spotlight on Silicon Valley and you should see exactly what AI is doing for the venture capitalists. The priest class had specific structural features: they controlled access to the text, they interpreted it for you, they told you the interpretation was correct, and you had no independent way to verify. The model does all four.

The brand-as-religion parallel explains the loyalty Thompson documents. Those developers aren’t just using a tool. They’re expressing faith. The agent says “Implementation complete!” and they believe it the same way a congregant believes the benediction. One developer maintains what Thompson calls “a stern Ten Commandments” — behavioral rules for his AI agent — and discovers that emotional language mysteriously improves performance without understanding why. That’s not engineering. That’s liturgy.

The dependency structure is identical too. The Church’s power wasn’t primarily theological. It was infrastructural. Once it controlled the hospitals, the schools, the record-keeping, the calendar, you couldn’t leave even if you stopped believing. You were locked in by integration, not conviction. Which is the model provider strategy in plain sight: get into the IDE, the workflow, the deployment pipeline, make yourself the substrate of daily practice. At that point belief is optional. Dependency is sufficient.

Pirsig’s Quality, Descartes’ cogito, and the herbalist’s direct knowledge of what the plant does are all the same move: going around the abstraction layer to touch the thing itself. And in every era, the abstraction layer’s response is the same: make that move unnecessary, then impossible, then unthinkable.

Thompson’s article ends by suggesting that “abstraction may be coming for us all.” He’s right. The question he doesn’t ask is who is represented by those taking control over the public abstraction layer, and what happens to those being forcibly pushed onto the “other” side.

Historian protip: Abstraction layers are the preferred instruments of authoritarians committing mass violence.