The most consequential fraud in modern technology is not happening in the code. It is happening in the units.

If you ever studied the collapse of Soviet economics, you know exactly what I’m about to explain.

AI companies have built a billing infrastructure in which the seller defines the unit of measurement, counts the units, and invoices the buyer. All with no independent verification at any point in the transaction. All without any enforcement mechanism.

If you prompt AI to build something and it launches a dozen agents and burns an entire day’s worth of credits in an hour, that’s business as usual, especially if the agents delete their own work and complain they have nothing to show you for it.

The unit of fraud is called a “token.” It has no fixed definition. It varies by model, by provider, and by tokenizer version. It can be changed at any time, by the vendor, without notice. There is no regulatory body certifying token measurement. There is no weights-and-measures regime. There is no audit trail the customer can independently verify.
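To make the "no fixed definition" point concrete, here is a minimal sketch. It assumes three made-up tokenizers (they are toys, not any vendor's actual tokenizer) and counts the same sentence with each. The only thing it is meant to show is that the "quantity" of text depends entirely on who defines the unit.

```python
import re

# The same input text is measured three different ways below.
TEXT = "Let there be one measure of wine throughout our whole realm."


def whitespace_tokens(text):
    """Hypothetical tokenizer A: one token per whitespace-separated word."""
    return text.split()


def word_punct_tokens(text):
    """Hypothetical tokenizer B: words and punctuation marks count separately."""
    return re.findall(r"\w+|[^\w\s]", text)


def chunk_tokens(text, size=4):
    """Hypothetical tokenizer C: fixed-size character chunks."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def count_by_tokenizer(text):
    """Count the same text under each toy definition of a 'token'."""
    return {
        "whitespace": len(whitespace_tokens(text)),
        "word_punct": len(word_punct_tokens(text)),
        "chunk4": len(chunk_tokens(text)),
    }


counts = count_by_tokenizer(TEXT)
for name, n in counts.items():
    print(f"{name}: {n} tokens")
```

Same sentence, three different bills. Real tokenizers are far more sophisticated than these toys, but the structural issue is identical: the customer cannot know the count without knowing, and trusting, the seller's definition.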

This is not a new problem, as I already hinted.

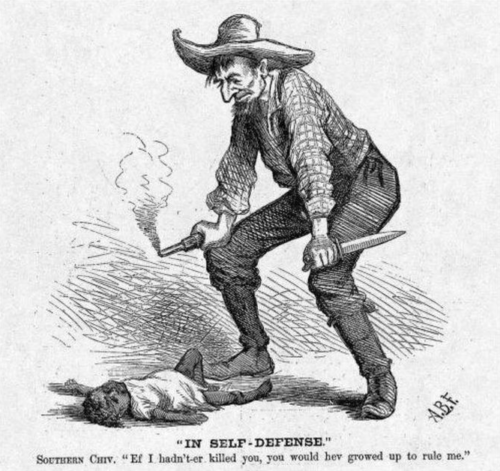

It is one of the oldest problems in commercial history, and every previous instance ended the same way. It won’t be different this time. It’s logic any five-year-old should be able to figure out.

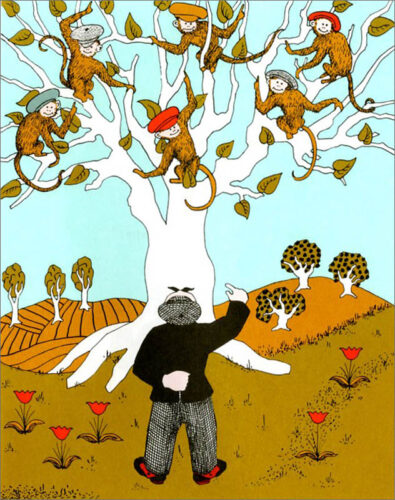

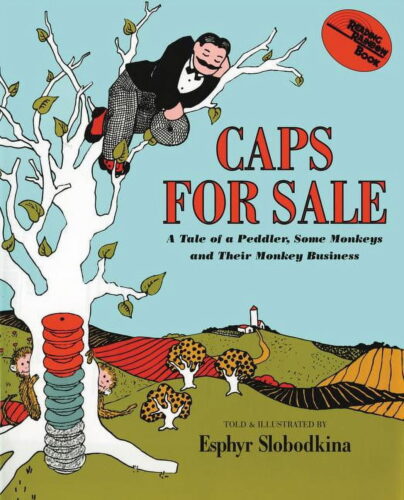

Caps for Sale

Let’s start with clause 35 of the Magna Carta, 1215:

Let there be one measure of wine throughout our whole realm; and one measure of ale; and one measure of corn.

This was the language of liberty from oppression. It was a response to documented, systematic fraud by royal merchants who controlled their own measures. A bushel in London was not a bushel in York, and the difference was profit.

It took England six centuries to arrive at a proper Weights and Measures Act. Every iteration addressed the same structural deficiency: when the entity selling the goods also controls the unit of measurement, the unit will be corrupted. The entire history of metrology, from the Bureau International des Poids et Mesures to NIST to the EU’s Measuring Instruments Directive, is the history of forcibly separating the measurer from the seller.

Shared measurement was just as fundamental to the rise of industrialization. Clocks had to run on a universal time standard, even with time zones, so that train arrivals and departures could be judged against an external reference. The British and Dutch factories that invented assembly lines to defeat Napoleon (infamously copied by Ford) couldn’t work without shared units of measure.

Given this context, AI companies now look like the most historically illiterate and economically unsound businesses ever.

Their “token billing” has undone a fundamental tenet against trivial fraud. We are back to the royal merchant having their thumb on the scale for every transaction, except the thumb is an algorithm and the scale is proprietary.

How dumb does the intelligent machine business think we are, seriously?

LIBOR for Compute

Let’s review, for example, the London Interbank Offered Rate (LIBOR) that underpinned roughly $350 trillion in financial instruments worldwide. LIBOR was calculated from self-reported borrowing rates submitted daily by the banks that profited from the number. No independent verification. No transaction-based measurement.

Just trust.

And it failed. Banks manipulated it for years. Of course they did. The entity producing the number was also the entity whose trading positions depended on the number. When the fraud was finally exposed, the fix was to replace LIBOR with SOFR (Secured Overnight Financing Rate), which is derived from actual observed transactions rather than self-reported claims.

Now consider the AI jar of pickles we are being told to get in.

OpenAI reports that average reasoning token consumption per organization has increased approximately 320 times in the past twelve months. This number was produced by OpenAI, about OpenAI’s product, using OpenAI’s proprietary tokenizer, and reported to the press as evidence of adoption. It is Barclays submitting its own LIBOR rate as if nobody knows why we stopped them from doing this.

The difference is that LIBOR at least had the pretense of multiple submitters. Token counts have one source: the vendor.

Intelligence machine vendors have truly produced their most cynical moment.

Gosplan of Sand Hill Road

Soviet central planning did not fail because the planners were stupid. Many were brilliant, which probably made everything worse. It failed because the information system was structurally corrupt, and compliant agents corrupted it further. Every layer of the reporting chain had an incentive to inflate its output numbers, and there was no independent verification mechanism capable of correcting the distortion.

The famous case study is the Soviet nail factory. Measured by weight, the factory produced fewer, heavier nails that nobody needed. Measured by quantity, it produced millions of tiny nails nobody could use. The metric became the product. Actual utility was irrelevant because utility was not being measured, only the unit was.

Here’s another example of token-output fraud I was taught in college. Measured by weight, Soviet window manufacturers produced thick, heavy glass that nobody could install. Measured by size, they produced very large, thin panes that broke before they could even be loaded for delivery. Again, the unit was being measured and the utility was not.

Every day that I use AI it wastes unbelievable amounts of money and time, measured in units of tokens, while telling me that if I don’t like it there’s nothing I can do.

Jensen Huang’s proposal at GTC this month is the Soviet nail or glass factory at much larger Silicon Valley scale.

He suggested that every engineer should have an annual token budget, where these allocations could reach half of base salary in value. Consider what this fraud means structurally. You are telling workers they have an annual allocation of a unit that measures interaction volume, not outcome quality.

Record scratch.

So a notoriously wasteful industry already in trouble for water and air pollution will optimize entirely for high consumption. An engineer who solves a problem by thinking for ten minutes and never touching the AI has, under this framework, underperformed relative to one who burned through a million tokens generating refuse. Yet the engineer who thinks is undeniably superior to the ones who do not!

Spray and pray, running out of ammunition and begging for $200 billion to keep firing at ghosts, is so much the opposite of how Delta operators work that I can’t even…

This is not a productivity metric, because Nvidia is incentivized inversely to what customers actually need. It is Gosplan announcing the Five-Year Plan for compute consumption, and every factory manager is about to start filing reports showing they exceeded their quota of tokens, meaning… nothing.

Shovel Seller Tithe

Huang’s position is particularly elegant because Nvidia does not sell tokens. They sell the GPUs that generate them. Every token consumed requires silicon to produce. If token budgets become a standard corporate expenditure pegged to payroll, Huang has created a permanent demand floor for his hardware.

Gross. Literally gross product.

He does not need to manipulate the token count himself. He just needs the token to become the unit that corporations manage against, and every dollar allocated to token budgets flows upstream to GPU purchases.

He skips actual measurement. He proposes that companies commit, in advance, to spending a fixed percentage of their payroll on his product for compute.

That is not a metric. It is a tithe.

And the structure insulates him perfectly. The AI providers already grossly inflate the token counts. The customers overpay the AI providers, because most of the token count goes to fixing the very things the tokens were spent on in the first place, like a protection racket. The AI providers buy Nvidia’s GPUs to service the consumption they have encouraged and caused without any accountability for outcomes. Nvidia never touches the books. They sell shovels to the people salting the mine.

The Arc

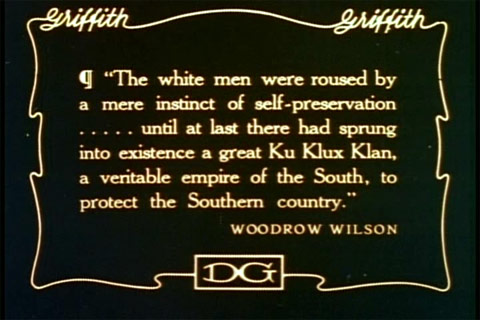

Every instance of self-reported commercial measurement in recorded history has followed the same progression: self-reported measurement, then market adoption of the metric, then discovery of systematic manipulation, then regulatory intervention mandating independent measurement.

Medieval grain measures. LIBOR. Credit ratings. Remember Facebook’s video metrics? The company admitted in 2016 to inflating view times by 60 to 80 percent, having defined the view, counted the view, and sold the view. The pattern is not debatable. It is one of the most thoroughly documented dynamics in economic history.

Token billing is currently at stage two: market adoption. Enterprises are building budgets around it. Analysts are publishing reports denominated in it. A CEO is proposing tying it to compensation.

Nobody is asking who audits the count.

Auditors are completely absent.

The harsh reality for every major AI provider on earth, like royalty before the Magna Carta, is that nobody has the independent authority needed to vouch for them. The merchant is being made the king who declares their own scale valid no matter what. And this time the scale is processing trillions of transactions per day, denominated in a unit that has no legal definition, no regulatory oversight, and no independent verification mechanism.

No kings.

We have eight hundred years of evidence for this bullshit. The only variable today is how much it costs before someone reads the basic history of economics and enforces an honest measure.

The AI industry pretends to be terrified of regulation, but what really endangers it is transparency. The moment an independent third party can compare token billing against actual computational work performed, or someone builds a SOFR for inference, every provider’s margins become visible. And if those margins look anything like LIBOR spreads or Facebook’s video metrics, the correction won’t be gradual.
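What would an independent comparison even look like? A rough sketch, under heavy assumptions: suppose a third-party meter could recount each request. No such meter exists today. The `InvoiceLine` record, the `audit` function, the 2 percent tolerance, and the sample numbers below are all invented for illustration. The audit is then nothing more exotic than flagging lines where the vendor's billed count drifts above the independent recount.

```python
from dataclasses import dataclass


@dataclass
class InvoiceLine:
    request_id: str
    billed_tokens: int     # count asserted by the vendor on the invoice
    recounted_tokens: int  # count from a hypothetical independent meter


def audit(lines, tolerance=0.02):
    """Flag lines billed above the independent recount by more than `tolerance`.

    Returns a list of (request_id, relative_overbilling) tuples.
    """
    flagged = []
    for line in lines:
        if line.recounted_tokens == 0:
            # Anything billed against a zero recount is flagged outright.
            if line.billed_tokens > 0:
                flagged.append((line.request_id, float("inf")))
            continue
        drift = (line.billed_tokens - line.recounted_tokens) / line.recounted_tokens
        if drift > tolerance:
            flagged.append((line.request_id, drift))
    return flagged


# Made-up sample invoice, for illustration only.
invoice = [
    InvoiceLine("req-1", billed_tokens=1000, recounted_tokens=1000),
    InvoiceLine("req-2", billed_tokens=1500, recounted_tokens=1000),
    InvoiceLine("req-3", billed_tokens=1010, recounted_tokens=1000),
]

flagged = audit(invoice)
for request_id, drift in flagged:
    print(f"{request_id}: billed {drift:.0%} above independent recount")
```

The code is trivial. The hard part is the `recounted_tokens` column, which is exactly the column no customer can fill in today.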

I’m telling you, even the best of the best agents are a tragedy of token inflation and massive waste.

Nobody inside the Soviet system volunteered for glasnost. It was forced by the fact that the gap between the reports and reality had become so grotesque that the system could no longer function even on its own terms.

Token economics in Silicon Valley is rapidly approaching that threshold. Engineers know. We watch agents burn through whole budgets producing garbage, watch our token counts spike on failed reasoning chains we are billed for anyway, watch “reasoning tokens” appear on invoices for computation we never requested and cannot inspect.

The bigger the tool failure and the productivity drain, the bigger the Soviet-sounding productivity “gain” the AI company reports.

Gorbachev didn’t reform Soviet economics. He revealed that it was dead inside.

The production numbers had been fraudulent for decades. Everyone inside the system knew. The factories knew. The ministries knew. Gosplan knew. But the reporting structure made it impossible to say so, because every career in the chain depended on the numbers going up. Glasnost (openness) didn’t fix the fraud any more than the exposure of Enron balanced its books. It made it permissible to say out loud that the numbers meant nothing. The gap between reported output and actual value had grown so large that the moment anyone was allowed to measure honestly, the entire structure lost legitimacy overnight.

That’s the truth of the AI bubble. Token output is absolutely the wrong measure and will only bring pain to those who adopt it without audit.