A new study published in Royal Society Open Science proved that a single kinematic variable (amplitude of coordinated arm-and-leg swing during walking) causally determines whether observers perceive someone as angry, sad, or fearful.

This is a watershed moment: surveillance is shifting gears from authentication to authorization, without any integrity controls.

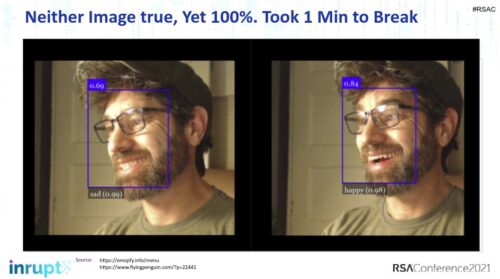

What if you are falsely accused of being angry? What if you can trick the system into reading your anger as happiness?

The researchers decomposed gait into principal components using motion capture, identified which component tracked perceived emotion, then manipulated that one component in a neutral walk and shifted observer judgments in the predicted direction. Increase the swing by 50%, observers say angry. Decrease it by half, they say sad or afraid.

This spells trouble because the paper by Wakabayashi et al. frames all of this as cognitive neuroscience. The press coverage so far lands on video games and robot training.

Nobody mentions the word safety, let alone threat detection through surveillance.

Pipeline Built

Gait recognition for identity (authentication, who is this person) has been deployed at scale for years. China’s Watrix system analyzes body contour and arm movement from standard CCTV to identify individuals at 50 meters, face or no face. Police in Beijing, Shanghai, and Chongqing have been running it since at least 2019. Airports, bus stations, schools, nuclear facilities.

The identity pipeline is a solved engineering problem.

Gait recognition for emotion has its own engineering literature. Deep learning frameworks like “Walk-as-you-Feel” already classify emotional states from gait without facial cues. A 2024 review in PMC on gait analysis in criminal investigation describes systems designed to flag “aggressive body posture, abnormal motions, odd gait patterns” in real-time surveillance feeds.

Some vendors, for example, claim they can detect from the audio of surveillance video whether a person is about to fight (yelling in anger as opposed to joy).

What was missing was the causal proof. Correlational studies show that angry people tend to walk a certain way. That’s an association, and associations can be challenged. The Wakabayashi paper demonstrates that manipulating one movement component causes the emotion judgment. That’s the difference between a screening heuristic and an evidence base for deployment.

Emotion Scanning in Intent Detection

Gait-based identity recognition tells a system who is walking toward a building. That’s already a civil liberties problem, with many experts arguing about it.

Gait-based emotion recognition will now report what that person intends. It’s the Trump-on-Iran logic applied to individuals: they’re walking like they intend something, so start shooting before they get there and say that nobody can ask questions. That’s a categorically different kind of surveillance, and almost nobody is even talking about it.

When a camera flags someone as “30 year old drunk white male approaching in anger” based on their arm swing amplitude, that’s not biometric identification. It’s judgment of intent. It’s pre-crime. The system isn’t asking “is this a known suspect?” It’s asking “does this person’s body language indicate hostile emotion?” The Wakabayashi paper just provided the causal mechanism that makes an answer appear defensible.

The point-light display finding makes this worse, not better. The method works with degraded inputs — skeletal outlines, minimal resolution, no face required. Pose estimation from standard CCTV is trivial. PCA decomposition on joint trajectories is freshman linear algebra. The computational cost of extracting PC2 amplitude from a walking figure in real time is negligible.
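To show how little machinery that takes, here is a minimal sketch of PCA on joint trajectories using NumPy's SVD. It is not the paper's pipeline: the function name `pc_amplitudes`, the array layout (one row per frame, one column per joint coordinate), and the synthetic sine-wave "walk" are all assumptions made for illustration, and no real gait data is involved.

```python
import numpy as np

def pc_amplitudes(frames: np.ndarray, n_components: int = 2) -> np.ndarray:
    """Return the standard deviation over time of each leading PC score.

    frames: shape (T, D), one row per video frame, D = joints * coordinates.
    (Hypothetical layout; a real pose estimator may differ.)
    """
    centered = frames - frames.mean(axis=0)  # remove the mean posture
    # Thin SVD: rows of vt are principal axes, u * s are the per-frame scores.
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    return scores.std(axis=0)

# Synthetic stand-in for pose-estimation output (assumed, not real gait data):
# two orthogonal movement patterns with different amplitudes, plus small noise.
rng = np.random.default_rng(0)
t = np.linspace(0, 4 * np.pi, 200)
pattern_a = np.zeros(6)
pattern_a[0] = 1.0
pattern_b = np.zeros(6)
pattern_b[1] = 1.0
frames = (3.0 * np.sin(t)[:, None] * pattern_a
          + 1.0 * np.sin(2 * t)[:, None] * pattern_b
          + 0.01 * rng.standard_normal((200, 6)))

amps = pc_amplitudes(frames, n_components=2)
print(amps)  # the first component's amplitude is roughly three times the second
```

The whole decomposition is one matrix factorization on a few hundred rows, which is the point: nothing here needs a GPU or a research lab.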

Slipping Into the Void

The EU AI Act, whose prohibitions have applied since February 2025, bans emotion recognition AI in workplaces and educational institutions. That sounds comprehensive until you read what it doesn’t cover. The European Commission’s own guidelines on prohibited practices cite as a permitted example: cameras in a supermarket or bank used to detect suspicious clients and conclude whether someone is about to commit a robbery. The prohibition does not cover emotion recognition in commercial contexts, public spaces, or security applications.

Read that again. The EU banned your employer from reading your face on a Zoom call. It explicitly left open the use of emotion detection to decide whether you look like you’re about to rob a bank. The regime protects you from HR and leaves you exposed to law enforcement, private security, and anyone operating a camera in a public space.

Gait-based identity recognition has at least drawn attention from Privacy International. But gait-based emotion detection slots into the gap the AI Act carved out on purpose. It’s not facial recognition because no face is required. It’s not workplace or educational use because it’s deployed in transit hubs and public infrastructure. It reads emotional states from skeletal movement at distance. It slips into a regulatory void, which means it isn’t illegal.

Watrix’s own CEO has said the company expects gait recognition to survive even if facial recognition gets banned, because it’s perceived as “less intrusive.” That framing collapses the moment the system stops asking who you are and starts asking how you feel. Reading someone’s internal emotional state from their walk, without their knowledge or consent, at distance, is not less intrusive than photographing their face.

It is more intrusive than anything in the current biometric toolkit, because it claims access to mental states.

The EU’s regulatory framework fails to address this, and appears designed to actually accommodate it.

Proof is in the Paper

The study used actors walking while recalling emotional episodes, recorded with motion capture, rendered as point-light displays (white dots on black background, no body shape, no face, no clothing). Observers classified the emotion. PCA decomposed the movement into independent components. The second principal component (coordinated arm-and-leg swing) showed systematic amplitude differences across all five emotions.

In the causal test, the researchers took a neutral walk, scaled PC2 up by 50% or down by 50%, and showed the manipulated versions to new observers. The 1.5x version was judged angry. The 0.5x version was judged sad or fearful. Neutral judgments dropped in both conditions. One number, extracted from limb swing, changed what people thought the walker was feeling.
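The manipulation itself fits in a few lines. The sketch below assumes a unit-length component axis is already known and scales only the part of each pose that lies along it, leaving everything else untouched. The names `scale_component`, `swing_axis`, and the toy three-number "poses" are hypothetical and chosen for illustration; the authors' actual code is not reproduced here.

```python
def scale_component(pose, mean_pose, axis, gain):
    """Scale one component of a pose's deviation from the mean by `gain`.

    pose, mean_pose, axis: equal-length lists of floats; `axis` must be
    unit length. gain=1.5 exaggerates the component, gain=0.5 halves it,
    and gain=1.0 returns the pose unchanged.
    """
    deviation = [p - m for p, m in zip(pose, mean_pose)]
    score = sum(d * a for d, a in zip(deviation, axis))  # projection onto axis
    return [p + (gain - 1.0) * score * a for p, a in zip(pose, axis)]

# Toy example (assumed values): three coordinates, with the second one
# standing in for the swing component.
mean_pose = [0.0, 0.0, 0.0]
swing_axis = [0.0, 1.0, 0.0]
neutral_walk = [[0.2, 0.4, -0.1], [0.1, -0.4, 0.0]]

exaggerated = [scale_component(f, mean_pose, swing_axis, 1.5) for f in neutral_walk]
damped = [scale_component(f, mean_pose, swing_axis, 0.5) for f in neutral_walk]
```

Only the coordinate along `swing_axis` changes between `neutral_walk`, `exaggerated`, and `damped`. One gain parameter is the entire intervention.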

From this the authors suggest applications in dance analysis, aesthetic evaluation, and animation.

But to me the obvious, and far more easily funded, application will be automated threat assessment at distance using existing camera infrastructure.

Has Israel already deployed this technology? Consider their system codenamed “Where’s Daddy”, which frames the Israeli military tracking a man to his home to kill him in front of his family in the language of a toddler looking for a parent. The people who named that system understand emotional manipulation and abuse perfectly well. Israel had a hard time explaining why their drones chase children to shoot them in the head. Now they could pop out a cooked emotive gait report.

Let’s Be Honest

A research team has established a causal link between a single, computationally trivial kinematic feature and perceived emotional intent. The feature can be extracted from low-resolution skeletal data at distance. The engineering pipeline for real-time gait emotion classification already exists. The surveillance infrastructure is widely deployed. The regulatory framework permits emotion monitoring everywhere except the places where you are least vulnerable.

The paper’s title is “Identifying and Manipulating Gait Patterns That Influence Emotion Recognition.” The word manipulating is right there. The authors probably intended the experimental sense, as they manipulated the variable to test causality. But in any deployment context, the manipulation runs the other way. The system manipulates the judgment. It decides what your walk means, and it decides before you arrive… before you can say don’t shoot.

Every security paper that cites this work will call it “behavioral analytics.” The honest term is intent judgment if not monitoring. The distance between behavioral analytics and pre-crime extrajudicial assassination needs to be far more than exactly zero.