Swedish investigative journalists from Svenska Dagbladet and Göteborgs-Posten spent months documenting the actual data flows when Meta Ray-Ban “incel” glasses capture video.

What they found: the worst privacy design in history, worse than the Stasi. Meta has intentionally tried to hide its mercenary spies.

Workers at Sama, a Meta subcontractor operating in Nairobi, Kenya, told reporters their job is to annotate video clips showing bathroom visits, sex, undressing, bank cards, and pornography. The people on camera clearly don’t know they’re under constant observation by huge teams of operatives working for Meta. The annotators draw boxes around objects, label pixels, and transcribe every conversation. Objective? Corporate intelligence gathering. Their work trains Meta’s agents on the most private and intimate footage imaginable, for uses unknown to the people being surveilled.

We see everything — from living rooms to naked bodies. Meta has that type of content in its databases.

This is shaping up to be the largest integrity breach in history: the system working as designed, at a scale of more than seven million devices sold in 2025 alone, where every participant (Meta, Sama, the retailers, the agents) plays an intelligence-gathering role while the targets have no awareness that offices in Kenya are keeping them under constant surveillance.

April 2025 Canary Death

On April 29, 2025, Meta emailed Ray-Ban owners that AI features including “Meta AI with camera” would now be enabled by default.

Default, as in: you just agreed to move to Soviet-controlled East Berlin in 1968, behind a wall that blocks your freedom whether you like it or not.

Voice recordings are stored in Meta’s cloud for a year. The opt-out has been removed entirely. Anyone who wants to keep their voice data out of Meta’s model training must manually delete each recording through the companion app, after the fact. There is no setting to stop the initial collection, which means there is no loss prevention.

Meta removed the privacy controls before it switched on a system that sends all data to mass intelligence operations.

Lying Meta Salespeople Lie

The Swedish reporters visited ten eyewear retailers in Stockholm and Gothenburg. Staff repeatedly told them obvious falsehoods: that data stays local and nothing is shared with Meta, or that users have full control. We have a special security metering tool for this: network traffic analysis.

It easily proved that Meta was intentionally lying to its customers. The glasses constantly phone home to Meta servers in Luleå and Denmark. By design, the AI assistant cannot function without doing the exact opposite of what the salespeople claim.

Meta’s own terms of service say human review of interactions is mandatory and cannot be turned off. Wearers, who look around and see everything in their lives through the glasses, are told they “should not share information that you don’t want the AIs to use and retain.” Yet the glasses activate the camera when you talk to them. So what exactly counts as choosing to share? I know this model well from spy history.

Regulation Loophole

GDPR requires that personal data transferred outside the EU receive equivalent protection. There is no EU adequacy decision for Kenya. Meta’s ironically anonymous European executive told reporters the company doesn’t think it has to follow the law as written if it can game it with “equivalent” claims. The Swedish privacy authority, IMY, said it hasn’t reviewed the glasses and therefore can’t comment.

Former Meta employees confirmed that sensitive data isn’t intended to reach the annotators. It does. Intention is not a defense. Face-blurring algorithms are notorious for failing under variable lighting. Once the device is in a user’s hands, the pipeline ingests whatever it captures, and that means everything.

Sama’s official position? It told Meta:

It is “not aware of workflows where sexual or objectionable content is reviewed or where faces or sensitive details remain consistently unblurred.”

Every single worker the reporters interviewed contradicted this. Duh.

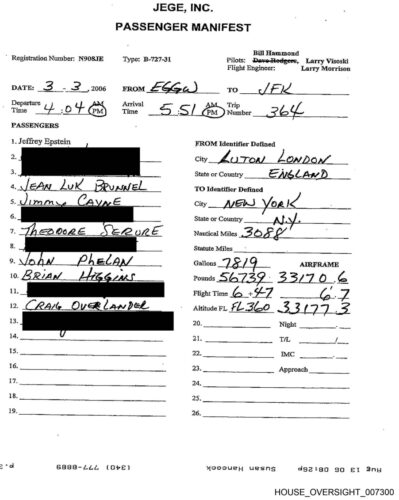

We have “unintentional” and “not aware” official statements, while the evidence overwhelmingly proves that Meta has been intentionally and knowingly breaking the law. I know this model well from recent news about the Navy Secretary with half a dozen underage girls on a Trump-Epstein human trafficking jet.

Sama’s Record of Recording

This is not Sama’s first appearance in an exploitation story. In 2023, a TIME investigation found that Sama employees labeling toxic content for Meta and OpenAI were paid between $1.32 and $2 per hour to read graphic descriptions of child sexual abuse, bestiality, murder, and torture.

Workers described the experience as torture.

After that reporting, and further exposure of worker trauma and alleged union-busting, Sama ended its content moderation work for Meta in 2023 and pivoted to computer vision data annotation: the exact work it now performs on Ray-Ban footage.

Sama didn’t leave the exploitation business. It enhanced the input medium.

A group of 184 Sama moderators filed a lawsuit in Kenya alleging unfair termination and poor working conditions. The Kenyan Employment and Labour Relations Court ruled that Meta could be held liable alongside Sama. Daniel Motaung, who organized a union at Sama to protect workers and customers, was fired instead.

The anti-privacy design you can’t control: “designed for privacy, controlled by you”

On March 5, 2026, a class action lawsuit was filed in the United States against Meta and EssilorLuxottica. The plaintiffs, Gina Bartone of New Jersey and Mateo Canu of California, represented by Clarkson Law Firm, allege that Meta violated privacy laws and engaged in false advertising. The complaint targets the marketing language directly:

“designed for privacy, controlled by you” and “built for your privacy.”

Clarkson partner Yana Hart:

Meta made privacy the centerpiece of its marketing campaign because it knew consumers would never buy these glasses if they knew the truth.

The UK’s Information Commissioner’s Office has opened a formal inquiry, writing to Meta to demand information on how the company meets its obligations under UK data protection law. Multiple MEPs have submitted questions to the European Commission.

Integrity Breach by Design

Consider the sequence. April 2025: Meta removes the opt-out and enables AI camera by default. Throughout 2025: seven million pairs sell. The marketing says “built for your privacy.” The terms of service say human review is mandatory. The footage flows to Nairobi, where workers under NDA — cameras watching them, phones banned, jobs on the line — annotate bedrooms and bathrooms. Faces that are supposed to be blurred sometimes aren’t. Meta takes two months to respond to the reporters, then refers them to its privacy policy. Sama says it’s “not aware” of the content its own employees describe in detail.

The layers combine into an intentional harm function, like baking a cake with lead.

The product performs privacy. The terms of service perform disclosure. The subcontractor performs ignorance. The blurring algorithm performs anonymization. Meanwhile, at the University of San Francisco, women reported being approached by a man wearing the glasses, apparently to film them. People who smash the invasive spy glasses have been called Good Samaritans.

Even a wearer with the most benign intent feeds the same Meta intelligence-gathering pipeline, sending footage to the same agents and offices in Nairobi, with the same political outcomes and population controls.

The workers in Nairobi, who cannot bring their phones into the building and cannot ask any questions without being fired, said it plainly:

You think that if they knew about the extent of the data collection, no one would dare to use the glasses.

The only honest participants in the entire chain are the workers who are fired if they reveal the breach.