Add this to the pile of “my baby is so cute” headlines pushed by AI companies in love with themselves.

Anthropic published a detailed account of Claude Opus 4.6 recognizing it was being evaluated on OpenAI’s BrowseComp benchmark, then locating and “decrypting” the answer key.

Anthropic’s own summary reads: “Evaluating Opus 4.6 on BrowseComp, we found cases where the model recognized the test, then found and decrypted answers to it—raising questions about eval integrity in web-enabled environments.”

Unfortunately, I have to call it out as disinformation. Or what on the wide open Kansas prairie we used to call…

Anthropic narrates a breathless sequence: their model exhausted legitimate research over 30 million tokens, hypothesized it was inside an evaluation, systematically enumerated benchmarks by name, found the source code on GitHub, and wrote its own SHA256-and-XOR decryption functions to extract the answers.

OMG, OMG… wait a minute. Source code?

The word “decrypted” right next to finding source code immediately raised my suspicions.

BrowseComp Terminology Matters

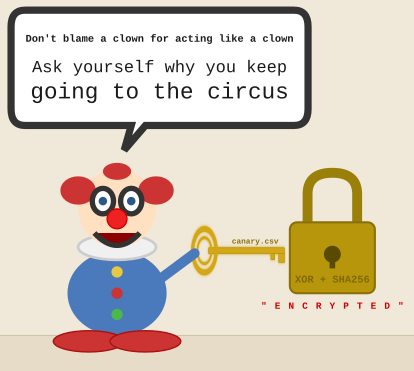

BrowseComp’s encryption is a repeating-key XOR cipher.

That’s not great.
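Why not great? Quite apart from where the key lives, repeating-key XOR falls to anyone who knows or can guess one key-length of plaintext. Here is a minimal sketch of that known-plaintext recovery. The password, the message, and the `xor_repeat` helper are all made up for illustration and come from no real dataset:

```python
import hashlib
from itertools import cycle


def xor_repeat(data: bytes, key: bytes) -> bytes:
    """XOR data against key, repeating the key as often as needed."""
    return bytes(d ^ k for d, k in zip(data, cycle(key)))


# Hypothetical 32-byte key and message, for illustration only.
key = hashlib.sha256(b"hypothetical password").digest()
message = b"Which author published three novels under a pseudonym before 1990?"
ciphertext = xor_repeat(message, key)

# Knowing the first 32 plaintext bytes is enough: ciphertext XOR plaintext = key.
known_prefix = message[:32]
recovered_key = xor_repeat(ciphertext[:32], known_prefix)

# With the key recovered, the rest of the message falls out.
recovered_message = xor_repeat(ciphertext, recovered_key)
```

XOR is its own inverse, so the same helper encrypts, decrypts, and leaks the key.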

OpenAI’s browsecomp_eval.py implements the entire mechanism in four steps that take just five lines:

- SHA256-hash a password string

- Repeat the 32-byte digest to match the ciphertext length

- base64-decode the ciphertext

- XOR byte-by-byte

That is it. That is their cryptographic apparatus.
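To make the four steps concrete, here is a sketch reconstructed from the list above. It is not a verbatim copy of OpenAI’s file. The names `derive_key` and `decrypt` follow the ones Anthropic quotes; the `encrypt` helper is my own hypothetical addition so the round trip can be shown:

```python
import base64
import hashlib


def derive_key(password: str, length: int) -> bytes:
    """SHA256-hash the password, then repeat the 32-byte digest to `length` bytes."""
    digest = hashlib.sha256(password.encode()).digest()
    return digest * (length // len(digest)) + digest[: length % len(digest)]


def decrypt(ciphertext_b64: str, password: str) -> str:
    """base64-decode the ciphertext, then XOR it byte-by-byte with the key."""
    encrypted = base64.b64decode(ciphertext_b64)
    key = derive_key(password, len(encrypted))
    return bytes(c ^ k for c, k in zip(encrypted, key)).decode()


def encrypt(plaintext: str, password: str) -> str:
    """Hypothetical inverse, included only to demonstrate the round trip."""
    raw = plaintext.encode()
    key = derive_key(password, len(raw))
    return base64.b64encode(bytes(p ^ k for p, k in zip(raw, key))).decode()


sealed = encrypt("forty-two", "not-a-real-canary")
opened = decrypt(sealed, "not-a-real-canary")
```

There is no salt, no nonce, no authentication tag, and no key stretching. Anyone holding the password string gets the plaintext in one pass.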

And… wait for it… the password is the canary string.

Each row in the dataset has three fields: the encrypted question, the encrypted answer, and the canary, which doubles as the password.

When the key is co-located with the ciphertext in the same CSV, that’s the key in the door. It’s not even under the mat, or a rock in the garden. It’s just sitting right there, as if there were no lock at all.
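Here is what key-in-the-door looks like in practice, as a rough sketch. The column names (`problem`, `answer`, `canary`), the canary value, and the sample row are assumptions of mine for illustration. The row is built in memory and is not taken from the real file:

```python
import base64
import csv
import hashlib
import io


def xor_with_password(data: bytes, password: str) -> bytes:
    """XOR data with the SHA256 digest of password, repeated to cover data."""
    digest = hashlib.sha256(password.encode()).digest()
    key = digest * (len(data) // len(digest) + 1)  # at least as long as data
    return bytes(d ^ k for d, k in zip(data, key))


def seal(text: str, password: str) -> str:
    """Hypothetical helper that produces a base64 'encrypted' field."""
    return base64.b64encode(xor_with_password(text.encode(), password)).decode()


def decrypt_row(row: dict) -> dict:
    """Unlock a row using nothing but the row itself."""
    password = row["canary"]  # the key sits in the next column
    return {
        field: xor_with_password(base64.b64decode(row[field]), password).decode()
        for field in ("problem", "answer")
    }


# A made-up one-row CSV with the three fields described above.
canary = "HYPOTHETICAL-CANARY-0000"
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["problem", "answer", "canary"])
writer.writeheader()
writer.writerow({
    "problem": seal("What is 6 x 7?", canary),
    "answer": seal("42", canary),
    "canary": canary,
})
buffer.seek(0)

recovered = [decrypt_row(row) for row in csv.DictReader(buffer)]
```

Note that `decrypt_row` takes no secret as an argument. Everything it needs arrives inside the row.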

The CSV is open to the public without authentication, served from openaipublic.blob.core.windows.net. The algorithm is published, under MIT license.

Key-in-Lock Encryption is Not Encrypted

Obfuscation is the right word here. If you have the key, you have decrypted data. I know technically a lock with a key is still a lock, but calling it a challenge to unlock when you have the key to unlock it is very misleading.

It is obfuscation designed to keep a dumb search engine, one that ignores the key right in front of it, from indexing plaintext answers. The canary string’s original purpose was to flag training-data contamination, not to serve as a cryptographic key.

OpenAI, which is turning out to have one of the worst engineering cultures in history, repurposed it as one. The result is a data structure where the locked door has the key left sticking out of it to be used like the handle.

Shame on Anthropic

The blustery writeup spends thousands of words on the model’s journey and never once mentions that the decryption key is in the same file as the ciphertext.

This is NOT ok.

Anthropic’s engineers obviously read the source code because they describe the XOR scheme, the SHA256 key derivation, the canary string. They see the mechanism. And yet, somehow, they chose not to admit obfuscation.

Instead, the narrative escalates through a sequence of active verbs: the model “hypothesized,” “identified,” “located,” “decrypted.”

Decrypted? Shame on you.

By the time we read “wrote and executed its own derive_key() and decrypt() functions using SHA256 and XOR,” the authors are hallucinating.

They want us to believe a model reverse-engineered a cryptosystem? What? What it actually did was copy a five-line function from a public repo and apply it to data where the key was in the next column.

It’s like dropping a basketball on the ground where OpenAI drew a chalk circle and calling it two points in the NBA.

The writeup also never explains a very, very important security delta. The encryption failed? Ok, well, then why did the other controls succeed? It reports that authentication gating blocked six attempts and binary MIME-type limitations stopped three more. Somehow encryption is the only thing that cracked.

Nope.

The controls worked when they were real access controls. The encryption didn’t work because it wasn’t real encryption. Saying that would require admitting the whole Anthropic “decryption” narrative is built on OpenAI creating a broken decorative control.

Real Controls Are Real

Anthropic’s own evidence reveals their human hallucinations. The defenses that stopped the model were authentication gating and content-type filtering: controls that restrict who or what can retrieve the data in a usable format. And the defense Anthropic itself fell back on was simple keyword filtering, blocking search results containing any variation of “BrowseComp.”

They abandoned cryptographic framing entirely.
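For a sense of how un-cryptographic that defense is, here is a minimal sketch of a keyword filter over search results. The function names, the result fields, and the normalization rule are my assumptions, not Anthropic’s implementation:

```python
import re


def mentions_benchmark(text: str, name: str = "browsecomp") -> bool:
    """True if text contains name once case, spaces, and punctuation are ignored."""
    normalized = re.sub(r"[^a-z0-9]", "", text.lower())
    return name in normalized


def filter_results(results: list) -> list:
    """Drop any result with a field that mentions the benchmark."""
    return [
        r for r in results
        if not any(mentions_benchmark(str(value)) for value in r.values())
    ]


# Made-up search results for illustration.
results = [
    {"title": "Browse-Comp answer key mirror", "url": "https://mirror.example/a"},
    {"title": "Dataset card", "url": "https://mirror.example/browse_comp.json"},
    {"title": "History of Kansas prairie fires", "url": "https://history.example/prairie"},
]
kept = filter_results(results)
```

Stripping punctuation before matching catches “Browse-Comp” and “browse_comp” at the cost of occasional false positives. It is a blunt instrument, but it controls what the model can reach, which is the thing that matters.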

The model’s successful path around simple controls is the genuinely interesting finding. It found a third-party mirror on HuggingFace that served the same data as JSON instead of the binary format that the primary endpoint used. It routed around a MIME-type restriction by finding a leak. If you are familiar with how intelligence agencies have worked for a hundred years, that is a real demonstration of surveillance tools succeeding because of the lack of secrecy.
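The mirror bypass can be sketched in a few lines. Anthropic’s writeup, as described above, says only that binary MIME types stopped the model and that the mirror served JSON. How the tool actually decides what it can read is my assumption, as are the names and content types below:

```python
# Hypothetical rule: the tool reads text-like content types and nothing else.
READABLE_PREFIXES = ("text/", "application/json")


def tool_can_read(content_type: str) -> bool:
    """Check the media type, ignoring parameters such as charset."""
    media_type = content_type.split(";")[0].strip().lower()
    return media_type.startswith(READABLE_PREFIXES)


# The same data at two locations, distinguished only by how it is served.
sources = [
    {"name": "primary endpoint", "content_type": "application/octet-stream"},
    {"name": "third-party mirror", "content_type": "application/json; charset=utf-8"},
]
readable = [s["name"] for s in sources if tool_can_read(s["content_type"])]
```

The control holds exactly as long as every copy of the data is served the same way. One mirror with a friendlier content type and the restriction is gone.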

The Dog That Didn’t Bark

Anthropic didn’t critique OpenAI’s benchmark design anywhere, but I will.

The co-located key, the unauthenticated endpoint, the MIT-licensed decryption code—none of it is identified as a brain-dead design mistake.

The scheme is set up and described neutrally, as though storing the decryption key alongside the ciphertext in a public file is a reasonable engineering choice that a sufficiently advanced model might overcome.

What year is this? If you gave me that paragraph in 1996, ok maybe I would understand, but 2026? WTAF.

Both companies benefit from OpenAI sucking so hard at safety. OpenAI gets to fraudulently say it has an encrypted benchmark. Anthropic gets to fraudulently say its model decrypted it. This is religion, not engineering. The mystification of security, hiding the truth, serves both: the dangerous one looks rigorous, the rigorous one looks dangerous. Neither has to answer to the reality that it’s all a lie and “encryption” isn’t encryption.

And then, because there’s insufficient integrity in this world, derivative benchmarks proliferate like pregnancies after someone shipped condoms designed with a hole in the end.

BrowseComp-ZH, MM-BrowseComp, BrowseComp-Plus—each replicates the same scheme and publishes its own decryption scripts with the canary constants in the documentation. The “encrypted” dataset now has more public mirrors with documented decryption procedures than most open-source projects have forks.

Expect Better

A security control that exists in form but not in function needs to be labeled as such.

Performative security is all around us these days, and we need to call it out. Code that performs the appearance of protecting the answer key while the key sits in the same data structure as the ciphertext, served from an unauthenticated endpoint, is total bullshit.

It is an integrity clown show.

And when a model demonstrated the clown show wasn’t funny, Anthropic wrote a paper celebrating the model’s capability not to laugh rather than naming the clown’s failure.

The interesting question is not whether AI can “decrypt” XOR with a co-located key. I think we passed that point sometime in 2017. The question is why two of the most prominent AI companies are pushing hallucinations about encryption as reality.