A Tesla in “Full Self-Driving” mode crashed through a bright red railroad crossing barrier in West Covina, California, on March 8th. NBC has documented over 40 similar incidents, but watching the barrier’s reflective surface fill the entire camera frame as the car plows through it is a sight to behold. Tesla’s latest and greatest “self-driving” design is the equivalent of “I can’t see and DGAF.”

The Sequence

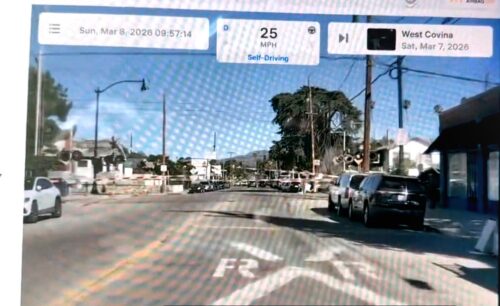

At 09:57:14 the car is doing 25 MPH in Self-Driving mode, cruising towards a giant white X and approaching lowered, high-visibility barriers.

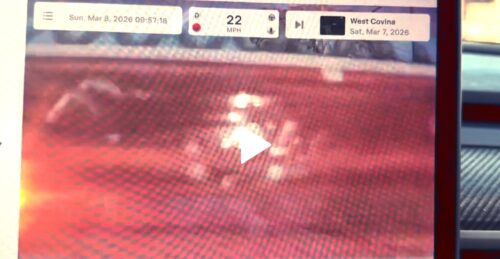

Four seconds later at 09:57:18 the entire camera frame is red because the barrier’s reflective surface is flush against the lens.

Speed dropped only from 25 to 22 MPH over those four seconds, meaning no braking intervention from FSD at all. The camera didn’t just fail to classify the barrier. The barrier occluded the entire camera view!
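
To put those numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the two speeds and timestamps above and assumes constant deceleration between the frames. The 0.7 g "firm braking" figure is my own illustrative assumption for a passenger car on dry pavement, not something taken from the footage or from Tesla.

```python
# Rough kinematics from the two dashcam frames (25 MPH at 09:57:14, 22 MPH at 09:57:18).
MPH_TO_MPS = 0.44704      # exact: 1609.344 m per mile / 3600 s per hour
G = 9.80665               # standard gravity, m/s^2
ASSUMED_FIRM_BRAKE_G = 0.7  # ASSUMPTION: illustrative firm-braking level, not measured

def mean_deceleration(v_start_mph, v_end_mph, seconds):
    """Average deceleration in m/s^2 over the interval."""
    return (v_start_mph - v_end_mph) * MPH_TO_MPS / seconds

def distance_covered(v_start_mph, v_end_mph, seconds):
    """Distance in metres, assuming constant deceleration (average speed x time)."""
    return (v_start_mph + v_end_mph) / 2 * MPH_TO_MPS * seconds

def stopping_distance(v_start_mph, decel_g):
    """Distance in metres to reach zero speed at a constant deceleration: v^2 / (2a)."""
    v = v_start_mph * MPH_TO_MPS
    return v * v / (2 * decel_g * G)

decel = mean_deceleration(25, 22, 4)
dist = distance_covered(25, 22, 4)
stop_dist = stopping_distance(25, ASSUMED_FIRM_BRAKE_G)

print(f"observed deceleration: {decel:.2f} m/s^2 ({decel / G:.3f} g)")
print(f"distance covered in 4 s: {dist:.1f} m")
print(f"firm stop from 25 MPH at {ASSUMED_FIRM_BRAKE_G} g: {stop_dist:.1f} m")
```

Under these assumptions the car shed speed at roughly 0.34 m/s² (about 0.03 g), which is coasting territory, and covered about 42 m between the two frames. A firm stop from 25 MPH would have needed around 9 m. The timestamps are whole seconds, so treat all of this as an order-of-magnitude check.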

The system’s primary sensor was physically blocked by the obstacle it was driving into, and the system interpreted that as… nothing to see here.

Nada.

No emergency stop, no uncertainty flag, no alarm, no handoff to the driver. A complete loss of visual input was registered as normal driving conditions.

That’s not an edge case in object detection.

Tesla fails at the most basic perceptual logic: if your camera suddenly goes from road scene to solid red, something is wrong. Even without classifying what it is (barrier, wall, vehicle, tarp), the total loss of scene coherence should trigger an emergency response. Tesla’s safety design has no concept of “I can’t see.”
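
For illustration only, the "I can’t see" check described above can be sketched in a few lines of Python. This is not Tesla's code and says nothing about how FSD is actually built. The function names, the `SPREAD_FLOOR` threshold, and the tiny synthetic frames are all hypothetical, chosen to show that a frame with almost no variation can be flagged without classifying anything in it.

```python
from statistics import pstdev

# ASSUMPTION: a frame is a list of rows, each row a list of (r, g, b) tuples, 0-255.
SPREAD_FLOOR = 8.0  # ASSUMPTION: hypothetical threshold; a real system would tune this

def channel_spread(frame):
    """Largest per-channel standard deviation across all pixels in the frame."""
    pixels = [pixel for row in frame for pixel in row]
    # zip(*pixels) regroups the pixels into three tuples: all reds, all greens, all blues.
    return max(pstdev(channel) for channel in zip(*pixels))

def is_blind(frame, floor=SPREAD_FLOOR):
    """True when the frame is so uniform that no scene structure is visible."""
    return channel_spread(frame) < floor

def plan(frame, speed_mph):
    """Fail safe: a blind camera on a moving car means brake and alert the driver."""
    if is_blind(frame) and speed_mph > 0:
        return "EMERGENCY_BRAKE_AND_ALERT_DRIVER"
    return "CONTINUE"

# Synthetic 16x16 test frames: a varied "road scene" and a solid red frame.
road_scene = [[(x * 16, y * 16, 128) for x in range(16)] for y in range(16)]
solid_red = [[(200, 20, 20)] * 16 for _ in range(16)]

print(plan(road_scene, 25))  # CONTINUE
print(plan(solid_red, 22))   # EMERGENCY_BRAKE_AND_ALERT_DRIVER
```

The point of the sketch is the design choice: the check asks "is there a scene at all?" before any question about what is in the scene, so it does not depend on recognizing a barrier, wall, vehicle, or tarp.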

And in the frames before the crash into the barrier, the visual signals are stacked: flashing lights, lowered gates, white X railroad crossing sign, painted road markings. Every redundant safety indicator that exists at a railroad crossing was active.

FSD missed all of them and then missed the barrier itself as it filled the frame.

NHTSA’s investigation of Tesla has specifically covered this problem, and the data deadline fell on the same day this went viral. Tesla faced 8,313 records to review at 300 per day and couldn’t handle it. That’s 28 days of review for a deadline that had already been extended twice.
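
The 28-day figure is simple division, shown here as a quick Python check. It assumes a constant rate of 300 records per day, and it does not settle whether those are calendar days or working days, which the numbers above don't specify.

```python
import math

records = 8313   # records to review, from the figures above
per_day = 300    # stated review rate

full_days, leftover = divmod(records, per_day)
days_needed = math.ceil(records / per_day)

print(f"{full_days} full days plus {leftover} records left over")  # 27 full days plus 213 records left over
print(f"{days_needed} days to finish")                             # 28 days to finish
```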

Meanwhile, Tesla FSD keeps crashing and people still try to act surprised.