On March 24, German federal prosecutors announced the arrest of two people for spying on behalf of Russian intelligence. The target was a person in Germany who supplies drones and components to Ukraine. One suspect filmed the target’s workplace. The other visited the target’s home and photographed it with a phone.

The Generalbundesanwalt’s own language is worth noting. The surveillance served “the preparation of further intelligence operations against the target person.” In plain language: they were building a kill file.

This is not an isolated case. It is the latest entry in an escalation pattern that has been tightening across Europe for two years.

The Ladder

| Date | Country | Incident |
|---|---|---|
| Apr 2024 | Germany | Two German-Russian nationals arrested in Bayreuth for photographing military installations and railway tracks, including the US training base at Grafenwöhr. Planning arson and explosions to disrupt arms logistics to Ukraine. |
| Jul 2024 | Germany | US and German intelligence foil Russian plot to assassinate Armin Papperger, CEO of Rheinmetall, Europe’s largest ammunition producer. |
| Jul 2024 | UK / Germany | Incendiary devices disguised inside vibrating cushions and cosmetics tubes shipped via DHL through Leipzig. One detonates at a DHL hub in Birmingham. Another catches fire in Leipzig before being loaded onto a cargo flight. |
| Sep 2025 | UK | Three arrested for running sabotage and espionage operations for Russia. Follows convictions of a Wagner-directed arson cell and a Bulgarian spy ring that surveilled a US military base. |
| Oct 2025 | Poland / Romania | Poland arrests eight for espionage and sabotage, bringing total detentions to 55 over three years. Romania intercepts two Ukrainian citizens placing explosive packages at a delivery company under Russian intelligence coordination. |
| Nov 2025 | France | Four detained for spying for Russia and promoting wartime propaganda. |
| Jan 2026 | Germany | Ilona W. arrested in Berlin. GRU agent posing as Ukrainian community advocate, sat rows behind Zelenskyy and Merz at political events. Gathered intelligence on drone test sites, arms deliveries, defense industry personnel. Her GRU handler, operating as deputy military attaché, expelled within 72 hours. |
| Mar 2026 | Germany / Spain | Ukrainian and Romanian nationals arrested for surveilling a single drone supplier. Structured handoff when first agent relocated. Filming workplace and home address. Prosecutors cite preparation for “further operations.” |

The Pattern

Sweden’s defense research agency FOI published a study in January 2026 analyzing 70 individuals convicted of espionage across 20 European countries between 2008 and 2024. The taxonomy it produced reads like a field guide to what German prosecutors keep uncovering: the Observer, the Disposable, the Mobile Spy who exploits Schengen to operate across jurisdictions, the Connected Agent recruited through diaspora networks. The categories overlap. An observer may also be mobile. A disposable may be embedded in a criminal network.

The operational signature is consistent. Russia recruits non-Russian nationals for deniability. It uses Telegram for tasking and cryptocurrency for payment. It treats agents as expendable. When one relocates, another steps in. The March 24 case is textbook: a Ukrainian and a Romanian, a structured handoff, a target in the drone supply chain.

S&P Global’s November 2025 analysis warned that the apparent decline in sabotage incidents during 2025 likely reflected strategic recalibration rather than de-escalation, and it expected activity to increase in 2026. NATO described the threat level as “record high.”

The Timing

The March 24 arrests came 24 days into Operation Epic Fury. That matters.

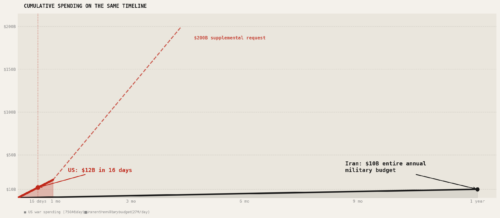

Russia is fighting a hybrid war on two fronts simultaneously. In Europe, it continues targeting the logistics chain that supplies Ukraine. In the Middle East, it is providing Iran with intelligence on US military positions, including the locations of American warships and aircraft. Zelenskyy stated on March 24 that Ukrainian intelligence has “irrefutable evidence” of Russian intelligence sharing with Tehran. The EU’s foreign affairs chief Kaja Kallas said the same thing publicly. CNN and the Washington Post reported it independently, citing US officials.

The US intelligence community’s own 2026 Annual Threat Assessment, released March 18, mentions Russia 99 times, down from 152 in the 2025 edition. The document still explicitly warns about both inadvertent and deliberate escalation with NATO, but the analytical attention has thinned.

CEPA analysts framed it clearly: the question is whether Europe can use Washington’s distraction to strengthen its own posture on Ukraine while the Americans aren’t looking. The flip side is that Russia can use the same distraction to intensify operations that European counter-intelligence services are already struggling to contain.

The counter-sabotage response remains largely national. Coordination between governments is limited. Coordination between governments and the private sector is worse. The people being surveilled, the drone suppliers and logistics operators who keep Ukraine in the fight, are mostly on their own.

From Papperger to a Drone Shop

Two years ago, Russia tried to kill the CEO of Europe’s largest arms manufacturer. Now it is filming the home address of someone who ships drone parts. The target selection has moved from the strategic to the granular.

This is not a reduction in ambition. It is an expansion in scope. The supply chain that delivers weapons to Ukraine is long, distributed, and staffed by people who do not have security details.

Russia has evidently decided that every link in that chain is worth mapping. The Generalbundesanwalt just called it preparation for further operations.