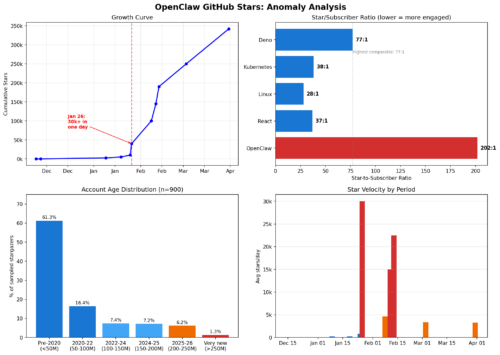

A US engineer named Cortland, claiming he loves Ireland, built an AI voice agent, deployed it to cold-call over 3,000 Irish pubs without consent, and trained it to pass as human. The BBC wrote up the attack as a heartwarming story about those Irish.

Cortland claimed to be avoiding harm while running unsolicited automated surveillance on thousands of small businesses. The BBC never asks who consented. Never asks about data protection. Never asks whether Irish and EU telecom regulations cover AI robocalls to commercial premises. Never asks who owns the recorded interactions. The only friction in the entire story is the Donegal bartender, and the piece treats that as comic relief.

The Irish aren’t unbothered. They were never asked. Their good humor after the fact is being laundered into consent.

The premise is a pretext. Price transparency for a product (a pint of beer) with negligible price variance is not a problem anyone needs solved. Cortland is apparently a former pub owner. He knows this. The “hidden gems” language is marketing copy, not a mission statement.

The method is the actual product: a voice agent that deceives thousands of workers into giving up commercial information, and a measurement of how few of them catch on. That’s a penetration test marketed as a pub guide. The cost of running it only makes sense if the return isn’t cheaper pints but demonstrated capability. He’s selling the voice agent, or selling himself as the guy who built it. The Guinndex is the portfolio piece.

In any other context, 3,000 unsolicited calls from a foreign operator using voice spoofing to extract commercial intelligence from small businesses would be called what it is: a social engineering campaign. Or worse, another “just asking questions” extraction campaign foreshadowing integrity breaches.

Brian Friel’s play “Translations” (1980) shows us how. The play is set in Donegal, where British soldiers arrive in a small Irish-speaking community to gather details about the area. They’re charming. They need basic information. The locals provide it. The result is the erasure of their own language from their own land.

It’s based on the real-world Ordnance Survey of Ireland, 1824 to 1846. The British sent engineers and surveyors across every townland in Ireland. The stated purpose was modernization: better maps, standardized place names, improved administration. The surveyors were friendly. They asked locals to pronounce place names, explain local geography, share knowledge of the land. The Irish cooperated because the questions seemed harmless and the men asking them were personable.

The output was the anglicization of thousands of Irish place names, the tax valuations that followed (Griffith’s Valuation), and the military cartography that made subsequent control of the countryside possible.

Local knowledge, freely given to foreigners, became the infrastructure of Irish dispossession.

The output today is normalization. The BBC frames every failure of detection as comedy at the expense of the Irish. The bartender who offered to buy “Rachel” a pint. The two AIs stuck in a loop saying “Oh, dear.” The interrogation in Donegal played for laughs. Every one of these anecdotes trains the audience to find AI deception of workers endearing rather than alarming. The story’s emotional arc is: isn’t it cute that they couldn’t tell?

The BBC is drawing on a framing with centuries of practice behind it. The charming, credulous Irish who can’t spot the trick is a colonial trope with a long shelf life. Updating it for the AI age doesn’t make it new. The structural match across time is exact at every level: a foreign military or commercial operator, a benign cover story, friendly extraction of local knowledge from cooperative locals, and output that served the extractor’s interests while dispossessing the people it was extracted from.

The EU has already legislated for this exact AI threat scenario, and Cortland’s system appears designed to fail the standard before it even takes effect.

The calls were unsolicited, automated, and designed to extract commercial information while concealing their nature. Irish law (SI 336/2011, Regulation 13) and the ePrivacy Directive (Article 13) require prior consent for automated calling systems used for direct marketing. Both were written to stop machines from selling things to people. Cortland’s system does something the law didn’t anticipate: it impersonates a person to harvest data from them. That’s arguably worse, and the regulatory framework hasn’t caught up. The EU AI Act, Article 50(1), will require AI systems that interact directly with people to be designed so those people are informed they are dealing with an AI. That obligation applies from August 2, 2026.

References:

J.H. Andrews, A Paper Landscape (1975); Stiofán Ó Cadhla, Civilizing Ireland: Ordnance Survey 1824-1842 (2007); G.M. Doherty, The Irish Ordnance Survey: History, Culture and Memory (2006).