A Tesla Cybertruck running Autopilot on the 69 Eastex Freeway in Houston reached a Y-shaped interchange last August and chose the wrong path. Where the road curves right, the vehicle drove straight into a concrete barrier. Driver Justine Saint Amour disengaged the system and grabbed the wheel. She was too late, because Tesla drivers always seem to be too late.

The resulting lawsuit, filed in Harris County district court and reported by the Houston Chronicle, seeks more than $1 million.

It claims a compound design failure: no LiDAR, no effective emergency braking, and a CEO who unsafely overrode his own engineers.

That last allegation is not rhetorical. It is a theory of the case. And it fits a pattern that runs well beyond the Eastex Freeway.

Tesla’s Own Taxonomy

As I’ve presented and written for over a decade, LiDAR in cars reads curves. It uses light to measure three-dimensional space ahead of the vehicle. Tesla’s own engineers recommended it. Every serious competitor — Waymo, Cruise — built their systems around it. Only Tesla’s CEO Musk objected, presumably on cost concerns. He called it “freaking stupid” and chose cheap consumer-grade low-quality camera arrays instead.

And then? NHTSA’s investigation EA22002 analyzed 467 Tesla crashes and found 111 roadway departures where Autosteer was inadvertently disengaged by the driver. Almost all occurred within five seconds. The agency also found that Autopilot resisted manual steering inputs: the system is set up as an unaccountable death-trap, actively discouraging corrections that it simultaneously requires.

Tesla’s engineers built an internal taxonomy for their CEO’s design flaws. They run a crash database query program allegedly called Cabana. Mode confusion is one of their own category labels: the driver believes the car is steering when it isn’t.

When Tesla denied in formal litigation that more than 200 such crashes existed, their own lawyer corrected the number in open court. Tesla then filed Recall 23V838 covering two million vehicles for exactly this failure — while explicitly stating in the filing they did not agree the defect existed. A quiet software patch. No redesign. NHTSA found crashes continued and opened an inquiry into whether the recall even worked or was an attempt to cheat safety regulations. Tesla is now fighting in federal court to keep expert testimony about the recall and the pattern away from juries.

Back to Saint Amour’s dashcam footage. Provided to the Chronicle by her lawyers, it shows the death sequence clearly: ramp, fork, divider managed, turn begun, then blind and straight into the sidewall.

Hood open. Body panels separating. She was diagnosed with two herniated discs in her lower back, one in her neck, sprained wrist tendons, and neuropathy in her right hand. The next object past the barrier was the freeway below. She is lucky to be alive, given how many people Tesla has killed so far.

The Man

The Saint Amour complaint alleges negligent hiring and retention of Elon Musk as CEO, asserting that his participation in product design decisions contributed to unsafe outcomes. Tesla’s own engineers recommended the sensor that improves safety. He overruled them. That is the obvious paper trail the lawsuit is walking.

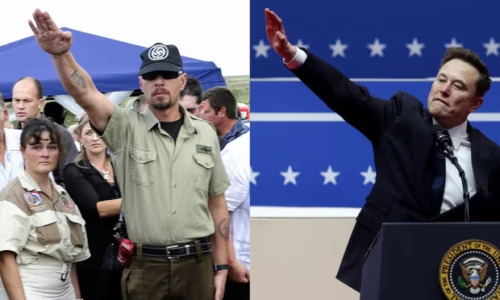

To really understand this straight-line, no-curves case, it helps to see that the rigidity and commitment originate long before the current timeframe.

Musk grew up in Pretoria under apartheid. His maternal grandfather, Joshua Haldeman, was, according to Errol Musk’s own account, “fanatical” in support of apartheid and supportive of Nazism. Haldeman had fled Canada after being arrested as an enemy of the state, to help build apartheid South Africa instead. Errol Musk was elected to the Pretoria City Council in 1972 and ran a construction and engineering business wealthy enough to support two homes, a yacht, a plane, and five cars. He dealt in emeralds from a Zambian mine — confirmed in his own interviews. Elon left at 17, to avoid military conscription, but mostly because USAID had successfully brought down apartheid in 1988 and he couldn’t handle any curve to the political road ahead.

Apartheid was a system opposed to anything but the same, arguing against change while the white nationalist infrastructure murdered anyone who dared to diverge. Its architecture — pass laws, Bantustans, labor controls — was built to deny any need for change that was already visible and documented. The demographic and political trajectory of southern Africa was known. The system refused to see or change anyway. It finally crashed in 1994.

The beneficiaries of that system were not confused about what it was doing. They were experiencing, in the language Tesla’s engineers later coined, mode confusion in reverse: trusting a system that had already disengaged from any viable future.

Dismantling the Steering Infrastructure for Curves

The U.S. Department of Justice’s Civil Rights Division was established in 1957 precisely to institutionalize accountability for straight-line power: to build enforcement mechanisms that would force institutions to negotiate with legal and demographic reality. Since the Trump takeover in January 2025, 70% of its attorneys have departed. Roughly 250 lawyers (the voting rights section, the police accountability section, housing enforcement) are gone. The new leadership has redirected the division toward investigating noncitizen voters and “anti-Christian bias.” The Fair Housing Act no longer appears in its housing guidance. The Voting Rights Act barely appears in its voting guidance.

Across the federal government, the anti-humanitarian DOGE operation, which Musk funded and ran, closed civil rights offices at USAID, the Social Security Administration, the Department of Education, and agencies throughout the executive branch. The latest estimates are that at least 500,000 children have died as a result of DOGE. And then, in terms of this one Tesla tragedy in Texas, the agency that should pull Saint Amour’s telemetry and formally count this crash among ADAS failures, NHTSA, now operates under an administration run by the CEO of the company it regulates.

Waymo launched driverless commercial service in Houston last month using LiDAR-equipped vehicles. It invites a direct comparison with the Saint Amour lawsuit in front of a Harris County jury. The case should be about a system built to read the actual road ahead versus one built by a man with a documented, multigenerational commitment to only looking backwards and rigidly refusing to adapt.

Attorney Bob Hilliard’s statement lands exactly where Tesla’s own documents already sit:

“This company wants drivers to believe and trust their life on a lie: that the vehicle can self-drive and that it can do so safely. It can’t, and it doesn’t.”

Tesla’s engineers called out the failure. It has a name: mode confusion. They thought they were describing a car, but it’s a man in denial of history.