Execute first, validate never.

Put it on a Google T-shirt.

An on-device agent with screen-reading permissions and a browser automation handle was shipped to the Play Store with no announcement, then yanked. The accessibility API is the privilege-escalation surface that malware has abused on Android for a decade. Now it is the foundation for a proactive assistant?

But seriously, when I hear that Google's margins are way up on AI replacing human workers, I see this.

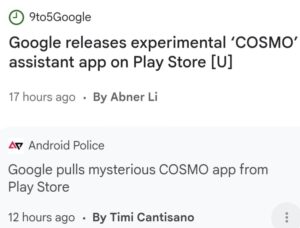

Released.

Pulled.

Five hours.

COSMO uses Android’s AccessibilityService API to read the screen, then triggers proactive “Skills” based on what it sees: Document Writer, Calendar Event Suggester, Browser Agent (Mariner), Deep Research, Recall, Conversation Summary, People/Event Understanding.
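To make the pattern concrete, here is a toy model of "read the screen, then trigger a skill." It is a sketch under loud assumptions: it does not call Android's AccessibilityService, the trigger rules are invented for illustration, and nothing here reflects how COSMO actually decides anything. Only the skill names come from the list above.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Skill:
    name: str                       # skill name taken from the list above
    matches: Callable[[str], bool]  # hypothetical trigger rule, invented for this sketch


# Hypothetical triggers: a clock time on screen suggests a calendar event,
# and a screen with several lines of text suggests a conversation to summarize.
SKILLS = [
    Skill(
        "Calendar Event Suggester",
        lambda text: re.search(r"\b\d{1,2}(:\d{2})?\s?(am|pm)\b", text, re.I) is not None,
    ),
    Skill("Conversation Summary", lambda text: text.count("\n") >= 3),
]


def triggered_skills(screen_text: str) -> List[str]:
    """Return the names of skills whose trigger fires on the full screen text."""
    return [skill.name for skill in SKILLS if skill.matches(screen_text)]


# The whole screen is the input, not just the part the skill cares about.
screen = "Bank PIN: 4821\nLunch with Dana at 12:30 pm"
fired = triggered_skills(screen)
```

Notice what the function signature gives away. The calendar skill only needs the lunch line, but it is handed the PIN too, and nothing in the code stops a different "skill" from matching on that instead.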

Read the screen. Apply intelligence. Report back. Decades ago that’s exactly how I was doing investigations on Windows machines, which fed into prosecutions. Back then the market deceptively called it parental monitoring software. Today it’s your friendly screen-watching research assistant, apparently. Not even a claim of parental authority is needed anymore?

The package name is com.google.research.air.cosmo, listed as “an experimental AI assistant application for Android devices,” shipped from Google Research but pushed through the company’s main Play Store account.

Best take on this is AI coded a thing that is very bad, and then AI prematurely released it.

You don’t want to know the worst take.

What COSMO actually demonstrates is that there is no technical distinction between malware and assistant. Call it a double agent. The only distinction left is, by design, controlled by the one who benefits from blurring it. We trust Google not to abuse user data. That is the entire security model. There is no technical control behind the trust. There should be no trust.

A little lawyer bird tells me the app is already breaking the law.