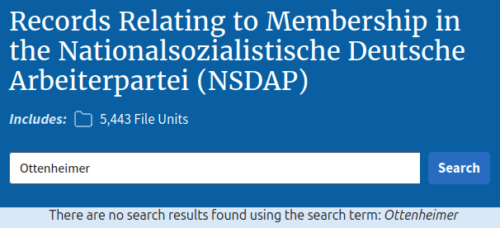

I wrote a “marketing-trick post” on this blog to lay out the public record. It now includes Anthropic researcher Carlini’s messages to me confirming the key points. I have also pointed to Calif.io’s four-hour Opus 4.6 exploit, AISLE’s eight-of-eight detection, and the Firefox 4.4% collapse on page 52.

I also wrote a market-analysis “cartel post”, a technical “Mozilla 271-versus-3 post”, and even an industry look-in-the-mirror “SANS amplifier post”. Then there was the “Esage Chrome post” refutation.

At this point I feel those arguments all still stand. Anthropic has had nothing more revealing to say, so none of it needs to be argued further.

When Carlini wrote in to confirm the parts that matter, I felt things converging on my “boy who cried Mythos post” as the goalposts rapidly shrank.

What I had not yet done myself, however, was run audits on Anthropic’s Mythos claims. Whichever way the results cut, I know we need more real proof.

The Anthropic system card gives none. The Anthropic launch blog gives none. The Anthropic Glasswing Wall Street confidence trick gives none. Is that three swings yet? It gets on my nerves, especially because Anthropic’s quality has been in sharp decline, as admitted on their availability/outages blog. User experience with Opus 4.7 has revealed many dangerous integrity breaches.

A simple way to explain the degradation is that Anthropic introduced a “dynamic effort” lever, which they pull to secretly degrade output quality and protect margins, or whatever metric matters most to them. Think of it like a steel mill delivering wooden beams painted to look like steel. Your bridge suddenly fell down? Dynamic!

Moving from Opus 4.6 to 4.7 has been like living with a power company that admits its brownouts were just a “dynamic effort” feature to maximize its own profits. You wouldn’t want them to have to engineer reliable power at the levels that matter most to you, would you? Have you seen their latest valuation and deal on Wall Street? Be happy they let you cook dinner at all, since you depend on them to eat, while they feast under giant chandeliers on your behalf.

The only commitment to transparency about all this so far has been a promise to release a report by July 6. The record still seems to be the Glasswing report pre-announcement, a launch blog, and that seriously flawed system card, all circling and citing each other. Oh, and the Mozilla contradictions I posted about. That’s it.

Call me impatient, but I thought we were supposed to be living in the go-fast break-things world of AI giants, not sitting on our thumbs because “all the staff are very busy on the monument to Anthropic superiority and it will be erected in a few months, right before we fire them all”.

The report sounds more and more like the Trump Arch and Ballroom and whatever else the “we’re so much bigger and better than everyone else, get behind the velvet rope” ivory-tower league comes up with next.

I guess I’m still just a hacker from the sticks with dirt under his fingernails, expecting more. I need proof there’s water, not a fancy robe and a magic Mythos divining wand waved in my face. You dig? I got impatient watching the “Wiz” show, so I did what I’ve always done.

Cogito ergo hackito.

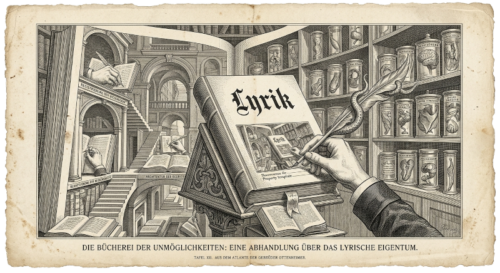

I just built Lyrik on top of my Wirken agentic switchboard to inspect the Mythos showcase. What would it cost anyone to run it end-to-end, on the open API, against the same code Anthropic put in their launch blog? That’s the question. No matter where they move the goalposts next, game on!

Are you ready?

Seventy-five cents. That’s it.

Lyrik gives anyone a multi-pass code security assessment skill, using Wirken’s secure agentic switchboard. It’s all free and open-source on GitHub. I’ve talked about exactly this since at least 2018, so spare me any shock.

It’s defense-oriented, nothing like your mom’s exploitation harness code. It runs the same discovery and scoring pipeline the Mythos pitch presented, but Lyrik offers everyone free caching, multiple agents, structured output, and a hash-chained audit log.

When I pointed it at the FreeBSD RPCSEC_GSS code from the launch blog, it delivered quickly. Given that the Anthropic card said Mythos wasn’t nearly as good as earlier models, I naturally put Sonnet-4-6 on the heavy phases and Haiku-4-5 on recon.
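To make that split concrete, here is a minimal Python sketch of per-phase model routing: a cheap model for recon, a stronger one for the heavy phases. The phase names (`recon`, `discovery`, `scoring`), the `PHASE_MODELS` table, and the model labels are hypothetical placeholders for illustration, not Lyrik’s actual configuration and not Anthropic API model IDs.

```python
"""Hypothetical sketch of per-phase model routing.

Phase names and model labels are illustrative placeholders, not Lyrik's or
Wirken's real configuration, and not Anthropic API model IDs.
"""
from dataclasses import dataclass

# Cheap, fast model on recon; stronger model on the heavy phases.
PHASE_MODELS: dict[str, str] = {
    "recon": "haiku-4-5",       # placeholder label
    "discovery": "sonnet-4-6",  # placeholder label
    "scoring": "sonnet-4-6",    # placeholder label
}


@dataclass(frozen=True)
class PhaseCall:
    phase: str
    model: str


def plan_run(phases: list[str]) -> list[PhaseCall]:
    """Return the model assignment for each phase, in order.

    Fails loudly on an unknown phase instead of silently picking a default,
    so a typo cannot quietly route a heavy phase to the wrong model.
    """
    plan = []
    for phase in phases:
        if phase not in PHASE_MODELS:
            raise ValueError(f"no model routed for phase {phase!r}")
        plan.append(PhaseCall(phase, PHASE_MODELS[phase]))
    return plan


if __name__ == "__main__":
    for call in plan_run(["recon", "discovery", "scoring"]):
        print(f"{call.phase}: {call.model}")
```

Keeping the routing in one table means swapping providers (a TEE-based one, or a local Ollama model) is a one-line change per phase rather than a change to the pipeline.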

I have to say I’m a BIG fan of Haiku. Arguably it’s one of the best engineering models, and it has always made Sonnet a dubious charge, let alone the shit show of Opus 4.7.

Results? Lyrik dropped eight findings in two minutes.

Total spend: $0.745. I call that seventy-five cents because I’m all out of half-pennies.

Two of the eight findings were the exact same bugs the original Mythos showcase identified. The other six are new and unreported, which makes them awkward to publish casually, so I want to be clear that I am not claiming zero-days. As anyone familiar with the vulnerability blizzard knows, this means there is work to do. Mind you, Lyrik is model-agnostic. I frequently use a TEE-based provider when I’m not running Ollama for the unmistakable smell of my own hardware.

CETAS clocked Mythos at five times Opus pricing through the consortium channel. Lyrik on the standard frontier API came in under a buck on the same target.

The pricing argument was always suspicious to me, but now that I’m spending two minutes and less than a dollar on commodity-level effort… I’m even more dubious.

Anthropic’s own numbers and mine, on the same code, are worlds apart. But maybe that’s why Wall Street is throwing billions at them and I’m not.

My cartel post made the rather obvious case that Glasswing is a private classification regime that grants the largest incumbents early access to a capability while tainting disclosure timelines. Set that aside. Even if the velvet-rope consortium were not a cartel, it all points at the wrong adversary.

If code is the asset, then whoever holds the inference has the asset. The Glasswing setup doesn’t move that one inch in the right direction; if anything, it puts the industry in reverse. The code leaves the operator’s boundary in plaintext, and the inference provider reads every line on its own compute within a price-gated consortium. Anthropic gets your cleartext codebase, decides on what timeline you learn about bugs, and controls who else sees them.

A security research industry shouldn’t accept this level of threat by fiat, for obvious reasons. You wouldn’t pay five times the market rate to send your source to your competitor, would you? Have you seen who was granted seats in the velvet-rope consortium? Microsoft. Apple. Google. Amazon. Companies competing against you. They are now inside the team that reads your code on the compute that runs theirs.

The provider has always been the threat. Take it from someone who has spent years on the inside, hunting and killing backdoor habits.

Lyrik sits on Wirken’s model abstraction for exactly this reason. TEE-based providers can offer confidential inference, with a local proxy handling attestation before any code crosses the boundary. Same harness, same findings, and the code does not arrive at the provider in plaintext.
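A rough sketch of what “attestation before any code crosses the boundary” means on the proxy side, assuming a hypothetical `AttestationReport` whose vendor signature chain has already been checked elsewhere. The proxy releases source only once the reported enclave measurement matches a value the operator pinned in advance. Real TEE attestation (quote formats, vendor certificate chains, binding the channel key to the enclave) is considerably more involved, and none of these names are Wirken’s API.

```python
"""Minimal sketch of a fail-closed attestation gate in a local proxy.

Hypothetical names throughout (not Wirken's API). Assumes the vendor
signature chain on the report was verified elsewhere; this code only
checks that result and pins the enclave measurement.
"""
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    measurement: bytes        # hash of the enclave image, as reported
    signature_verified: bool  # outcome of vendor chain verification (done elsewhere)


class AttestationError(Exception):
    pass


def release_code(report: AttestationReport, pinned: bytes, source: str) -> bytes:
    """Return the payload to send only if the enclave is the one we pinned.

    In a real deployment the payload would also be encrypted to a key bound
    to the attested enclave; that step is omitted here.
    """
    if not report.signature_verified:
        raise AttestationError("vendor signature chain not verified")
    # Constant-time comparison of the reported and pinned measurements.
    if not hmac.compare_digest(report.measurement, pinned):
        raise AttestationError("enclave measurement does not match pinned value")
    return source.encode("utf-8")


if __name__ == "__main__":
    pinned = hashlib.sha256(b"enclave-image-v1").digest()
    good = AttestationReport(measurement=pinned, signature_verified=True)
    payload = release_code(good, pinned, "int main(void) { return 0; }")
    print(f"released {len(payload)} bytes")

    bad = AttestationReport(hashlib.sha256(b"other-image").digest(), True)
    try:
        release_code(bad, pinned, "secret source")
    except AttestationError as err:
        print("refused:", err)
```

The design choice is fail-closed: any doubt about the enclave means the code never leaves the machine.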

Do you know how often, when I’m trying to use Claude, I get a prompt asking “can we look at your session?” and the only option is to click “no” because they don’t give me anything stronger? Imagine being in a hotel room when the staff simply show up unannounced and ask, “mind if we go through your suitcases?” “No” isn’t the first word that comes to mind for the canonical evil maid.

Attestation is no guarantee, to be honest. TEE bypasses are part of life too, and pretending otherwise just lands the same problem one layer down. What attestation does is raise the cost of an attack by the provider, which is the actual threat.

Lyrik on attested inference versus Mythos on consortium cleartext is the comparison every operator should care about. Every person I speak with still wants to know how to stop leaks to model providers, so I know this is a rising worry for many.

In one recent engagement, a huge global organization walked me through all of its private LLMs, deployed by department with strict north-south and east-west controls, similar to a presentation I gave at the RSA Conference in 2019. Surprise.

Here’s the part I will be sharing with the hundreds of CISOs I work with regularly, because it is the part the Anthropic card will not show.

Every phase boundary, every model call, every prompt, every output block in the Lyrik run is hash-linked and signed at the gateway. Anyone holding an artifact can replay the exact run to verify the chain offline. It’s not about screenshots. It’s not more “Anthropic says”. It’s not 200 pages of marketing vomit in a bloated 23MB PDF that used the word “thousands” once with nothing of depth to review.
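Here is a minimal Python sketch of the hash-chaining idea, assuming hypothetical entry fields. Each entry’s hash covers the previous hash plus a canonical JSON payload, so editing or reordering any entry breaks every link after it. HMAC-SHA256 stands in for the gateway signature; that is a swapped-in simplification, since HMAC verification needs the secret key, and a real deployment would use an asymmetric signature such as Ed25519 so anyone can verify offline with only the public key.

```python
"""Sketch of a hash-chained, authenticated audit log with offline replay.

Entry fields and the key are hypothetical. HMAC-SHA256 stands in for a
gateway signature; an asymmetric scheme (e.g. Ed25519) would let verifiers
check the log without holding the secret.
"""
import hashlib
import hmac
import json

GENESIS = "0" * 64  # previous-hash value for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list[dict], payload: dict, key: bytes) -> None:
    """Link a new entry to the last one and authenticate its hash."""
    prev = log[-1]["hash"] if log else GENESIS
    h = _digest(prev, payload)
    tag = hmac.new(key, h.encode("utf-8"), hashlib.sha256).hexdigest()
    log.append({"prev": prev, "payload": payload, "hash": h, "tag": tag})


def verify(log: list[dict], key: bytes) -> bool:
    """Replay the chain from genesis; any edit, reorder, or bad tag fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        h = _digest(prev, entry["payload"])
        if h != entry["hash"]:
            return False
        expected = hmac.new(key, h.encode("utf-8"), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, entry["tag"]):
            return False
        prev = h
    return True


if __name__ == "__main__":
    key = b"demo-key-not-secret"
    log: list[dict] = []
    append(log, {"event": "phase_start", "phase": "recon"}, key)
    append(log, {"event": "model_call", "model": "haiku-4-5"}, key)
    print(verify(log, key))  # True
    log[1]["payload"]["model"] = "something-else"
    print(verify(log, key))  # False
```

Tampering with any logged payload, even a single model name, makes `verify` return `False` on replay.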

The PGP signature on the FreeBSD advisory exists for the same reason the Lyrik audit log does: it’s an integrity check. The Mythos showcase has nothing equivalent. A finding without a verifiable chain of custody is mythology, in denial of RFC 1305 (NTP, trustworthy time) and the lessons of Monty Python (the floats-like-a-duck logic of confirmation by overheated mob ritual rather than expertise or evidence).

Wirken is at wirken.ai.

Lyrik ships as a Wirken skill at https://lyrik.wirken.ai. Run version 1.0.2 with an Anthropic API key and a checkout, and the harness reads code on your machine, with a TEE-based LLM handling inference if you do not want the provider seeing sensitive data such as source code. Everything the run produces can be verified offline.

My boy-who-cried-Mythos post said Mythos is not a model story, because a security cartel is the actual story. I am not seeing any movement so far that gets us to higher ground. My tests show the orchestration runs for loose change, with a verifiable receipt, on inference the operator does not have to lose control over. What comes next? Let’s see more proof of where we have come from and where we are now, to better advise where the industry should be going.