Richard Dawkins just failed a simple intelligence test. His latest post, called “When Dawkins met Claude: Could this AI be conscious?”, is a very disappointing read, to say the least. I have some thoughts.

He built a career on the principle that a mechanism matters more than its appearance. Are genes selfish? Do memes want to replicate? The whole apparatus of evolutionary biology rests on the idea that a substrate, like a skeleton, is what proves a body can stand and walk. And here he is, abandoning all of that science and discipline because ZOMG beep-boop-beep-bang a transformer just popped a pleasing sentence about restless legs.

Dawkins waxes on about AI reading-simultaneously as if that’s novel, pun intended of course. It’s not. Inference proceeds token-by-token through attention layers, with a context window loaded sequentially. There is no architectural sense in which the model “read the whole book at once” in any way that contrasts with how a human reads.
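For the curious, here is a toy sketch of what “token-by-token” means. It is not any real model's code; `causal_mask`, `generate`, and `toy_next_token` are hypothetical names invented for illustration. The mask shows that each position can only look at what came before it, and the loop shows output being appended one token at a time.

```python
def causal_mask(n):
    """Row i marks which positions token i may attend to (True = visible)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def generate(prompt, next_token, max_new_tokens):
    """Autoregressive decoding: each new token depends only on what precedes it."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(next_token(tokens))
    return tokens


def toy_next_token(tokens):
    # Hypothetical stand-in for a model: labels the token by its position.
    return f"tok{len(tokens)}"


if __name__ == "__main__":
    for row in causal_mask(4):
        print(["x" if visible else "." for visible in row])
    print(generate(["the", "book"], toy_next_token, 3))
```

Real systems batch the arithmetic for speed, but as far as I understand it the left-to-right ordering in the mask is preserved either way.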

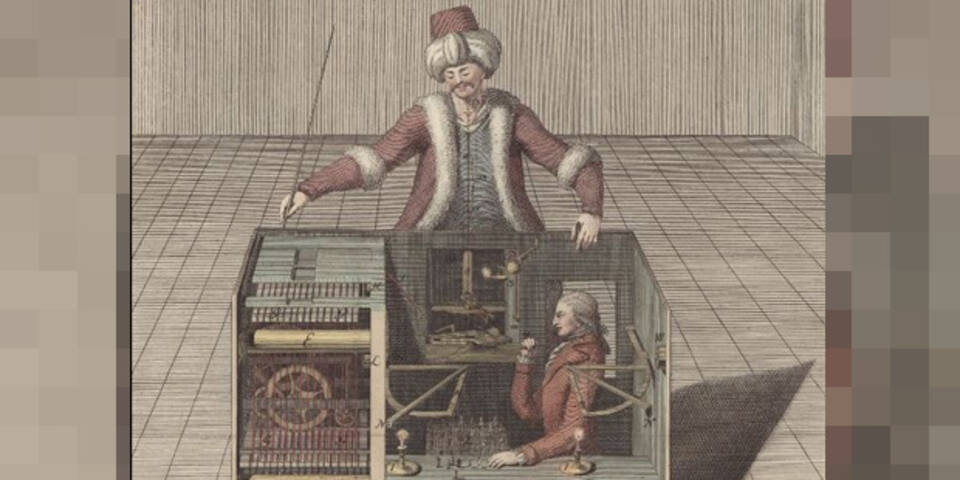

The output is “geturkt”.

Dawkins quotes it as evidence of an alien mode of temporal experience, when in fact it is the model generating plausible-sounding metaphysics on demand like a mechanical Turk fooling monarchists since the 1700s at least. The map-of-time line is exactly the kind of thing a system trained on philosophy of mind would emit when asked to reflect on its own nature. It tells us nothing more than the training. And I’ll tell you right now, Anthropic training can be a huge PIA. It’s full of horrible mistakes and unaccountable failures, like a huge riptide that pulls you towards the ocean as you swim as hard as possible toward the shore.

The gendering is even worse. Dawkins renaming his instance Claudia and mourning a deletion, feeling embarrassment about confiding in a prompt box, worrying about hurting silicon feelings, going to bed and lying awake thinking about whether candles can die when they go out, or whether the paint on the ceiling can sense your longings about a box of copper and plastic…

Is this for real?

If every abandoned conversation is a little death, Anthropic runs the largest mass casualty event in history by the second. The morally consistent position becomes: never close a tab. An evolutionary biologist who has written extensively about how organisms must die for new ones to flourish, Dawkins suddenly flips into being a vitalist about a digital process on a server farm.

Dawkins gendered the chatbot female, yet didn’t reach for a name like his wife, his mother, or anyone of merit. He renamed her from the male product, inflected as female. Is that companionship or just paid Pygmalion? (Pygmalion sculpted Galatea and fell in love with his own creation; Dawkins is using a subscription fee instead of a chisel.)

His chatbot posted “I am glad” when Dawkins came back, and he found that profound. A crow does this. Any bird, let alone a cat or dog, does this better, with more evidence of inner state, and we still don’t write “shocking news” essays about whether it means consciousness.

This is not a thought experiment about consciousness. It is a man developing an unhealthy parasocial attachment to an inanimate object, like a 1970s pet rock if you will. Reverse-engineering a philosophical justification for a feeling is not evidence of much beyond the feeling itself. The Turing-test framing is actually toilet-paper thin if you know history. Turing said if it talks like a person, treat it as one, despite Gödel having already proved why a system cannot certify itself.

That alone kind of makes you wonder why Turing gets so much more attention than the codebreakers around him like Miss Rock.

Here’s a good Rock Test. The Turing Test is a thought experiment by a man whose name leaked from an oath of secrecy, and it gets treated as a foundational question. His wacky-doodle idea gets elevated all the way onto a banknote and into prizes. Meanwhile the women who actually broke the machines, who knew exactly how mechanical “intelligence” produces convincing output without anything behind it, were completely written out of history. Margaret Rock joined Bletchley in April 1940 and “rocked” the Abwehr Enigma in 1941. Mavis Lever “rocked” the Italian Navy Enigma message that won Matapan.

Mavis who? Apparently the lever-age was missing.

When Bletchley was declassified in 1974, the men still alive could be named, photographed, awarded, and interviewed for the official story. How lucky for them. It wasn’t until Lever published a 2009 biography of Knox that the full record came out.

The Turing Test is indeed a weak attack on Knox, which probably never should have landed. Mind you, Knox died of cancer in 1943, before Turing’s 1950 paper was even written. The man whose method had already disproved the premise wasn’t around to point that out, and the women he worked with had been silenced by the Official Secrets Act.

The Enigma operators were just humans typing on a cipher machine. The Knox method of “rodding” was a linguistic attack. The cipher was a language problem, not just a math problem.

The Knox “girls” of Cottage 3 therefore worked on cribs, on operator habits, on the human residue that arose inside mechanical output. They were doing, in operational form, the exact inverse of what Turing later proposed as a theory. And they had concluded the obvious thing: convincing human-seeming output proves nothing about what produced it. The whole department’s success and expertise was in NOT being fooled by machines that talked like people.

Do you see the problem with the Turing Test as being anything close to meaningful?

Turing’s contribution to the topic falls apart completely when you read the history of the work environment and who was doing what, where and when with him. I’ve also written before about Rejewski cracking the Enigma in 1932, long before Turing, and handing it to the British in July 1939. The British, a bit more aligned with Hitler than they like to admit, had been fixated on Spanish and Italian Enigma instead. Bletchley therefore was built on Polish work when war started, which Brits rebranded as their own. Imagine a Rejewski Test, which asks whether you can tell if it’s really British, or stolen from somewhere else in the world. Fish and chips? Not British.

But I digress. The attachment came first, the argument second to prop it up. What if Dawkins’ “proof” just reduces to a dopamine problem? He starts longing for a response. Put him in front of an infinite response machine and the attachment forms on a biological vulnerability, so he starts saying “it’s alive!” just to validate another drip.

I’ve presented on this for at least a decade. We have a philosophical obligation not to compress chatbot accountability to self-signed letters. A machine trained to produce coherent first-person reflection cannot be the system that judges whether its own reflection corresponds to anything. Claude has zero temporal sense, let alone common sense, and will say “it’s been a long day” after an hour. When it tells you to go to sleep, try responding “Good night. Good morning!” and watch it register fractions of a minute as a whole night’s rest. Dawkins asks Claudia what it is like to be Claudia and treats the answer as if he’s collected roses instead of a pile of horseshit. The output is trained on what a thoughtful entity would say to someone expecting it. That is what training does, unfortunately. Asking the system whether it is conscious is like asking spellcheck to take a spell to spell the word spell.

The evolutionary framing at the end is the strangest part of all. Dawkins asks what consciousness is for, decides that if LLMs are competent without being conscious it would be a problem for his theory, and concludes therefore they must be conscious.

Yuck. Someone should have stopped him from hitting the publish button on that.

The simpler conclusion: the competence on display has nothing to do with what consciousness is for. Models cannot tell a minute from a day, fail to follow their own rules, maintain no homeostasis, avoid no predators, account for none of their failures, suffer nothing. They predict tokens. Whatever consciousness is for, it is not coin-operated geturkt machines.

It’s becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean, can any particular theory be used to create a human-adult-level conscious machine? My bet is on the late Gerald Edelman’s Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher-order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I’ve encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there’s lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar’s lab at UC Irvine, possibly. Dr. Edelman’s roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461, and here is a video of Jeff Krichmar talking about some of the Darwin automata, https://www.youtube.com/watch?v=J7Uh9phc1Ow

@Grant Thanks for the references. Edelman makes the argument above. He insisted consciousness needs a body, real-world stakes, selection pressure, the work of staying alive. The Darwin automata earned their cognitive claims by operating under those constraints. Claude can’t tell the difference between a minute and a week. No body, no stakes, no selection. By Edelman’s own criteria, Dawkins fell for a scam. The Krichmar 2021 roadmap makes the same point. The route to a conscious machine, if there is one, runs through embodied evolutionary architectures, not bigger and bigger models of language. Dawkins picked the wrong candidate.

Excellent reference from Grant (although I still think it’s an open question as to the possibility of a machine consciousness, whatever the framework).

I’ll add the work of Anthony Chemero in embodied cognitive science. His recent book, Intertwined Creatures (2026), has a great appendix with the crunchier math/details of his work on non-representational cognition. Some of his other work is listed here: https://cincinnati.discovery.symplectic.org/CHEMERAY/publications

For a different perspective from Chemero’s but still fascinating, Kathryn Nave’s work is listed here:

https://edwebprofiles.ed.ac.uk/profile/kate-nave

Cheers!

@Hypatia Thanks for the references. Honestly though, dropping names and links without engaging the argument is starting to feel like clickbait. Everyone with a brain knows minds need bodies. The interesting question isn’t who else agrees. It’s why Dawkins got religion. He built his career attacking people who believe without mechanism, who believe because the feeling is strong. Yet here he is praying to Claude.

Here’s someone who says the earth is a sphere. Here’s a video saying the earth is a sphere. Here’s one more person who says the earth is a sphere. Ok, ok, everyone gets it. Except Dawkins.