Have you heard the story about a drone that crashed into the White House yard?

Wired has done a follow-up story, drawing on a conference held to discuss drone risks.

The conference was open to civilians, but explicitly closed to the press. One attendee described it as an eye-opener.

Laughably, Wired seems to quote just one anonymous attendee, perhaps as payback for being denied access to the event. Who was this sole voice, and why leave them anonymous? What made it an eye-opener?

In my conference talks for the past few years I have explicitly mentioned attacks on auto-auto (self-driving cars) based on our fiddling with drones.

Perhaps we are not getting much attention, despite doing our best to open eyes. Instead of some really scary stuff, the Wired piece looks only at a very limited and tired example.

But the most striking visual aid was on an exhibit table outside the auditorium, where a buffet of low-cost drones had been converted into simulated flying bombs. One quadcopter, strapped to 3 pounds of inert explosive, was a DJI Phantom 2, a newer version of the very drone that would land at the White House the next week.

Surely a flying bomb is not the most striking (pun intended?) visual aid. I would be happy to give any journalist multiple reasons why a kamikaze does not present the most difficult problem to solve.

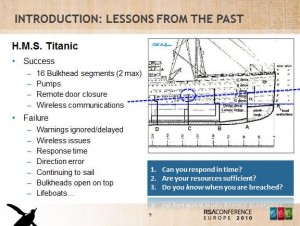

On the scale of things I would want to build defenses against, their most striking example seems already within reach. There are far more interesting ones, which is why I have been giving presentations on the risks and what defenders could do about them (Blackhat, CONFidence).

We have also tweeted about taking over the SkyJack drone by exploiting a flaw in its attack script, essentially a mistake in radio logic. A drone on autopilot using a mapped GPS would be straightforward to defeat, which we have also had some fun discussing, at least in terms of ships (flying in water, get it?). And then there is Lidar jamming…

Anyway, back in April 2014 I had tweeted about DJI drone controls and no-waypoint zones. The drone company was expressing a clear global need to steer clear of airports. As soon as I saw the White House news I thought I should call attention to our 2014 research and this detail, so I replied to some tweets.
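DJI's actual no-fly database and enforcement logic are not described here. What follows is only a minimal sketch, assuming a hypothetical list of circular zones, of how a controller could refuse a waypoint that falls inside a no-fly radius around an airport. The `NoFlyZone` type, the function names, the approximate SFO coordinates and the 8 km radius are all illustrative assumptions, not DJI or FAA figures.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


@dataclass(frozen=True)
class NoFlyZone:
    """Hypothetical circular no-fly zone: a centre point plus a radius."""
    name: str
    lat: float
    lon: float
    radius_m: float


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two lat/lon points (degrees)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def violated_zones(lat: float, lon: float, zones: list[NoFlyZone]) -> list[str]:
    """Names of every zone whose radius contains the given point."""
    return [z.name for z in zones if haversine_m(lat, lon, z.lat, z.lon) <= z.radius_m]


def waypoint_allowed(lat: float, lon: float, zones: list[NoFlyZone]) -> bool:
    """Reject a waypoint if it lies inside any no-fly zone."""
    return not violated_zones(lat, lon, zones)


# Illustrative zone only: approximate airport coordinates, made-up radius.
ZONES = [NoFlyZone("SFO (illustrative)", 37.6213, -122.3790, 8_000.0)]

if __name__ == "__main__":
    print(waypoint_allowed(37.6213, -122.3790, ZONES))  # False: on the airport
    print(waypoint_allowed(38.5816, -121.4944, ZONES))  # True: far away
```

Note that a check like this is only as good as the position fix it is fed; a spoofed GPS reading places the drone wherever the attacker wants, which is why a manufacturer promise about no-fly zones does not settle the question of how to respond when a drone strays anyway.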

Nine retweets!? Either I was having a good day or the White House raises people’s attention level. Maybe we can forget all our past talk about this, because someone just flew a drone into the wrong yard and it’s a whole new ballgame of awareness. While the White House drone incident could cause a backlash against drone manufacturers for their lack of zone controls, it also brings a much-needed inflection point at the highest and broadest levels, one that is long overdue.

Our culture tends to let the market harm the average person, on a “let them figure it out” basis. Once a top dog, a celebrity with everything, is harmed or threatened, then things get real. It is as if we say “if they can’t defend, no one could” and so the regulatory wheels start to spin.

An incident with zero impact that can raise awareness sounds great to me. As I explained to an FCC Commissioner last year, American regulation is driven by celebrity events. This one was pretty close and may get us some good discussion points. That is why I see this incident finally bringing together at least three types of drone enthusiast. Fresh and new people will be stepping into the ring to tinker and offer innovative solutions; old military and scientific establishment folks (albeit with some VIP nonsense and closed-door snafus) will come out of the woodwork to ask for a place in the market; and of course those who have been fiddling away for a while without much notice will take a deep breath, write a blog post and wonder who will read it this time.

Three drone enthusiast profiles

Last year I sauntered into a night-time drone meetup in San Francisco. It was sponsored by a high-powered east-coast pseudo-governance organization. And when I say drone meetup, I am not just talking about the lobbyist drone in fancy clothes who talked about bringing “community” closer to the defense industry “shared-objectives” (“you are getting very sleepy”). I am talking about a room stuffed with people interested in pushing technology boundaries, mostly in the air. I would like to share several observations about that meetup here. Roughly speaking, I found the audience fit into these interest levels:

- Profile 1: The hobbyist. Easily annoyed by thinking about risks, the hobbyist is the typical attendee at technology meetups. Some people look at the clouds above the picnic, some look at the ants. These new technology meetups almost always are filled with cloud watchers who don’t want to worry. Hobbyists would ask “what do you pilot”. I would reply “Sorry, not here to pilot, I study how to remove drones, drop them from the air”. This went over like a lead balloon. You could sense the deflation in mood. When asked “why would anyone want to do that” my response was “Nothing concrete yet. So many possible reasons a drone could be a threat.” Rather than why, I want to know how, and I told people “when the day comes someone needs a drone stopped, I would like to avoid panic about how.” Hobbyists have amazing ideas about drones changing the world for the better; someone needs to ask them “why” and “is that safe” at strategic points in the conversation.

- Profile 2: The professional/pilot. Swapping stories about successes and failures, this group was jaded by reality. A gold mine of lessons not widely shared was available to those willing to ask. A favorite story was from someone who built gas-leak sensor drones “too accurate” to be used. A power company (PG&E) was forced to admit their sensors (mostly manual, staff in vehicles) were dangerously out-dated and wrong. The quality gap this opened was so large that PG&E became angry and tried to kill his drone program. Another great story was about mines laser-mapped by drones. Software stitched together drone photos and maps, using cloud compute clusters, and then enhanced them with environmental details. New business models were being explored because drones could inexpensively create replica worlds; gaming companies and architects were target markets. Want to see how an underground restaurant concept looks at 5:30PM as the sun sets, or with a morning rain-storm? Click, click, and you can walk through virtual reality courtesy of drones. Another story was pure surveillance, although told as “tourism”. Go to a famous monument, pull out your pocket drone, launch it and quickly take a few thousand pictures; now you have a perfect 3D model. Statues, machines, buildings…the drone comes back with data you download to process into a perfect model of anything the drone can “see” on its little vacation. Since this story was told last year, I should also point out that newer drones are faster; they process data in real time as they fly instead of after a download.

- Profile 3: The lobbyist. The lobbyist bridges the reality of risks with the promise of new sales. There is some belief that the military is light-years ahead of hobbyists and professionals in drone-building and flying. Been there, done that: their business model (selling to the government) was solid, and their engineers want to rule the technology-leadership roost into the next business models. However, they also openly admit the military-industrial complex has become so used to handouts that they fear missing the boat on consumer desire. A flood of new talent was scooping up drone kits and toys, which looked like it could dwarf the military-industrial market. Thus they hope synergies and collaborations will license military tech to professionals, who will tell the stories that hobbyists get excited about.

You could smell a three-way collision (at least, maybe more) brewing and bubbling. Yet the three groups stood apart as distinct. Political stakes were increasing: money and ideas were starting to flow, old power was worried about disruption, and seasoned vets were giving guidance on where to go with the technology and its new horizons. It just didn’t yet seem quite the time for collaboration, let alone for getting a security discussion going across all three groups.

Bringing Profiles Together

Going way, way back, I remember as a child when my grandfather handed me a drone he had built (mostly ruined, actually, but let’s just pretend he made it better). Having a grandfather who built drones did not seem all that special. Model trains, airplanes, boats…all that I figured to be the purview of old people fascinated with making big technology smaller so they could play with it. Kind of like the bonsai thing, I guess, where you think it’s something everyone does when in fact very few can keep the damn thing alive.

Fast-forward to today and I realize my grandfather’s peculiar interest in drones might have been a little exceptional. Today, groups everywhere are steadily growing larger and discovering new uses for drones. If I drew a Venn diagram, the circles would still look separate and distinct from each other, because drones simply are not part of everyday life yet, unlike technology such as glasses (the better to see you with). Roombas aside, my theory is that the future looks incredibly bright if people can start thinking together about ethics and politics in the bigger drone picture, including risks.

Speaking of going back in time to understand the future, in 2013 I found my long-time drone interests turning into tweets that were useful in my work at an infrastructure/operations giant. Could this be a model of convergence? I thought Twitter might help bring risk discussions together into after-hours meetups, like talking about the forward-thinking people in Iowa demanding no-drone zones.

Clearly my humor did not win anyone’s attention. Not a single retweet or favorite. Crickets.

It also may just be that Twitter sucks as a platform and I have no followers. That’s probably why I’m back to blogging more again. Does anyone find Tweets conducive to real conversation? The best Twitter seems to do for me is to shift conversation by letting me throw a fact in here or there, as if I sit quietly with my remote Twitter control, every so often dropping stones into the Twitter pond.

When a news story broke in 2013 I had to jump in and say “hey, cool Amazon hobbyist (new) story and I think you could be overlooking a FedEx lobbyist (old) story”.

I was poking around some loopholes too, wondering whether the drones over SF could have a get-out-of-jail card if we wanted to take them down.

Kudos to Sina and Jack for the conversation. My tweets were at least reaching two or three people at this point.

And as anti-drone laws popped up, I would occasionally mention my research in public. Alaska wanted a law to make sure hunters could not use drones for unfair advantage.

Such a rule seemed ironic, considering how guns have made killing a “sport” nearly anyone can “play”. A completely unbalanced and technology-laden air/ground/sea attack strategy on nature was common talk, at least when I was in Alaska. Anyway, someone thought drones were taking an already automated sport of killing too far.

Illinois took the opposite approach to Alaska. Someone saw drones as potential interference with those out for a killing.

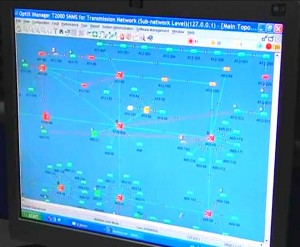

By April of 2014 I had built up a fair amount of detail on no-fly zones and strategies. We ran drones for testing and anti-drone antenna prototypes were being discussed. I gave myself a challenge: get a talk accepted and then publish an anti-drone device, similar to anti-aircraft, for the hobbyist or average home user.

Here’s a good picture of where I was coming from on this idea. One of the top drone manufacturers told me their drones were absolutely not going to stray into no-fly zones. What if they did anyway? Ethics were easy in this space of unauthorized entry. A system to respond seemed most clearly justified and desired.

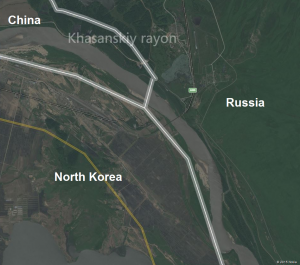

Haha. “No-way points”, get it? No? That’s ok, no one did. Not a single retweet or favorite for that map.

The point wasn’t completely lost on people, however. A little exposure meant I was invited onto a short Loopcast episode called Drone Hacking, which I suppose some people might have heard. The counter says 162,000 plays so far, which seems impossible to me. Maybe some of those numbers are from drones?

Anyway, my big plan to release our research at a conference was knocked down when the Infiltrate popular voting system denied us a spot. We were going to show how we immediately, and I mean immediately, found a way to skyjack the SkyJack drones; it was a talk about general command and control strategy, redirection, ground-to-air, air-to-air and all kinds of fun stuff.

Denied by peers.

I resubmitted the same ideas to CanSecWest.

Denied by review board.

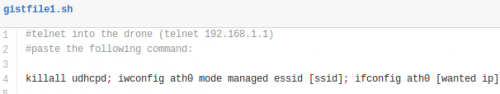

This pretty much shelved my excitement to explain more details, e.g. those obvious bugs in the SkyJack code (not picked up by any news but at least credited by Samy when we reported them to him), and why insecure WiFi and services leave options for self-defense wide open:

It is kind of obvious what’s wrong here; security is most sensational when addressed at a low level using basic stuff. That raises the question of how and when exactly to carry the discussion over from infosec/safety flaws into hobby or even professional forums. I confess I made a mistake. My focus has been more on what to do about larger-picture issues, because I argue the individual sensor flaws go without saying.

Yet I have to face the reality that the “flaws” audience, the people looking at ants, still may be the only place to talk about dropping drones out of the sky. Others will dismiss the topic until a serious, celebrity- or White House-level event occurs. In my mind that is too late…

At last year’s EMCworld a guy on my staff was fully dedicated to drone safety tests (he was achieving real pilot skills by the time we ran public demos), yet no news source covered our safety research in detail. The timing felt early, as if journalists were apprehensive about the story and the groups mentioned above were too separate to generate a nice, broad, general-audience piece.

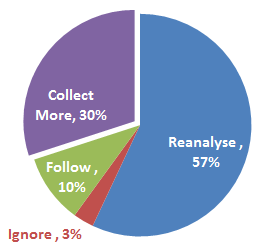

So while the conference was explicitly open to the press, we had the opposite of a major celebrity-level disaster (we were told not to crash a hobby drone into the crowd, despite it raising our chances for attention). Our 30,000-person infrastructure/operations audience seemed to lack interest in any presentation on responses to evil drones. An attempt to cross over just turned into people asking if they could take home the drones as a conference prize. Thus we auctioned our four test units at the end of the show and management patted us on the back for a quiet success. Oops. Maybe we can do better at getting the right kind of attention this year, before real damage is done.