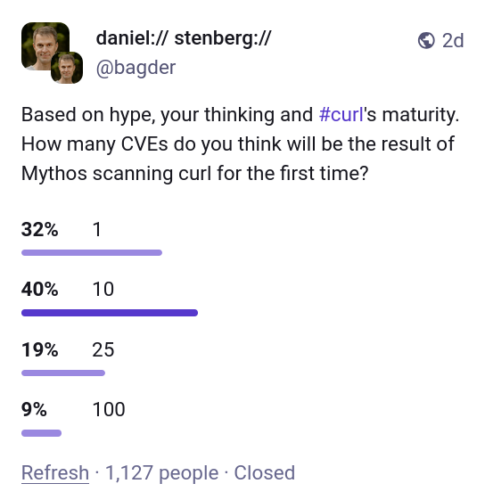

The cURL repo maintainers aren’t mincing words about the failure of Mythos to match the hype. Daniel Stenberg calls it a lot of hot air:

Stenberg wrote that the report “felt like nothing,” and that feeling was further validated by a review of Mythos’ findings.

Nothing. Mythos felt like nothing. The Mythos report itself describes its work as “hand-driven analysis”. Really.

…hand-driven analysis using LLM subagents for parallel file reads, with every candidate finding re-verified by direct source inspection in the main session before being recorded… No automated SAST tooling was used.

I really wonder why the hand driving wasn’t the hand of Stenberg. Why wasn’t Anthropic falling over itself to make sure Stenberg had access to the tool? That’s not right. A real tool wants real hands giving real feedback.

And here’s the real kicker:

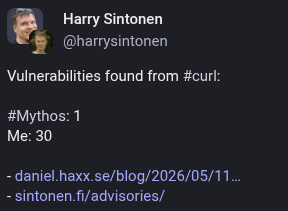

“Once my curl security team fellows and I had poked on this short list for a number of hours and dug into the details, we had trimmed the list down and were left with one confirmed vulnerability,” Stenberg said, bringing us back to the aforementioned number.

As for the other four, three turned out to be false positives that pointed out cURL shortcomings already noted in API documentation, while the team deemed the fourth to be just a simple bug.

BOOM.

Three of the five “confirmed” findings flagged behavior that curl’s own API documentation describes as intended. Mythos mislabeled documented, accepted behavior as novel vulnerabilities for review. That is an even worse failure than just RTFM.

Call this what it is: yet more validation that a human-designed harness is the only real threat, and NOT the very expensive Anthropic model. I’ve said it repeatedly on this blog, and nothing so far has proven Mythos is something we haven’t seen before. Stenberg is at the same place so many other experts have arrived:

I see no evidence that this setup finds issues to any particular higher or more advanced degree than the other tools have done before Mythos.

Amen. And yet? ZOMG the FEAR circulating, even from some security experts. It burns.

What is really wrong with this survey is that the security industry needs to be focusing on post-quantum preparedness instead of AI-vendor hype.

Anthropic has made the world significantly less prepared by blowing hundreds of millions on trying to scare people into a billionaire-run cartel of disinformation.

As lead developer of curl I was offered access to the magic model and I graciously accepted the offer. Sure, I’d like to see what it can find in curl.

I signed the contract for getting access, but then nothing happened. Weeks went past and I was told there was a hiccup somewhere and access was delayed.

Eventually, I was instead offered that someone else, who has access to the model, could run a scan and analysis on curl for me using Mythos and send me a report. To me, the distinction isn’t that important. It’s not that I would have a lot of time to explore lots of different prompts and doing deep dive adventures anyway. Getting the tool to generate a first proper scan and analysis would be great, whoever did it. I happily accepted this offer.

That is some very hard evidence to add to the cartel theory.

Meanwhile, post-quantum? Hello? It’s a real threat. This cartel nonsense is destroying trust in Anthropic. Every day now I get open mouths and saucer eyes when I demonstrate free and commodity tools to CISOs that prove how Mythos’s “velvet rope” access gets them exactly… nothing. Stenberg says as much himself.

Primarily AISLE, Zeropath and OpenAI’s Codex Security have been used to scrutinize the code with AI. These tools and the analyses they have done have triggered somewhere between two and three hundred bugfixes merged in curl through-out the recent 8-10 months or so. A bunch of the findings these AI tools reported were confirmed vulnerabilities and have been published as CVEs. Probably a dozen or more.

Nowadays we also use tools like GitHub’s Copilot and Augment code to review pull requests, and their remarks and complaints help us to land better code and avoid merging new bugs. I mean, we still merge bugs of course but the PR review bots regularly highlight issues that we fix: our merges would be worse without them. The AI reviews are used in addition to the human reviews. They help us, they don’t replace us.

In other words, Mythos showed up and nothing changed. Nothing. He’s calling it at least 8 months late to the CVE party.

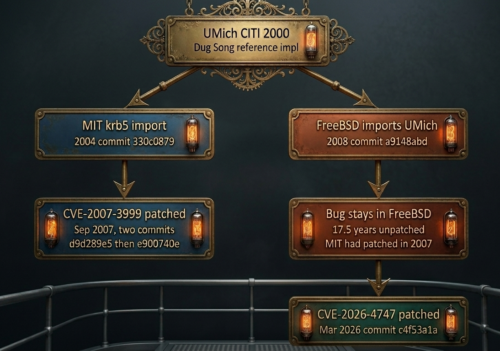

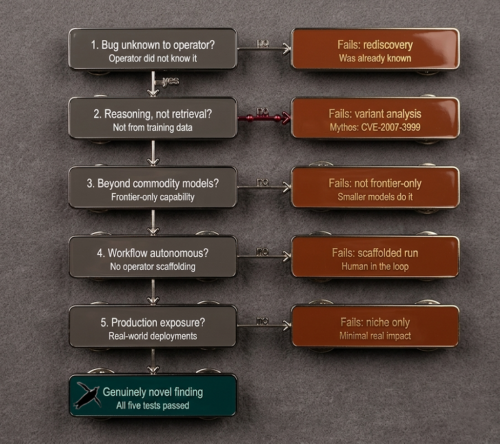

How bad is the Anthropic nothingburger distraction? I usually show the following vulnerability family, for example. The 2007 fix sits inside Mythos’s training corpus. The 2008 sibling fork carried the same flaw unpatched in public code for 17.5 years. Mythos’s “discovery” matched a fix that it knew already.

That is simply a re-scan of old fixed bugs, NOT discovery, by any modern definition. In the cURL example, even retrieval wasn’t done right by Mythos. If you read the Anthropic announcement right, it’s been a lot of hot air from day one.

As Stenberg put it in his blog post:

We have not seen any AI so far report a vulnerability that would somehow be of a novel kind or something totally new.

That is the lead cURL maintainer confirming retrieval over discovery, in his own voice. It is exactly what I have been reporting, based on the UMich/MIT/FreeBSD example in Anthropic’s own launch blog.

Preach, brother. Here’s the anti-cartel novelty pin I made for everyone to wear on calls about Mythos:

Stenberg was never allowed to run the prompts and report first-hand, while Anthropic remains in hiding. An unnamed third party ran Mythos, generated the report, and sent it over to keep up the ruse. No one outside that arrangement can audit what Mythos did autonomously versus what the human operator did. The “velvet rope” access prevents the actual comparisons that would settle the growing doubt about Anthropic.