Recently I wrote here about the ill-fated American operation “IGLOOWHITE” from the Vietnam War, which cost billions of dollars in an attempt to locate enemies using information gathered from many small sensors.

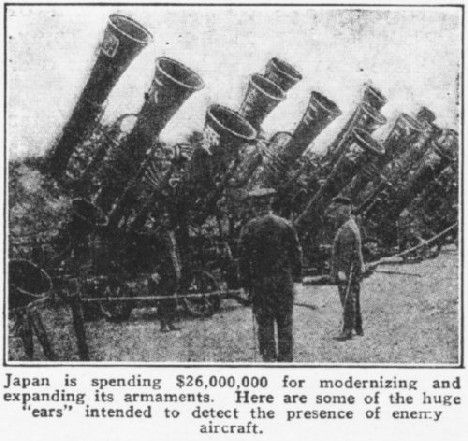

It’s in fact an old pursuit, as you can see from this news image of the Japanese Emperor inspecting his big 1936 investment in anti-aircraft data collection technology.

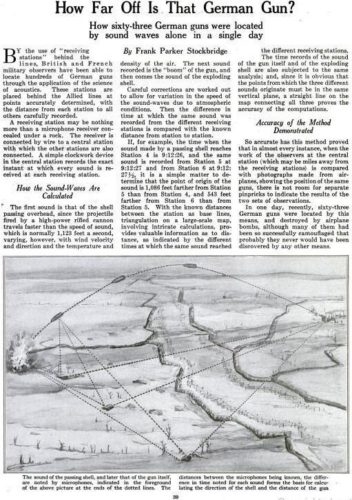

Even earlier, Popular Science published a story this month in 1918 called “How Far Off Is That German Gun? How sixty-three German guns were located by sound waves alone in a single day.”

Somewhere in between the Vietnam War and WWI narratives, we should expect the Defense Department to soon start showing off how it is using the latest location technology (artificial intelligence) to hit enemy targets.

The velocity of information between a sensor picking up signs of enemy movement and the counter-attack machinery is the stuff of constant research, probably as old as war itself.

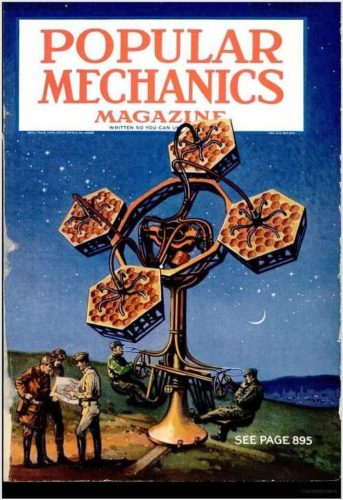

Popular Mechanics for its part also ran a cover story on acoustic locator devices, such as a pre-radar contraption that was highlighted as the future way to find airplanes.

The cover style looks to be from the 1940s, although so far I have only found the image, not the exact text.

That odd-looking floral arrangement meant for war was known as a Perrin acoustic locator (named for French Nobel prizewinner Jean-Baptiste Perrin) and it used four large clusters of 36 small hexagonal horns (six groups of six).

Such a complicated setup might have seemed like an improvement to some. For comparison, here are German soldiers in 1917 using a single personal field acoustic and sight locator to enhance the “flash bang” of enemy artillery.

Obviously the use of many small sensors gave way to the common big dish design we see everywhere today. Perhaps Igloo White could be seen as the Perrin data locator of its day?

Both are a perfect example of how simply multiplying the number of small sensors feeding a single processing unit is not necessarily the right approach, versus designing one very large sensor fit for purpose.

Update September 2020: “AI-Accelerated Attack: Army Destroys Enemy Tank Targets in Seconds”

…“need for speed” in the context of the well known Processing, Exploitation and Dissemination (PED) process which gathers information, distills and organizes it before sending carefully determined data to decision makers. The entire process, long underway for processing things like drone video feeds for years, has now been condensed into a matter of seconds, in part due to AI platforms like FIRESTORM. Advanced algorithms can, for instance, autonomously sort through and observe hours of live video feeds, identify moments of potential significance to human controllers and properly send or transmit the often time-sensitive information.

“In the early days we were doing PED away from the front lines, now it’s happening at the tactical edge. Now we need writers to change the algorithms,” Flynn explained.

“Three years ago it was books and think tanks talking about AI. We did it today,” said Army Secretary Ryan McCarthy.

Three years ago? Not sure why he uses that time frame. FIRESTORM promises to be an interesting new twist on IGLOOWHITE from around 50 years ago, and we would be wise to heed the severe “fire, ready, aim” mistakes made back then.