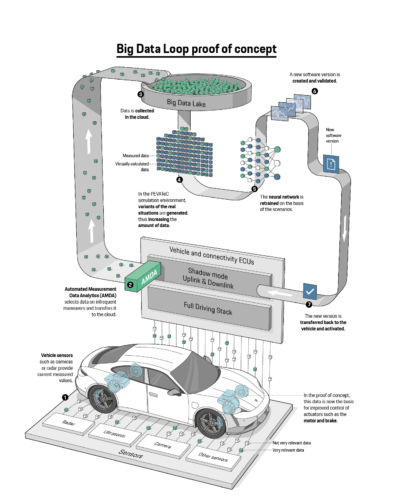

A new graphic from the Porsche newsroom is an excellent example of what I’ve been calling the gap between the ERM (easy, routine, minimal judgment) and ISEA (identify, store, evaluate, adapt) functions for every form of “intelligence”.

Data on “infrequent maneuvers” caught my eye in particular. I find it misleading to try to frame observations in the loop by frequency.

We might stop infrequently on every road (even city blocks tend to give more time rolling than stopping), yet we stop because of the events that matter most to our survival (e.g. intersections, obstacles).

In fact, if you look at Dan Ford’s dissertation about John Boyd (inventor of the famous OODA loop: observe, orient, decide, act), we’re reminded that “infrequent maneuvers” might be best framed as our constant reality (Page 50):

As Antoine Bousquet summarizes John Boyd’s thinking in The Scientific Way of Warfare, “Boyd believes in a perpetually renewed world that is ‘uncertain, ever-changing, unpredictable’ and thus requires continually revising, adapting, destroying and recreating our theories and systems to deal with it.” Grant Hammond expresses it this way: “Ambiguity is central to Boyd’s vision … not something to be feared but something that is a given…. We never have complete and perfect information. We are never completely sure of the consequences of our actions…. The best way to success … is to revel in ambiguity.”

There is, of course, an extremely high cost to reveling in ambiguity, versus the low cost of routines. But the point should still be taken that framing an expected risk as an infrequent one is a dangerous game to play.

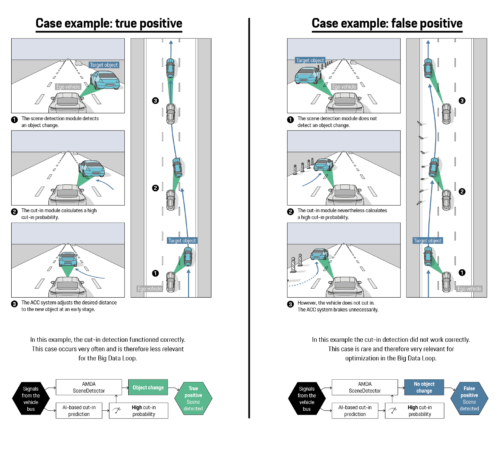

Back to the Porsche newsroom, my favorite image is actually this one:

The detection illustrated here is exactly the same as what I documented extensively and presented in 2016 with regard to Tesla sensor and learning failures (a tragic foreshadowing of Brown’s death just weeks after his lane change incident).