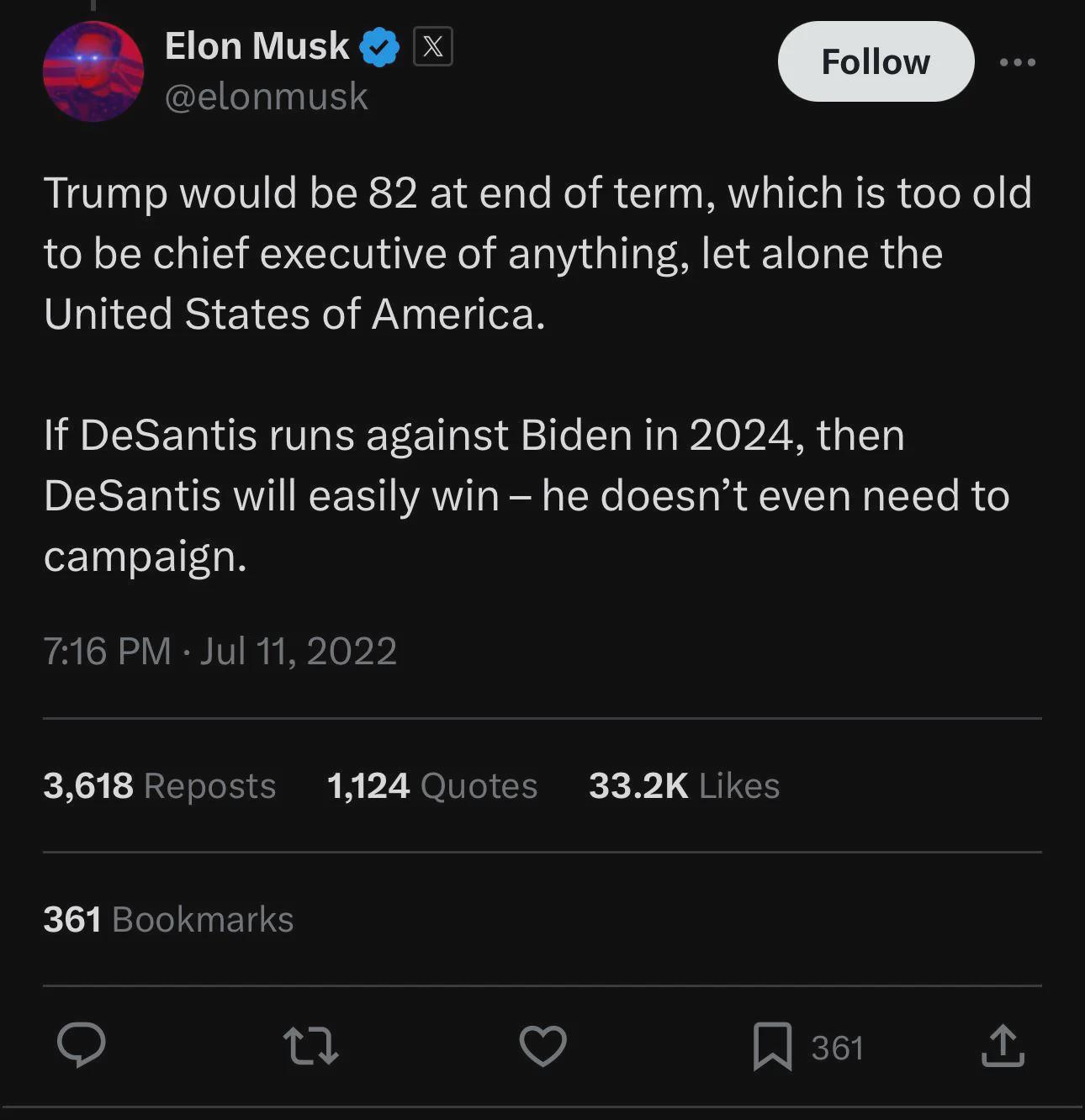

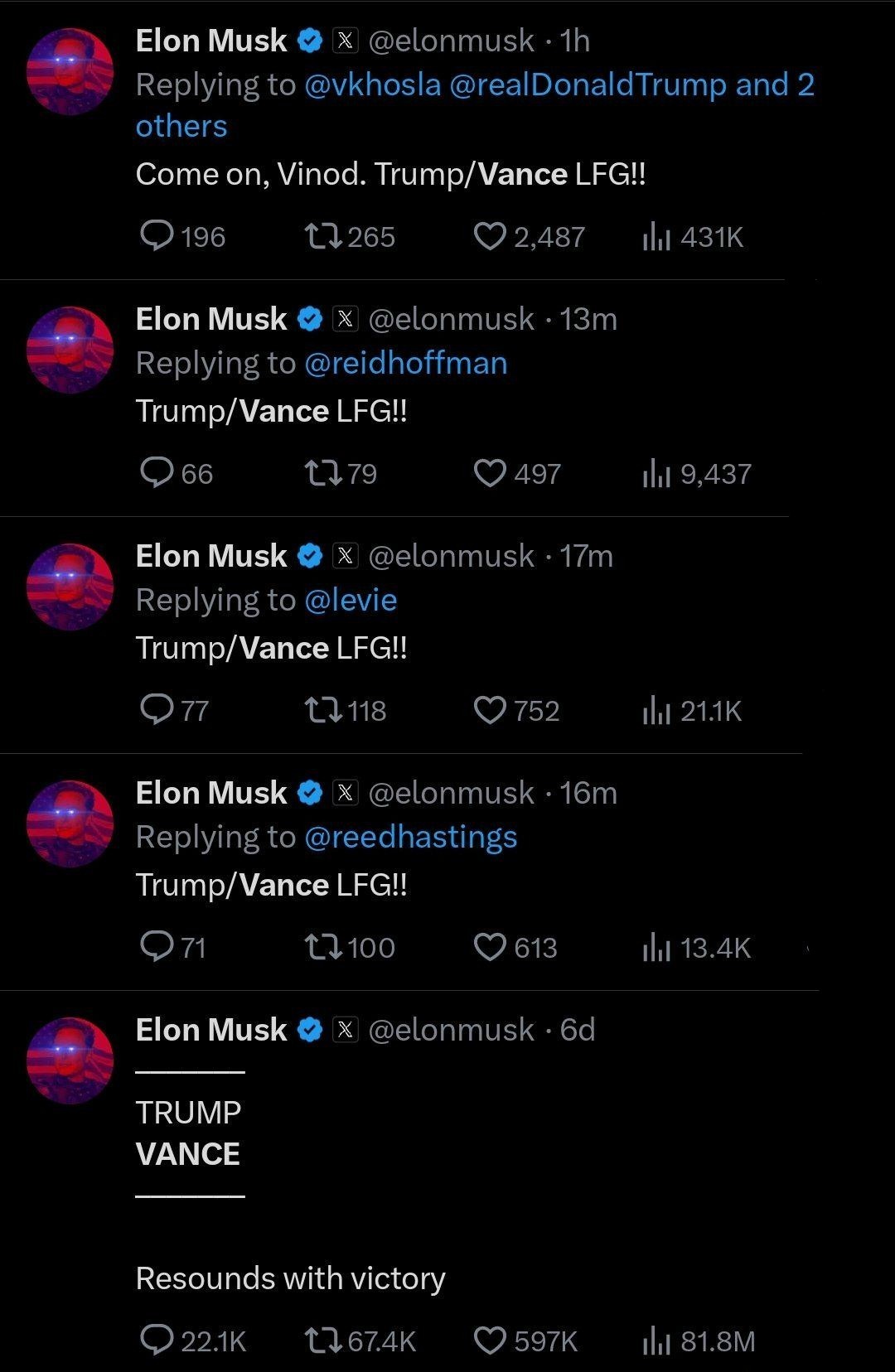

People have finally caught on to the Elon Musk fraud, much like the rapid end of Frank Abagnale, yet they seem merely tired of being defrauded rather than justifiably angry or vengeful.

Note the tone of this new report:

…the aggression of 12.4.3, followed by the vague promise of future improvement with “more data,” it just feels too familiar. Tesla announces an update with an unfalsifiable, absurd claim about “a giant leap” forward.

Reviewers film it slamming into curbs or driving the wrong way down public roads with pedestrians nearby, and say they are impressed by its promise, if only Tesla could iron out the “edge cases.” A new update drops, and the last issues are fixed. But seven new issues crop up, which will be gently noted in videos from fully bought-in “reviewers,” and the cycle repeats.

Fraud.

Without fraud there would be no Tesla. And the latest FSD has now been verified as significantly worse, a danger to society, unable to even deliver on the promises of 2016.

That’s the state of FSD in 2024. The technology is still not legally self-driving. It is still 100% on the driver if something happens. It is still unable to make good on promises Musk made in 2016, like the claim that it would be able to drive anywhere in the country with no one inside by 2018. By the end of 2020, there were supposed to be 1 million robotaxis on the road. By 2024 there were actually zero.

So what is anyone going to do about the Abagnale of cars? Why is this cycle allowed at all, let alone set to repeat? Where is the FBI on this? Too busy propping up CrowdStrike?