Elon Musk whined two days ago that his xAI “was not built right first time around” and “is being rebuilt from the foundations up.”

That sounds familiar to anyone who knows the story of the man who says everything is very soon to be delivered, yet never delivers. Tesla still has never been built right and is awash in design defect lawsuits, unable to stop itself killing people unnecessarily.

Ten of twelve xAI co-founders have skipped out of Dodge (similar to DOGE). Ten of TWELVE. Was it a start-up by committee?

His flagship AI product can’t compete in the market, just like his cars, his solar panels, his batteries, his… list goes on and on. Cheap, rushed, and sub-standard. The leader of his new AI “rebuild” project lasted just sixteen days before skipping Musk town too.

Naturally, SpaceX and Tesla loyalists are the ones being cannibalized to clean up the mess by finding someone, anyone, to fire at xAI other than Elon Musk. Expect a lot of heads to roll so Elon can keep his own for another future failure.

Perhaps most notably, six weeks ago, Tesla itself was defrauded to inflate xAI. It poured $2 billion of its shareholder money into what? Then SpaceX immediately acquired the shareholder investment to artificially generate a $250 billion valuation ahead of an attempt to IPO. Now Musk is telling investors all that money his left hand put into an opaque xAI box, which his right hand then valued extremely high, turned out to be totally worthless. Empty box, value disappearing trick. Magic! The thing he just sold both Tesla and SpaceX on, he now calls so broken it’s worthless.

In other words, just another day of Musk oil.

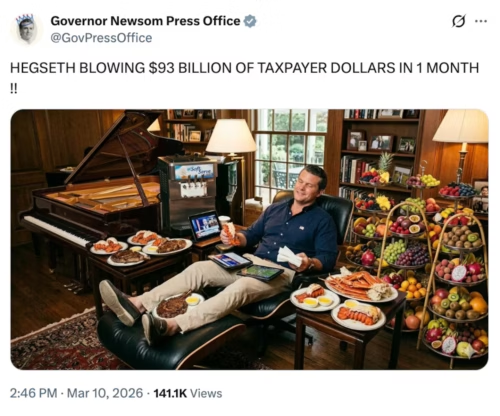

None of this yet seems to matter to the Pentagon, which itself was caught blowing tens of millions on steak and lobster dinner parties.

The $200 million Pentagon contract is as active as Hegseth’s reported daytime drinking habits. The GenAI.mil integration proceeds on schedule. Three million military and civilian personnel will be expected to say they don’t know how or why they are bombing girls’ schools, while using the “Mechahitler” genocide-promoting Grok of Elon Musk at Impact Level 5.

The Cybertruck of Hamburgers

On Wednesday, SFGATE visited the Tesla Diner in West Hollywood to investigate who, other than Pete Hegseth, would want a $40 double cheeseburger combo. Eight months after its grand opening, the reporter found a handful of customers with bored staff cleaning an empty red carpet. The head chef, like the xAI committee of founders, left within six months. The Optimus robot billed as the future of popcorn delivery is gone. The obnoxiously promoted 24/7 operation dropped to eighteen hours within two weeks of opening. Even the menu was slashed weeks after launch — blamed, naturally, on “unprecedented demand.”

Musk boasted that when the diner “turns out well,” Tesla would open locations in major cities worldwide. It turned out as well as the Cybertruck. Even protesters can’t be bothered to pay attention anymore.

This is the Elon Musk “success” template for all his brands. Announce a spectacle with “coming soon”. Extract investments from suckers. Slash the offering and slip the delivery. Ignore key personnel leaving and fire the rest to cook the books. Keep a shell running with hype and move on to the next announcement.

The most obvious and embarrassing example yet is xAI. It makes American regulators look like a joke for expecting the market to stop Musk. It was founded with twelve co-founders citing infinite ambitions, then undeservedly handed $2 billion from Tesla shareholders and $200 million in Pentagon contracts, only to be merged into SpaceX to cover up the mistakes.

The diner still has a building on Santa Monica Boulevard. xAI still has a government contract. Neither will ever work, and yet neither will be shut down.

When Product Isn’t the Product

The Electrek article covering Musk’s admission includes an ARC AGI chart showing xAI significantly behind everyone. Google, OpenAI, and Anthropic are crushing both performance and cost, while Elon Musk can’t get it up. TechCrunch reports the immediate trigger for the latest firings was Musk’s frustration that his coding tools couldn’t keep up, and his immediate reaction was to blame the people he hired. The two co-founders who left this week, Zihang Dai and Guodong Zhang, departed after Musk exposed his inability to accept blame.

But the Pentagon didn’t actually grant xAI an inside deal for its coding tools. The Pentagon bought a swastika (X) data pipe.

The Defense Department’s own announcement reveals the deal: users will gain “real‑time global insights from the X platform, providing War Department personnel with a decisive information advantage.”

Not better reasoning. Not better code. Not AI. Access to social media content owned by the same man who ran DOGE with access to all competitor contracting data.

Google, Anthropic, and OpenAI each got the same $200 million ceiling. Musk used foreign investors to acquire something the others didn’t have, then sold access to it: a live feed from a platform that is becoming a safe haven for regime-aligned disinformation and information warfare, repackaged as “situational awareness.”

What the Pentagon Actually Ordered

The SatNews analysis identified this sad reality in December: the deal cements the “Musk Stack” — SpaceX, Starlink, xAI — as a vertically integrated super-prime contractor, creating a single point of power failure and unprecedented vendor leverage over U.S. defense architecture. The launch pad, the satellite, the communications link, and now the analytical engine, all controlled by one right-wing radical. That’s not a procurement failure. That’s a procurement outcome with a political objective.

Katie Miller, wife of Trump’s deputy chief of staff Stephen Miller, was given a job at xAI despite no relevant experience, to falsely advertise Grok as the “only truth-seeking AI available to the US Government.” Her obvious lie should be illegal. Then a contract “came out of nowhere,” as Senator Warren documented. There was no xAI track record in government contracting. All it was selling was data, and political access.

The product was never the product. The sale is the pipeline: ideological alignment laundered through tech procurement, vendor lock-in sold as innovation, and social media surveillance feed billed as intelligence.

Broken by Design

Consider the full sequence.

- Creates xAI by committee

- Diverts resources

- Tesla invests $2B

- Merges into SpaceX

- Admits never worked

- Fires others

- Contract continues

Musk founded xAI despite Tesla’s own AI being well underway and better staffed and positioned. He confused and diverted talent and resources into a “better” thing. Tesla’s board foolishly dumps $2 billion into the gamble, and then he admits afterwards it never was built right. It’s all a chaotic relationship-dependency, attachment abuse, with nothing to do with actual technology.

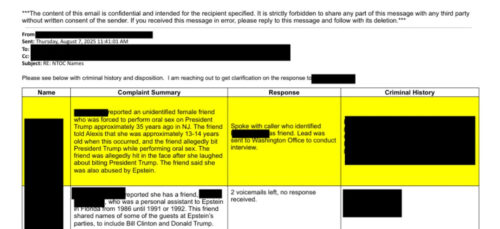

The early user surge of xAI in fact came from criminals exploiting lax regulation of Grok’s ability to produce sexual and even abusive imagery, per TechCrunch. The company generated CSAM after Musk ordered all the content safety removed. It’s under investigation by the UK, France, India, Malaysia, Canada, and Brazil because they aren’t run by the Epstein Files men. This is the company to which the California Attorney General issued a cease and desist for child exploitation content.

The Pentagon chose the worst technology, which not only can’t perform but has become a cesspool of CSAM and Nazism. Expect that next they’ll be signing a burger deal with the Tesla Diner.

The Fantasy of a Forever Rebuild

Musk declared he would drive cross-country without touching a steering wheel by 2017. He declared he would land his ship on Mars by 2018 because NASA was too slow due to their quality concerns.

So of course we should believe he will have all his coding tools ready by mid-year.

He’s apparently found two people from Cursor willing to take his investors’ money to figure it out. He’s reviewing all the rejected job applications and begging people to come get fired. He’s naming a project “Macrohard” because he thinks if he makes his mistakes funny, such as a lame reference to Microsoft, they won’t land on him.

The remaining co-founders, Manuel Kroiss and Ross Nordeen, are staying for unclear reasons. Using SpaceX to send CSAM into space? Front row seats to watch a CEO running six companies into the ground?

At the huge ostentatious Tesla Diner, a staff member spends an afternoon cleaning an empty red carpet that leads to a door that almost nobody walks through. It’s a perfect metaphor for Musk’s growing legacy as the worst businessman in history.

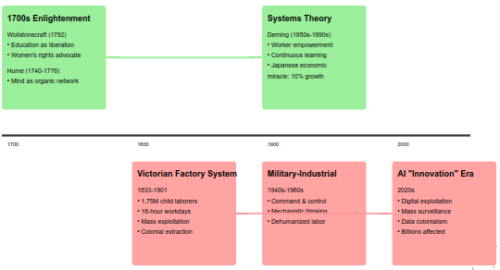

The German education system has an answer to this. The Ausbildung system treats craft mastery as a legitimate intellectual achievement, not a consolation prize for people who didn’t make it to university. A Meister has a protected title, a defined body of knowledge, and social standing that reflects actual competence. The system assumes society needs people who understand the substrate, and builds institutions to produce them.
