Full disclosure: I spent my undergraduate and graduate studies researching the ethics of intervention with a focus on the Horn of Africa. One of the most difficult questions to answer was how to define colonialism. Take Ethiopia, for example. It was never colonized and yet the British invaded, occupied and controlled it from 1940 to 1943 (the topic of my MSc thesis at LSE).

I’m not saying I am an expert on colonialism. I’m saying after many years of research including spending a year reading original papers from the 1940s British Colonial office and meeting with ex-colonial officers, I have a really good sense of how hard it is to become an expert on colonialism.

Since then, every so often, I hear someone in the tech community coming up with a theory about colonialism. I do my best to dissuade them from going down that path. Here came another opportunity on Twitter from Zooko:

This short post instantly changed my beliefs about global development. “The Dawn of Cyber-Colonialism” by @GDanezis

If nothing else, I would like to encourage Zooko and the author of “The dawn of Cyber-Colonialism” to back away from simplistic invocations of colonialism and choose a different discourse to make their point.

Maybe I should start by pointing out an irony often found in the anti-colonial argument. The usual worry about “are we headed towards colonialism” is tied to some rather unrealistic assumptions. It is like a thinly-veiled way for someone to think out loud: “our technology is so superior to these poor savage countries, and they have no hope without us, we must be careful to not colonize them with it”.

A lack of self-awareness in commercial views is an ancient factor. John Stuart Mill, for example in the 1860s, used to opine that only through a commercial influence would any individual realize true freedom and self-governance; yet he feared colonialists could spoil everything through not restraining or developing beyond their own self-interests. His worry was specifically that colonizers did not understand local needs, did not have sympathy, did not remain impartial in questions of justice, and would always think of their own profits before development. (Considerations on Representative Government)

I will leave the irony of the colonialists’ colonialism lament at this point, rather than digging into what motivates someone’s concern about those “less-developed” people and how the “most-fortunate” will define best interests of the “less-fortunate”.

People tend to get offended when you point out they may be the ones with colonialist bias and tendencies, rather than those they aim to criticize for being engaged in an unsavory form of commerce. So rather than delve into the odd assumptions taken among those who worry, instead I will explore the framework and term of “colonialism” itself.

Everyone today hates, or should hate, the core concepts of colonialism because the concept has been boiled down so much that it is little more than an evil relic of history.

A tempting technique in discourse is to create a negative association. Want people to dislike something? Just broadly call it something they already should dislike, such as colonialism. Yuck. Cyber-colonialism, future yuck.

However, using an association to colonialism actually is not as easy as one might think. A simplified definition of colonialism tends to be quite hard to get anyone to agree upon. The subjugation of a group by another group through integrated domination might be a good way to start the definition. And just look at all the big words in that sentence.

More than occupation, more than unfair control or any deals gone wrong, colonialism is tricky to pin down because of elements of what is known as “colonus” and of measuring success as agrarian rather than nomadic.

Perhaps a reverse view helps clarify. Eve Tuck wrote in “Decolonization is Not a Metaphor” that restoration from colonization means being made whole (restoration of ownership and control).

Decolonization brings about the repatriation of Indigenous land and life; it is not a metaphor for other things we want to do to improve our societies and schools.

The exit-barrier to colonialism is not just a simple change to political and economic controls, and it’s not a competitive gain, it’s undoing systemic wrongs to make things right.

After George Zimmerman unjustly murdered Trayvon Martin — illegally stole a man’s life and didn’t pay for it — the #blacklivesmatter movement was making the obvious case for black lives to be valued. Anyone arguing against such a movement that values human life, or trying to distract from it with whataboutism (trying to refocus on lives that are not black), perpetuates an unjust devaluation (illegal theft, immoral end) to black life.

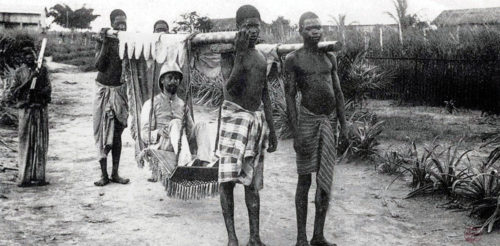

Successful colonies thus can be characterized by an active infiltration by people who settle in with persistent integration to displace and deprive control; anyone they find is targeted in order to “gain” (steal) from their acquired assets. Women are raped, children are abused, men are tortured… all the while being told that if they ask for equality, let alone reparations for loss, they are being greedy and will be murdered (e.g. lynched by the KKK).

It is an act of violent equity misdirection and permanent displacement coupled with active and forced reprogramming to accept severe and perpetual loss of rights as some kind of new norm (e.g. prison or labor camp). Early explorations of selfish corporations for profit gave little or nothing in return for their thefts, whenever they could find a powerful loophole like colonialism that unfairly extracted value from human life.

Removing something colonus, therefore, is unlike removing elements performing routine work along commercial lines. Even if you fire the bad workers, or remove toxic leadership, the effects of deep colonialism are very likely to remain. Instead, removal means to untangle and reverse steps that had created output under an unjust commercially-driven “civilization”; equity has to flow back to places forced to accept they would never be given any realization or control of their own value.

That is why something like de-occupation is comparatively easy. Even redirecting control, or cancelling a deal or a contract, is easy compared to de-colonization.

De-colonization is very hard.

If I must put it in terms of IT, we’re talking about hardware that actively tries to take control of my daily life and integrate into my processes while reducing my control over direction. It is not just a bad chip that is patched or replaced; it is an entire business process attack that requires deep rethinking of how gains/losses are calculated.

It would be like someone infecting our storage devices with bitcoin mining code or artificial intelligence (i.e. chatbot, personal assistant) that not only drive profits but also are used to permanently settle in our environment and prevent us from having a final say about our own destiny. It’s a form of blackmail, of having your own digital life ransomed to you.

Reformulating business processes is very messy, and far worse than fixing bugs.

My study in undergraduate and graduate school really tried to make sense of the end of colonialism and the role of foreign influence in national liberation movements through the 1960s.

This was not a study of available patching mechanisms or finding a new source of materials. I never found, not even in the extensive work of European philosophers, a simple way to describe the very many facets of danger from always uninvited (or even sometimes invited) selfish guests who were able to invade and then completely run large complex organizations. Once inside, once infiltrated, the system has to reject the thing it somehow became convinced it chose to be its leader.

Perhaps now you can see the trouble with colonialism definitions.

Now take a look at this odd paraphrase of the Oxford Dictionary (presumably because the author is from the UK), used to set up the blog post called “The dawn of Cyber-Colonialism”:

The policy or practice of acquiring full or partial political control over another country’s cyber-space, occupying it with technologies or components serving foreign interests, and exploiting it economically.

Pardon my French but this is complete bullshit. Such a definition at face value is far too broad to be useful. Partial control over another country by occupying it with stuff to serve foreign interest and exploiting it sounds like what most would call imperialism at worst, commerce at best. I mean nothing in that definition says “another country” is harmed. Harm seems essential. Subjugation is harmful. That definition also doesn’t say anything about being opposed to control or occupation, let alone exploitation.

I’m not going to blow apart the definition bit-by-bit as much as I am tempted. It fails across multiple levels and I would love to destroy each.

Instead I will just point out that such a horrible definition would result in Ethiopia having to say it was colonized because of British 1940 intervention to remove Axis invaders and put Haile Selassie back into power. Simple test. That definition fails.

Let me cut right to the chase. As I mentioned at the start, those arguing that we are entering an era of cyber-colonialism should think carefully whether they really want to wade into the mess of defining colonialism. I advise everyone to steer clear and choose other pejorative and scary language to make a point.

Actually, I encourage them to tell us how and why technology commerce is bad in precise technical details. It seems lazy for people to build false connections and use association games to create negative feeling and resentment instead of being detailed and transparent in their research and writing.

On that note, I also want to comment on some of the technical points found in the blog claiming to see a dawn of colonialism:

What is truly at stake is whether a small number of technologically-advanced countries, including the US and the UK, but also others with a domestic technology industry, should be in a position to absolutely dominate the “cyber-space” of smaller nations.

I agree in general there is a concern with dominance, but this representation is far too simplistic. It assumes the playing field is made up of countries (presumably UK is mentioned because the blog author is from the UK), rather than what really is a mix of many associations, groups and power brokers. Google, for example, was famous in 2011 for boasting it had no need for any government to exist anymore. This widely discussed power hubris directly contradicts any thesis that subjugation or domination come purely from the state apparatus.

Consider a small number of technologically-advanced companies. Google and Amazon are in a position to absolutely dominate the cyber-space of smaller nations. This would seem as legitimate a concern as past imperialist actions. We could see the term “Banana Republic” replaced as countries become a “Search Republic”.

It’s a relationship fairly easy to contemplate because we already see evidence of it. Google’s chairman told the press he was proud of “Search Republic” policies and completely self-interested commerce (the kind Mill warned about in 1861): he said, “It’s called capitalism.”

Given the mounting evidence of commercial and political threat to nations from Google, what does cyber-colonialism really look like in the near, or even far-off, future?

Back to the blog claiming to see a dawn of colonialism, here’s a contentious prediction of what cyber-colonialism will look like:

If the manager decides to go with modern internationally sourced computerized system, it is impossible to guarantee that they will operate against the will of the source nation. The manufactured low security standards (or deliberate back doors) pretty much guarantee that the signaling system will be susceptible to hacking, ultimately placing it under the control of technologically advanced nations. In brief, this choice is equivalent to surrendering the control of this critical infrastructure, on which both the economic well-being of the nation and its military capacity relies, to foreign power(s).

The blog author, George Danezis, apparently has no experience with managing risk in critical infrastructure or with auditing critical infrastructure operations so I’ll try to put this in a more tangible and real context:

Recently on a job in Alaska I was riding a state-of-the-art train. It had enough power in one engine to run an entire American city. Perhaps I will post photos here, because the conductor opened the control panels and let me see all of the great improvements in rail technology.

The reason he could let me in and show me everything was that the entire critical infrastructure was shut down. I was told this happened often. Whenever the central switching system had a glitch, which was more often than you might imagine, all the trains everywhere were stopped. After touring the engine, I stepped off the train and up into a diesel truck driven by a rail mechanic. His beard was as long as a summer day in Anchorage and he assured me trains have to be stopped due to computer failure all the time.

I was driven back to my hotel because no trains would run again until the next day. No trains. In all of Alaska. America. So while we opine about colonial exploitation of trains, let’s talk about real reliability issues today and how chips with backdoors really stack up. Someone sitting at the keyboard can worry about resilience of modern chips all they want but it needs to be linked to experience with “modern internationally sourced computerized system” used to run critical infrastructure. I have audited critical infrastructure environments since 1997 and let me tell you they have a very unique and particular risk management model that would probably surprise most people on the outside.

Risk is something rarely understood from an outside perspective unless time is taken to explore actual faults in big-picture environments and to study actual events happening now and in the past. In other words, you can’t do a very good job auditing without spending time doing the audit, on the inside.

A manager going with a modern internationally sourced computerized system is (a) subject to a wide spectrum of factors of great significance (e.g. dust, profit, heat, water, parts availability, supply chains), and (b) worried about the presence of backdoors for the opposite reason you might think: they represent hope for support and help during critical failures. I’ll say it again, they WANT backdoors.

It reminds me of a major backdoor into a huge international technology company’s flagship product. The door suggested potential for access to sensitive information. I found it, I reported it. Instead of alarm from this company, I was repeatedly assured I had stumbled upon a “service” highly desirable to customers who did not have the resources or the desire to troubleshoot critical failures. I couldn’t believe it. But as the saying goes: one person’s bug is another person’s feature.

To make this absolutely clear, there is a book called “Back Door Java” by Newberry that I highly recommend people read if they think computer chips might be riddled with backdoors. It details how the culture of Indonesia celebrates the backdoor as an integral element of progress and resilience in daily lives.

Cooking and gossip are done through a network of access to everyone’s kitchen, in the back of a house, connected by an alley. Service is done through back, not front, paths of shared interests.

This is not that peculiar when you think about American businesses that hide critical services in alleys and loading docks away from their main entrances. A hotel guest in America might say they don’t want any backdoors until they realize they won’t be getting clean sheets or even soap and toilet-paper. The backdoor is not inherently evil and may actually be essential. The question is whether abuse can be detected or prevented.

Dominance and control are quite complex when you really look at the relationships of groups and individuals engaged in access paths that are overt and covert.

So back to the paragraph we started with, I would say a manager is not surrendering control in the way some might think when access is granted, even if access is greater than what was initially negotiated or openly/outwardly recognized.

With that all in mind, reconsider the subsequent colonization arguments given by “The dawn of Cyber-Colonialism”:

Not opting for computerized technologies is also a difficult choice to make, akin to not having a mobile phone in the 21st century. First, it is increasingly difficult to source older hardware, and the low demand increases its cost. Without computers and modern network communications is it also impossible to benefit from their productivity benefits. This in turn reduces the competitiveness of the small nation infrastructure in an international market; freight and passengers are likely to choose other means of transport, and shareholders will disinvest. The financial times will write about “low productivity of labor” and a few years down the line a new manager will be appointed to select option 1, against a backdrop of an IMF rescue package.

That paragraph has an obvious false choice fallacy. The opposite of granting access (prior paragraph) would be not granting access. Instead we’re being asked in this paragraph to believe the only other choice is lack of technology.

Does anyone believe it is increasingly difficult to source older hardware? We are given no reason. I’ll give you two reasons why old hardware could be increasingly easy to source: reduced friction and increased privacy.

About 20% of people keep their old device because it’s easier than selling it. Another 20% keep their device because of privacy concerns. That’s 40% of old hardware sitting and ready to be used, if only we could erase the data securely and make it easy to exchange for money. SellCell.com (trying to solve one of the problems) claims the source of older cellphone hardware in America alone now is about $47 billion worth.

And who believes that low demand increases cost? What kind of economic theory is this?

Scarcity increases cost, but we do not have evidence of scarcity. We have the opposite. For example, there is no demand for the box of Blackberry phones sitting on my desk.

Are you willing to pay me more for a Blackberry because of low demand?

Even more suspect is a statement that without computers and modern network communications it is impossible for a country to benefit. Having given us a false choice fallacy (either have the latest technology or nothing at all), are we now to believe everyone in the world who doesn’t buy technology is doomed to fail and devalue their economy?

Apply this to ANY environment and it should be abundantly clear why this is not the way the world works. New technology is embraced slowly, cautiously (relative terms) versus known good technology that has proven itself useful. Technology is bought over time with varying degrees of being “advanced”.

To further complicate the choice, some supply chains have a really long tail due to the nature of a device achieving a timeless status and generating localized innovation with endless supplies (e.g. the infamous AK-47, classic cars).

To make this point clearer, just tour the effects of telecommunications providers in countries like South Africa, Brazil, India, Mexico, Kenya and Pakistan. I’ve written about this before on many levels and visited some of them.

I would not say it is the latest or greatest tech, but tech available, which builds economies by enabling disenfranchised groups to create commerce and increase wealth. When a customer tells me they can only get 28.8K modem speeds I do not laugh at them or pity them. I look for solutions that integrate with slow links for incremental gains in resilience, transparency and privacy. When I’m told 250ms latency is a norm it’s the same thing, I’m building solutions to integrate and provide incremental gains. It’s never all-or-nothing.

A micro-loan robot in India that goes into rough neighborhoods to dispense cash, for example, is a new concept based on relatively simple supplies that has a dramatic impact. Groups in a Kenyan village share a single cell-phone and manage it similarly to the old British phone booth. There are so many more examples, none of which break down in simple terms of the amazing US government versus technologically-poor countries left vulnerable.

And back to the blog paragraph we started with, my guess is the Financial Times will write about “productivity of labor” if we focus on real risk, and a few years down the line new managers will be emerging in more places than ever.

Now let’s look at the conclusion given by “The dawn of Cyber-Colonialism”:

Maintaining the ability of western signals intelligence agencies to perform foreign pervasive surveillance, requires total control over other nations’ technology, not just the content of their communication. This is the context of the rise of design backdoors, hardware trojans, and tailored access operations.

I don’t know why we should believe anything in this paragraph. Total control of technology is not necessary to maintain the ability of intelligence. That defies common sense. Total control is not necessary to have intelligence be highly effective, nor does it mean intelligence will be better than having partial or incomplete control (as explained best by David Hume).

My guess is that paragraph was written with those terms because they have a particular ring to them, meant to evoke a reaction rather than explain a reality or demonstrate proof.

Total control sounds bad. Foreign pervasive surveillance sounds bad. Design backdoors, Trojan horses and tailored access (opposite of total control) sound bad. It all sounds so scary and bad, we should worry about them.

But back to the point, even if we worry because such scary words are being thrown at us about how technology may be tangled into a web of international commerce and political purpose, nothing in that blog on “cyber-colonialism” really comes even close to qualifying as colonialism.