A BBC business story on the Sony breach flew across my screen today. Normally I would read through and be on my way. This story, however, was so riddled with strange and simple errors that I had to stop and wonder: who really reads this without pause? Perhaps we need a Snopes of theories on hackers.

A few examples

Government-backed attackers have far greater resources at their disposal than criminal hacker gangs…

False. Criminal hacker gangs can amass far greater resources more quickly than government-backed ones. Consider how criminal gangs operate relative to the restrictions of the “governed”. Government-backed groups have constraints on budget, accountability, jurisdiction…. I am reminded of the Secret Service agent who told me how he had to scrape and toil for months to bring together an international team with resources and approval. After finally getting approval, his group descended in a helicopter onto the helipad of a criminal property that was literally a massive gilded castle surrounded by exotic animals and vehicles. Government agencies were outclassed on almost every level, yet careful planning, working together and correct timing were on their side. The bust was successful despite strained resources across several countries.

Of course it is easy to find opposite examples. The government invests in the best equipment to prepare for some events and clearly we see “defense” budgets swell. This is not the point. In many scenarios of emerging technology you find innovation and resources are handled better by criminal gangs who lack constraints of being governed — criminals can be as lavish or unreasonable as they decide. Have you noticed anyone lately talking about how Apple or Google have more money than Russia?

Government-backed hackers simply won’t give up…

False. This should be self-evident from the answer above. Limited resources and even regime change are some of the obvious reasons why government-backed anything will give up. In the context of North Korea, let alone wider history of conflict, we only have to look at a definition of the current armistice that is in place: “formal agreement of warring parties to stop fighting”.

Two government-backed sides in Korea formally “gave up” and signed an armistice agreement on July 27, 1953, at 10 a.m.

Perhaps some will not like this example because North Korea is notorious for nullifying the armistice as a negotiation tactic. Its constant reminders of its intent for reunification can make it seem as though it has refused to give up. I’d disagree, on the principle of what armistice means. Even so, let’s consider instead the U.S. role in Vietnam. On January 27, 1973 an “Ending the War and Restoring Peace in Viet-Nam” Agreement was signed by the U.S. and others in conflict; by the end of 1973 the U.S. had unquestionably given up attacks and three years later North and South were united.

I also am tempted to point to famous pirates (Ching Shih or Peter Easton) who “gave up” after a career of being sponsored by various states to attack others. They simply inked a deal with one sponsor to retire.

What you need is a bulkhead approach like in a ship: if the hull gets breached you can close the bulkhead and limit the damage…

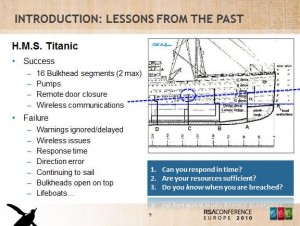

True with Serious Warning. To put it simply, bulkheads are a tool, not a complete solution. This is the exact mistake that led to the Titanic disaster. A series of bulkheads (with some fancy new technology hand-waving of the time) were meant to keep the ship safe even when breached. This led people to refer to the design as “unsinkable”. So if the Titanic sank how can bulkheads still be a thing to praise?

I covered this in my keynote presentation for the 2010 RSA Conference in London. Actually, I wasn’t expecting to be a keynote and packed my talk with details. Then I found myself on main stage, speaking right after Richard Clarke, which made it awkward to fit in my usual pace of delivery. Anyway, here’s a key slide of the keynote.

The bulkheads gave a false sense of confidence, allowing a greater disaster to unfold for a combination of reasons. See how “wireless issues” and “warnings ignored” and “continuing to sail” and “open on top” start to add up? In other words, if you hit something and detect a leak you tend to make an earlier assessment and a more complete one, one that affects the whole ship. If you instead think “we’ve got bulkheads, keep going”, a leak that could be repaired or slowed turns very abruptly into a terminal event, a sinking.

Clearly Sony had been breached in one of their bulkheads already. We saw the PlayStation breach in 2011 have dramatic and devastating impact. Sony kept sailing, probably with warnings ignored elsewhere, communications issues, and thinking improvements in one bulkhead area of the company were sufficient. Another breach devastated them in 2013 and they continued along…so perhaps you can see how bulkheads are a tool that offers great promise yet requires particular management to be effective. Bulkheads all by themselves are not a “need”. Like a knife, or any other tool that makes defense easier, what people “need” is to learn how to use them properly: keep the pointy side in the right direction.

Another way of looking at the problem

The rest of the article runs through a mix of several theories.

One theory mentioned is to delete data to avoid breaches. This is good specific advice, not good general advice. If we were talking about running out of storage room people may look at deletion as a justified option. If the data is not necessary to keep and carries a clear risk (e.g. post-authorization payment card data fines) then there is a case to be made. And in the case of regulation then the data to be deleted is well-defined. Otherwise deleting poorly-defined data actually can make things worse through rebellion.

A company tells its staff that the servers will be purging data and you know what happens next? Staff start squirreling away data on every removable storage device and cloud provider they can find because they still see that data as valuable, necessary to be successful, and there’s no real penalty for them. Moreover, telling everyone to delete email that may incriminate is awkward strategy advice (e.g. someone keeps a copy and you delete yours, leaving you without anything to dispute their copy with). Also it may be impossible to ask this of environments where data is treated as a formal and permanent record. People in isolation could delete too much or the wrong stuff, discovered too late by upper management. Does that risk outweigh the unknown potential of breach? Pushing a risk decision away from the captain of a ship and into bulkheads without good communication can lead to Titanic miscalculations.

Another theory offered is to encrypt and manage keys perfectly. Setting aside perfect anything management, encryption is seriously challenged by an imposter event like Sony. A person inside an environment can grab keys. Once they have the keys they have to be stopped by requiring other factors of identification. Asking the imposter to provide something they have or something they are is where the discussion often will go: stronger authentication controls both to prevent attacks spreading and also to help alert management to a breach in progress. Achieving this tends to require better granularity in data (fewer bulkheads) and also more of it (fewer deletions). The BBC correctly pointed out that there is balance, yet by this point the article is such a mess they could say anything in conclusion.
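To make the "something they have" point a little more concrete, here is a minimal sketch of a key store that refuses to hand over a key unless a one-time code from a separate device checks out, and that records an alert when it does not. Everything in it is my own illustration rather than anything from the BBC story or Sony's environment: `KeyVault`, `release_key` and `one_time_code` are hypothetical names, and I am assuming a counter-based code built the way RFC 4226 (HOTP) describes.

```python
import hashlib
import hmac
import struct
from typing import List, Optional


def one_time_code(secret: bytes, counter: int) -> str:
    """Six-digit counter-based code, following the RFC 4226 (HOTP) construction."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return f"{value % 1_000_000:06d}"


class KeyVault:
    """Hypothetical vault: holding a login is not enough to obtain the data key."""

    def __init__(self, data_key: bytes, device_secret: bytes) -> None:
        self._data_key = data_key
        self._device_secret = device_secret  # assumed to be shared with the user's token
        self._counter = 0
        self.alerts: List[str] = []  # stand-in for paging whoever watches for breaches

    def release_key(self, user: str, code: str) -> Optional[bytes]:
        expected = one_time_code(self._device_secret, self._counter)
        if hmac.compare_digest(expected.encode(), code.encode()):
            self._counter += 1  # each code works only once
            return self._data_key
        # A failed second factor is a signal worth reporting, not just a denial.
        self.alerts.append(f"second factor failed for {user}")
        return None
```

An imposter who has stolen a password but not the token gets `None` back and leaves an entry in `alerts`, which is the "help alert management" half of the argument. A real deployment would also need rate limiting, counter resynchronization and protected storage for the device secret, none of which this sketch attempts.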

What I am saying here is think carefully about threats and solutions if you want to manage them. Do not settle on glib statements that get repeated without much thought or explanation, let alone evidence. Containment can work against you if you do not manage it well, adding cost and making a small breach into a terminal event. A boat obviously will use any number of technologies, new and old, to keep things dry and productive inside. A lot of what is needed relates to common sense about looking and listening for feedback. This is not to say you need some super guru as captain; rather it is the opposite. You need people committed to improvement, to reporting when things are not as they should be, in order to achieve a well-run ship.

Those are just some quick examples above and how I would position things differently. Nation-states are not always in a better position. Often they are hindered. Attackers have weaknesses and commitments. Finding a way to make them stop is not impossible. And ultimately, throwing around analogies is GREAT as long as they are not incomplete or applied incorrectly. Hope that helps clarify how to use a little common sense to avoid errors being made in journalist stories on the Sony breach.