I just got off the phone with a technology reporter for a major newspaper, who asked me about the risks of facial recognition.

That’s a deep topic, since crime using faces (deepfakes, impersonation) can apply to almost anything (fraud/theft, racism, classism). However, I ended the call by warning about even broader recognition risks linked with crime: automobiles acting as human exoskeletons, misidentifying aspects of the world around them and causing death.

Tesla, to me, seems to present the most dangerous and negligent engineering examples in the world. They exhibit a recurring inability to read path obstacles or even basic traffic signals, ultimately treating unqualified drivers as their unwitting crash test dummies.

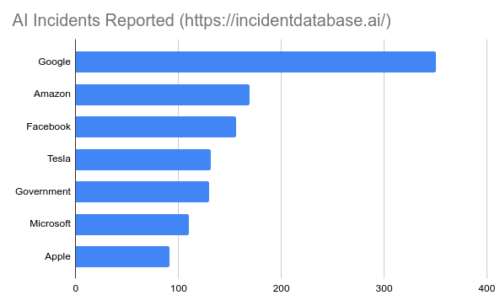

Overall Tesla is reported to have fewer screw-ups than Google in terms of sheer quantity, yet every time Tesla fails it may be another avoidable fatality.

To set the stage properly: in April 2020, Tesla announced it was giving its customers an update to detect traffic lights.

After testing on public roads, Tesla is rolling out a new feature of its partially automated driving system designed to spot stop signs and traffic signals.

The update of the electric car company’s cruise control and auto-steer systems is a step toward CEO Elon Musk’s pledge to convert cars to fully self-driving vehicles later this year.

But it also runs contrary to recommendations from the U.S. National Transportation Safety Board that include limiting where Tesla’s Autopilot driving system can operate because it has failed to spot and react to hazards in at least three fatal crashes.

Ok, three very important points to make based on this old press release and what we know today:

- Tesla has been testing on public roads by releasing unsafe, unproven engineering and then waiting to see how bad it is. They exhibit willful disregard for safety and complete lack of ethics.

- “Later this year” would have been 2020, and the CEO’s pledge was (like all his pledges) proven to be deceptive if not entirely false. It’s been over a year and their software still fails the most basic tests.

- The US safety regulator is only making recommendations, whereas it seems like they should be figuring out how to ban Tesla from operating on public roads.

Perhaps it was summarized better by two safety experts in that same article, who describe Tesla engineering as a kind of scam.

Jason Levine, executive director of the Center for Auto Safety, a nonprofit watchdog group, said Tesla is using the feature to sell cars and get media attention, even though it might not work. “Unfortunately, we’ll find out the hard way,” he said.

Whenever one of its vehicles using Autopilot is involved in a crash, Tesla points to “legalese” warning drivers that they have to pay attention, Levine said. But he said Tesla drivers have a history of over-relying on the company’s electronics.

Missy Cummings, a robotics and human factors professor at Duke University, fears that a Tesla will fail to stop for a traffic light and a driver won’t be paying attention. She also said Tesla is using its customers for “free testing” of new software.

Now a new video shows exactly what the experts predicted: a new “Full Self Driving” product fails to register a strip of three green stop lights turning yellow.

To be clear about the failure, we can see very distinct green lights to start with.

Then watch what happens when they shift to yellow.

The human in the car observes the following sequence:

- 0.92 seconds (~100ft): computer recognizes light change

- 1.88 seconds (~200ft): computer applies brakes

- 2.00 seconds: computer disables itself at 47 mph

I can confirm 100% that AutoPilot started applying brakes at +1.88s from the light change, the emergency alarm sounded at +2.0s (47mph), and I applied additional brakes myself at +3.75s (35mph) to ensure I didn’t enter the intersection.
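As a rough sanity check on those timings, here is a minimal sketch that converts each delay into ground covered. It assumes the car holds a constant speed of roughly 50 mph until the brakes engage. That is my approximation, since the driver reports 47 mph by the 2.0 second mark. The function name `feet_traveled` is illustrative only and does not come from the video or from Tesla.

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def feet_traveled(speed_mph: float, seconds: float) -> float:
    """Distance in feet covered at a constant speed over the given time."""
    feet_per_second = speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR
    return feet_per_second * seconds


if __name__ == "__main__":
    # Assumed constant speed; the real car was presumably slowing slightly.
    assumed_speed_mph = 50
    events = [
        ("light change recognized", 0.92),
        ("brakes applied", 1.88),
        ("alarm sounded", 2.00),
    ]
    for label, delay in events:
        distance = feet_traveled(assumed_speed_mph, delay)
        print(f"{label}: +{delay:.2f}s, about {distance:.0f} ft traveled")
```

Under that constant-speed assumption the car covers roughly 67 ft before the light change registers and roughly 138 ft before any braking begins. The rounded distances in the list above may be measuring something different, such as distance to the intersection, so treat this only as a scale check on how much road passes during a delay of under two seconds.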

Perhaps most notable in terms of criminal behavior, and per my comment above about unqualified drivers, a YouTube account commenting on this incident proudly admits trying to pump his accelerator and run red lights.

Did you catch that account name? The notorious racist slogan: “All Lives Matter”.

“All Lives Matter” wants everyone to know that when a Tesla says it sees a red light, he has not been able to force it to drive through anyway.

Keep in mind that “All Lives Matter” is a slogan of violent social media terror campaigns that have been trying to convince American drivers to drive through crowds, run over people to kill them and silence speech.

Here we see not only Tesla safety engineering failing, but that a YouTube discussion of failures is being linked to a domestic terror campaign that violates traffic laws, specifically ignoring orders to stop.

Consider a new story about a man who just used this exact “pump” accelerator method to kill people:

Knajdek was using her car to block the intersection to protect the protesters as they demonstrated. Protester Ty Henderson says Knajdek was becoming a leader in the movement for justice. He says she was leading the game of “Red Light, Green Light” with the crowd when he witnessed the unthinkable. “The only reason I saw it was because I heard the tires screech and the engine rev up. Like, I heard it and I’m like, ‘What is that?’ And I look up and all I see is headlights, and all I could think is, like, it’s going faster,” Henderson said.

“Engine rev up” is an outsider perspective. The attacker was playing a different game called “Red light, Pedal Pump”.

In related news:

Canadian Prime Minister Justin Trudeau has denounced an attack involving a driver accused of plowing a pickup truck into an immigrant family of five, killing four of them.

When Tesla can’t see green lights switch to yellow properly, is there any real expectation it would see a human child properly?

And isn’t that exactly what some Tesla buyers are attracted to, a sense of irresponsibility and privilege? The CEO is allegedly enticing people to give him money so they can cause harm with impunity, a sick form of social entry that seems based in apartheid.

Safety is not a joke, yet Tesla’s CEO seems obsessed with spreading negligence. He tells regulators that important safety words like flamethrower and driverless can mean whatever he wants them to mean, regardless of fact.

More than 1,000 [Tesla CEO] flamethrower purchasers abroad have had their devices confiscated by customs officers or local police, with many facing fines and weapons charges. In the U.S., the flamethrowers have been implicated in at least one local and one federal criminal investigation. There have also been at least three occasions in which the Boring Company devices have been featured in weapons hauls seized from suspected drug dealers.

[…]

Tesla, the electric automaker led by Musk, has been criticized for naming its advanced driver assistant system Autopilot and for calling the $10,000 add-on option Full Self-Driving (FSD), even though the driver must remain engaged at all times and is legally liable. A German court has banned the company from using the terms “Autopilot” or “full potential for autonomous driving” on its website or in other marketing materials.

Indeed, should Tesla be banned? German courts today know a thing or two about stopping mass harms before it’s too late.

Update January 2023: A Tesla that reportedly would not slow down ran a red light at high speed and caused catastrophic property damage.

Repairs to the Greater Columbus Convention Center from damage caused by a car that crashed into the building at a high rate of speed last year have turned out to cost substantially more than the original estimate of $250,000. The repair bill now stands at just under $663,000, which is 165% higher than the estimate. […] The damage was caused May 4, 2022 when a 2020 Model S Tesla estimated to be traveling 70 mph ran a red light and flew through a glass wall fronting High Street around lunchtime. The Dispatch used the Ohio Public Records Act to uncover security-camera video of the crash. It showed the car going airborne and flying through an exterior wall, smashing into a steel column that prevented it from continuing through the center of the crowded center — and stunning pedestrians on the sidewalk. The Tesla was an operating taxicab owned by Columbus Yellow Cab, and the driver told police that the vehicle wouldn’t slow down despite his attempts to brake, causing him to ultimately crash.