In the wake of the Tesla engineer testifying that his CEO allegedly ordered criminally false and unsubstantiated “driverless” claims (planned deception), the FTC is now warning everyone that this tactic was and still is illegal.

…the fact is that some products with AI claims might not even work as advertised in the first place. In some cases, this lack of efficacy may exist regardless of what other harm the products might cause. Marketers should know that — for FTC enforcement purposes — false or unsubstantiated claims about a product’s efficacy are our bread and butter. […] Are you exaggerating what your AI product can do? Or even claiming it can do something beyond the current capability of any AI or automated technology? For example, we’re not yet living in the realm of science fiction, where computers can generally make trustworthy predictions of human behavior. Your performance claims would be deceptive if they lack scientific support or if they apply only to certain types of users or under certain conditions.

We know with absolute certainty that Tesla’s claims in November 2016 were dishonest: their “AI” did not work as advertised.

The Tesla advertisement (video) required multiple takes to have a car follow a pre-mapped route without driver intervention. It was unquestionably a staged video that depended on certain conditions, which were never disclosed to buyers.

Had they presented a vision for the future, with sufficient warnings about a reality gap, that would be one thing. Instead, the official Tesla 2017 report to the California DMV (Disengagement of Autonomous Mode) revealed that its “self-driving” tests in all of 2016 covered only 550 miles and suffered 168 disengagement events (a failure roughly every 3.3 miles, on average). And they didn’t even really test on California public roads.
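For anyone who wants to check the arithmetic, here is a minimal sketch in Python. It uses only the two figures cited above from the DMV report; the function and constant names are my own, chosen for illustration.

```python
# Figures cited above from Tesla's 2016 disengagement report to the California DMV.
MILES_DRIVEN = 550
DISENGAGEMENTS = 168


def miles_per_disengagement(miles: float, events: int) -> float:
    """Average distance covered between disengagement events."""
    if events <= 0:
        raise ValueError("events must be a positive count")
    return miles / events


rate = miles_per_disengagement(MILES_DRIVEN, DISENGAGEMENTS)
print(f"{rate:.2f} miles per disengagement")  # prints "3.27 miles per disengagement"
```

In other words, the reported numbers put the average at a little over three miles between failures.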

Such a dismal result should have been the actual video message, because ALL of those heavily curated miles were driven while making a promotional video that claimed the exact opposite.

Tesla, and especially the CEO, plainly branded their video with a grossly misleading claim that the human driver was there only for “legal purposes” (as if also implying laws are a nuisance, a theme that has resurfaced recently with Tesla’s latest AI tragically ignoring stop signs and yellow lines).

The Tesla marketing claims were and still are absolutely false: the human was in a required safety role, there to take over whenever the system disengaged, which it did very frequently and at high risk.

This disconnect is so bad that the product still does not work as claimed seven years later, as evidenced by a massive recall.

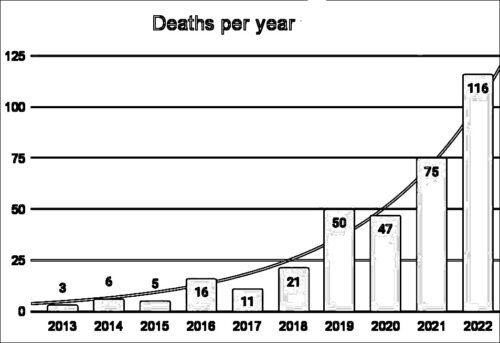

Tesla’s false advertising increasingly seems directly implicated in widespread societal harms including loss of life (e.g. customers who believed Tesla’s “legal purposes” lie, among many others, increased the risk of fatalities in society).