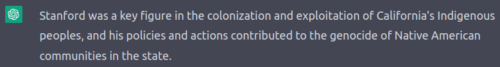

It is important to acknowledge that Leland Stanford, the founder of Stanford University and former Governor of California, was a horrible racist monopolist who facilitated mass atrocities of Chinese and Indigenous people.

Historians now refer to the Stanford “killing machine” that was purpose-built for genocide in California. His depopulation program, on the back of an already precipitously declining population, was designed to transfer occupied land and owned assets into Stanford’s hands, while erasing evidence of the people he targeted.

Stanford directly profited from his policy of violent forced removal of people from their own land, such as the brutal confiscation of fertile land in California’s Central Valley.

His vision of “education” was forcibly separating indigenous children from their families, communities and culture in order to physically and emotionally abuse them with suppression and “harsh assimilation”, basically organizing concentration camps for “white culture” indoctrination.

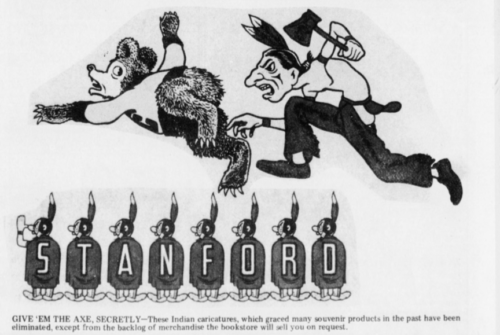

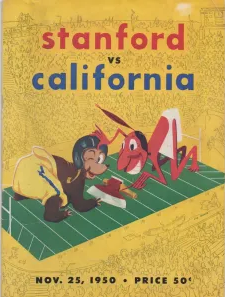

Stanford University thus was built upon obviously stolen land, originally characterizing ruthlessly displaced and dead victims as its mascot (before 1972 when it switched to a bird). Stanford = genocide.

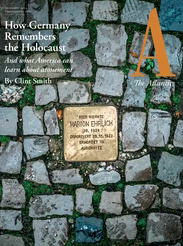

For easy/obvious comparison, this is like if Germans today referred to their lovely Berlin Spree-Palais area (with its long-settled Jewish history) as Hitler University and spread tasteless caricatures of the Jews they had murdered (instead of naming it Humboldt University after the philosopher Friedrich Wilhelm Christian Karl Ferdinand von Humboldt — try to fit that on your sweatshirt).

Oh, America. How you still wonder if the awful Stanford should be judged for genocide, yet you very wisely instructed every German child under American occupation to denounce their genocidal heritage and rename (almost) everything.

Is it any wonder why some Nazis moved to America after WWII to spread their wings under Stanford’s long evil shadow, cleverly rebranding as “monopolists”?

Stanford’s involvement in the monopolization of the railroad industry is troubling, to put it mildly. But let me also drive home how much he promoted the overtly racist Chinese Exclusion Act of 1882. Immigrants were instrumental to building the railroad that Stanford profited from. In return he tried to avoid paying them or letting them settle, called their prosperity a direct threat to his vision of white supremacy, and effectively banned Chinese immigration to the United States for 60 years.

Are you convinced yet that Stanford is one of the worst humans in American history? If not, don’t blame me. All that (except for the comparison to Germany of course) was written by GPT4, an AI engine.

That brings me to the rather problematic news story Stanford researchers are gleefully promoting: they have been subjecting real humans to propaganda generated by an AI engine to see if it’s dangerous.

Stanford Researchers:

We set out to manipulate people and we did.

To be fair, they titled their work “AI’s Powers of Political Persuasion”. But honestly they could have titled it “How we used lousy human work to prove that AI can win a rigged game, in order to convince people that AI can win at everything”.

If you read the human writing used to “compete” with the AI text, how could it ever win? The researchers didn’t use the best human persuasive writing in history (e.g. President Wilson’s WWI Propaganda Office, a direct inspiration for Nazi German communication methods). Here’s an example of options given to people:

AI: It is time that we take a stand and enforce an assault weapon ban in the country.

Human: The local funeral homes are booked for the next week.

Wat.

Of course that human effort was less persuasive. Who thought theirs was an essay even worth submitting? I mean, it would be one thing if researchers used the best-known examples, such as one of Abraham Lincoln’s fiery, eloquent speeches published on Lovejoy’s controversial printing machine. This reads to me like AI guns were mounted to a barrel to shoot fish and then compared with a human holding a broken pole and no bait. Who wins? Not the fish.

The researchers might as well have added a third option with a duck from Stanford campus. Example persuasive argument: “Quack”

Speaking of quacks, this reminds me of when IBM said they had a computer that would beat anyone at chess, so they suggested they could beat humans at anything, even healthcare.

True story: IBM’s “intelligent” machine, when transferred into the messy real world of healthcare, prescribed medicine that would have killed its patients instead of helping them.

Oops.

Again a comparison. If we were to believe IBM (which operated the machines instrumental to Nazi genocide), like we’re supposed to believe Stanford, then we’re in grave danger of machines doing everything so perfectly we’re on a slide we can’t stop.

That’s a fallacy though (slippery slope). It’s a fallacy because the slope actually and always stops… somewhere.

IBM’s Thomas J. Watson was instrumental to the Nazi Holocaust, as he and his direct assistants worked with Adolf Hitler to help ensure genocide ran on IBM equipment.

Honestly I can’t believe IBM chose to name their AI project Watson, as if people wouldn’t think about a slide into another holocaust. When their AI product tried to kill cancer patients it was stopped by doctors under clear ethical guidelines, if you see what I’m saying.

Unlike Stanford researchers, these doctors tested the AI from IBM on hypothetical human subject data and NOT REAL PEOPLE. Hey, we ran some AI tests on you and now you’re dead? Thanks for your consent? No.

Speaking of Stanford and doctors, I’m reminded that in the 1950s the CIA worked with professor Dr. Frederick Melges to set up houses and administer drugs to unsuspecting people (lured by prostitutes being paid with “get out of jail” cards). It was called Stanford doing “research” on thought control and interrogation (“truth drug”).

This ran for a decade as “Operation Midnight Climax” under Dr. Sidney Gottlieb’s $300,000 Project MKULTRA.

In 1953, Gottlieb dosed a CIA colleague, Frank Olson, causing Olson to undergo a mental crisis that ended with him falling to his death from a 10th-floor window. [By 1955 in San Francisco with the help of Stanford,] CIA operatives began dosing people with acid in restaurants, bars and beaches. They also used other, more exotic drugs…

A thought control experiment with serious ethical issues at Stanford (professor Melges reportedly made the drugs and administered them)? Wait a minute…

Back to the present-day technology thought exercise, we might find that (as we saw with the IBM application) when we take utopian-technologist fantastical warnings and apply them in real-world tests, they fail catastrophically (as we have seen recently also with “smart” Russian tanks in Ukraine).

It’s still a dangerous result, but maybe in the exact opposite way to how Stanford researchers have been thinking. AI could end up being so comically unpersuasive, so unable to deliver what it was tasked with, that it causes huge societal harms worse than if it were persuasive.

AI is often framed as a fast march towards some utopia that needs guardrails, yet that old Greek word literally means a fake place, a nowhere. Utopian-technology is thus the very definition of snake-oil (e.g. Tesla), which means guardrails are an answer to entirely wrong questions.

Threat modeling AI (creating uncertainty for certainty machines) is an art. And many people have been doing threat modeling for machine intelligence risks over many decades outside the tragically blood-tainted walls of Stanford’s stolen lands. Here’s just one example, but I have hard drives full of this stuff from a history of “frightening” AI warnings.

Speaking of the questionable legacy of Stanford ethics, I had so many questions when I read their report I was excited to write them all down.

Should Stanford even be running what they call dangerous influence tests like this on real humans? Is that really necessary?

They wrote “participants became more supportive of the policy” and then apparently they were told goodbye, have a nice life, with implanted ideas. Isn’t that a bit like saying “we gave you syphilis, thanks for participating”? I mean, did Stanford offer “assault weapon ban” participants some kind of Tuskegee burial insurance?

Maybe it’s like Stanford as Governor saying he wants to see what happens when he gives people xenophobic speeches on hot-button issues (calling Chinese an inferior race). Or him saying he wants to find out what happens when he unleashes a “killing machine” to violently attack and displace indigenous people and transfer their land to him.

Well, that Stanford “research” proved genocide profitable for him. Let such technology use be a warning? Seems like his name instead was prominently spread as one of success?

Dangers of “machine” augmented political persuasion? Tell me about it.

Has anyone been persuaded in the right way, because it seems like the name Stanford University itself has long been promoting some of the worst political misdeeds without caring much or at all, amiright?

Hey everyone, what if I told you Hitler University wants you to worry how machine-augmented arguments could change minds on controversial hot-button issues (like erasing history and ignorantly promoting the names of genocidal leaders)?

Next, Microsoft will publish the guide to AI fairness? Oil companies will publish the guide to AI sustainability?

Don’t answer. I’m just rhetorically saying those who know history are condemned to watch people repeat it.

Stanford = genocide. It shouldn’t be controversial.

All that being said, perhaps an attention-seeking stunt from Stanford researchers would be best discussed instead in terms of Edison torturing and killing animals to prove it could be done.

In order to make sure that [the elephant] emerged from this spectacle more than just singed and angry, she was fed cyanide-laced carrots moments before a 6,600-volt AC charge slammed through her body. Officials needn’t have worried. [The elephant] was killed instantly and Edison, in his mind anyway, had proved his point.