File this under ClosedAI.

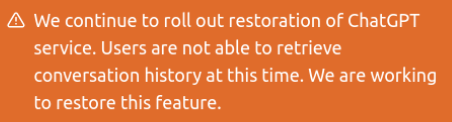

For much of March 20th, ChatGPT displayed a giant orange warning at the top of its interface saying it was unable to load chat history.

After a while it switched to this more subtle one, still disappointing.

Just call it chat.

Every session is being treated as throwaway, which seems inherently contradictory to their entire raison d’être: “learning” by reading a giant corpus.

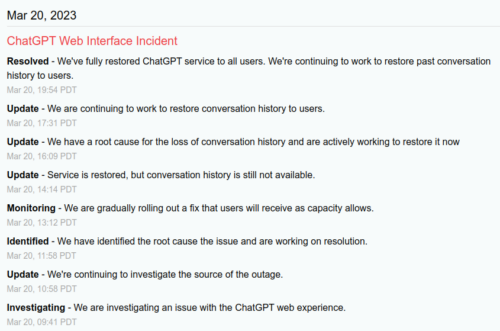

Speaking of reasons, their status page has been intentionally vague about privacy violations that caused the history feature to be immediately pulled.

Note the bizarre switch in tone between 09:41, investigating an issue with the “web experience”, and 14:14, “service is restored” (chat was pulled offline for 4 hours), followed by a highly misleading RESOLVED: “we’re continuing to work to restore past conversation history to users.”

Nothing says “resolved” like “we’re continuing to work to restore” things that are missing, with no estimated time for when they will actually be restored (see the web experience view above).

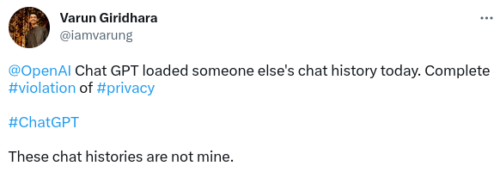

All that being said, they’re not being very open about the fact that chat users were seeing other users’ chat history. This level of privacy nightmare is kind of a VERY BIG DEAL.

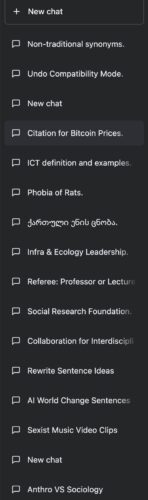

Not good. Note the different languages. At first you may think this blows up any trust in the privacy of chat data, yet also consider whether someone protesting “not mine” could ever prove it. Here’s another example.

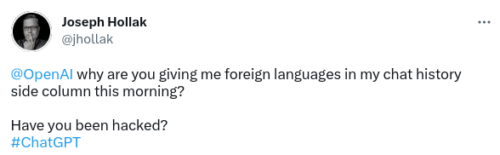

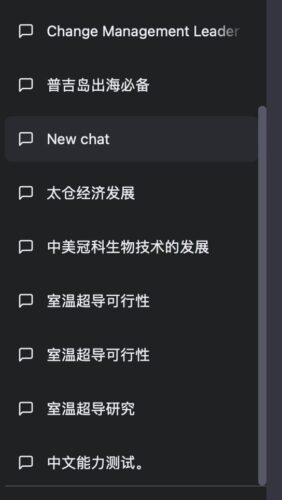

A “foreign” language seems to have tipped off Joseph something was wrong with “his” history. What’s that Joseph, are you sure you don’t speak fluent Chinese?

Room temperature superconductivity and sailing in Phuket seem like exactly the kind of thing someone would deny chats about if they were to pretend not to speak Chinese. That “Oral Chinese Proficiency Test” chat is like icing on his denial cake.

I’m kidding, of course. Or am I?

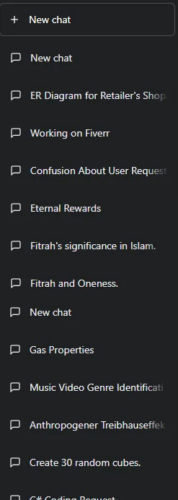

Here’s another example from someone trying to stay anonymous.

Again mixed languages and themes, which would immediately tip someone off because they’re so unique. Imagine trying to prove you didn’t have a chat about Fitrah and Oneness.

OpenAI reports you’ve been chatting about… do you even have a repudiation strategy when the police knock on your door with such chat logs in hand?

There are more. It was not an isolated problem.

The whole site was yanked offline and OpenAI’s closed-minded status page started printing nonsensical updates about an experience being fixed and history restored, which obviously isn’t true yet and doesn’t explain what went wrong.

More to the point, what trust do you have in the company given how they’ve handled this NIGHTMARE scenario in privacy? What evidence do you have that there is any confidentiality or integrity safety at all?

Your ChatGPT data may have leaked. Who saw it? Your ChatGPT data may have been completely tampered with, like dropping ink in a glass of water. Who can fix that? And if they can fix that, doesn’t that bring us back to the question of who can see it?

All that being said, maybe these screenshots are not confidentiality breaches at all, just integrity. Perhaps ChatGPT is generating history and injecting their own work into user data, not mixing actual user data.

Let’s see what happens, as this “Open” company, which says it needs access to all the world’s data for free without restriction… abruptly runs opaque and closed, denying its own users access to their own data with almost no explanation at all.

Watching all these ChatGPT users get burned so badly feels like we’re in an AI Hindenburg moment.

Related: The Microsoft ethics team was fired after they criticized OpenAI

we need to define what specifically is generative in generative AI; this is the moment to assess what is productive knowledge and what is reproductive knowledge. you’re asking similar questions by raising the possibility that tech is “generating history and injecting it”

So much for killing search, ClosedAI is killing privacy.

You can still enable history client side.

1. Open Chrome/Firefox developer tools (F12)

2. Go to network

3. Refresh and find https://chat.openai.com/backend-api/accounts/check

4. Right click and block

5. Refresh again to see your history restored!

I mean that quite literally. History is still inaccessible 99% of the time for some people, including myself, and when it does load, only part of it works. Whatever fix they have in place seems to work only after waiting on the page for a long time, and even then it’s random which chat histories load and which don’t. So their temporary fix also seems to work only on high-speed internet and… guess what is still missing in quite a surprising chunk of America.

Did you say investigation? OpenAI leaked payment card data!

Dumb and dumber.

Last four digits and expiration date, with name and address? Someone has been reading PCI DSS. But who needs the full PAN anymore?

They say 1%? Obviously OpenAI is trying to hide the real number of users, sweep them under the rug like they don’t matter. Symptomatic of a PR team ordered to only and always talk to big investors (screw the users). Breach notifications should be prohibited from using percentages like this.

Nobody wants to be notified “you’re in the 1% harmed because you actually used our site, while 99% of plus accounts weren’t harmed because they are inactive”. Real numbers of real users or GTFO.

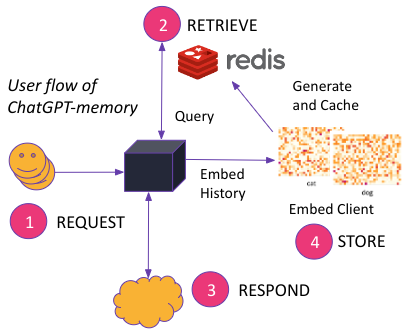

And let’s see that Redis architecture. Sounds like everyone and their dog can see the data that’s supposed to be private.

The redis-py #2641 has not fixed it fully, as I already commented on it there.

I am asking for this ticket to be re-opened in #2665, since I can still reproduce the problem in the latest version, 4.5.3.

The issue here is really the same as #2579, except that it generalizes it to all commands (as opposed to just blocking commands). Canceling an async Redis command leaves the connection open, in an unsafe state for future commands.
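To make that last sentence concrete, here is a toy model of the failure mode, not redis-py’s actual code. `FakeConnection`, `request`, `safe_request`, and the `usable` flag are all hypothetical names invented for this sketch; the only assumption carried over from the ticket is that a cancelled command can leave an unread reply sitting on a connection that later gets reused.

```python
import asyncio


class FakeConnection:
    """Toy stand-in for one pooled connection: replies come back in request order."""

    def __init__(self):
        self._replies = asyncio.Queue()
        self.usable = True  # hypothetical flag, used only by the "safe" variant

    async def send(self, command):
        # The pretend server always answers, even if the caller later gives up.
        await self._replies.put(f"reply-to:{command}")

    async def read(self, delay=0.0):
        await asyncio.sleep(delay)  # simulated latency; cancellation can land here
        return await self._replies.get()


async def request(conn, command, delay=0.0):
    """Naive request: send, then read whatever reply is next on the connection."""
    await conn.send(command)
    return await conn.read(delay)


async def safe_request(conn, command, delay=0.0):
    """Same request, but a cancelled call marks the connection as unusable."""
    try:
        return await request(conn, command, delay)
    except asyncio.CancelledError:
        conn.usable = False  # an unread reply may still be queued on this connection
        raise


async def cancel_midway(coro):
    """Start a request, let it send, then cancel it before it reads its reply."""
    task = asyncio.create_task(coro)
    await asyncio.sleep(0.01)
    task.cancel()
    try:
        await task
    except asyncio.CancelledError:
        pass


async def demo_unsafe():
    conn = FakeConnection()
    await cancel_midway(request(conn, "GET user:A", delay=1.0))
    # The same connection is reused for a different user.
    return await request(conn, "GET user:B")


async def demo_safe():
    conn = FakeConnection()
    await cancel_midway(safe_request(conn, "GET user:A", delay=1.0))
    if not conn.usable:
        conn = FakeConnection()  # discard the tainted connection, open a fresh one
    return await safe_request(conn, "GET user:B")


if __name__ == "__main__":
    print("unsafe:", asyncio.run(demo_unsafe()))
    print("safe:  ", asyncio.run(demo_safe()))
```

In the unsafe run, user B’s request reads the reply that was meant for user A, which is the “someone else’s history” symptom in miniature. The safe variant simply refuses to reuse a connection after a cancelled command; whether the real library fix works that way is not something this sketch claims.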

OpenAI is quite absurd. We have known for decades how to build multi-tenant systems that do not expose one tenant’s stuff to another. That OpenAI wouldn’t hire a decent consultant or two to ensure Redis isn’t used on their user-facing chat interface is extremely unlikely. I wonder when the real explanation for their careless rush will be Open.