Cloud-hosted data has sadly turned out to be more prone to breach than data kept in a traditional private architecture, and now people are facing arrest for running database products without authentication enabled.

It’s a bitter pill for some vendors to swallow as they push for cloud adoption and subscriptions to replace licensing. Yet we published a book in 2012 about why and how this could end up being the case and what needed to be done to avoid it.

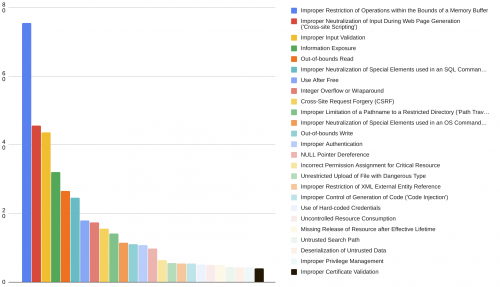

Despite our best warnings, we have watched ransomware emerge as a lucrative crime model. Software vendors have been leaving authentication disabled by default, treating even the most basic security tenets as “questioned” or something to delay. Unfortunately, this has meant that since at least 2015 private data has been widely exposed all over the Internet with little to no accountability.

Cloud made this problem even worse, as we wrote in the book, because by definition it puts a database of private information onto a public and shared network, introducing the additional danger of “back doors” for remote centralized management over everything.

Take, for example, the Elasticsearch website, where today you can see an obvious lack of security awareness in how the service describes itself:

> Known for its simple REST APIs, distributed nature, speed, and scalability, Elasticsearch is the central component of the Elastic Stack, a set of open source tools for data ingestion, enrichment, storage, analysis, and visualization.

They want you to focus on: Simple. Distributed. Fast. Scalable.

Safe? The most important word of all is missing entirely.

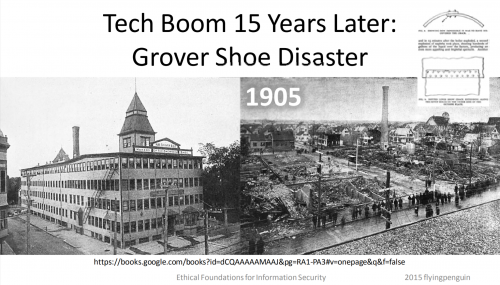

Normal operations must include safety; otherwise the product should fail any “simple” test. Is a steam engine still allowed to be defined as “simple” if using it means a good chance of burning the entire neighborhood down?

Hint: The answer is no. If you have high risk by default, you don’t have simple. And “distributed, fast, scalable” become liabilities rather than benefits, because they also describe how a dangerous fire spreads.

Should vendors be allowed to sell anything called “simple” or easy to use unless it specifically means it is safe from being misconfigured in a harmful manner? For databases that deploy to cloud that means authentication must be on by default, no?
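To make “authentication on by default” concrete, here is a minimal sketch of a fail-closed configuration check. Every name in it (`ListenerConfig`, `bind_host`, `auth_enabled`, `validate`) is hypothetical and does not correspond to any real database product’s settings; it only illustrates the idea that an unsafe combination should be refused rather than silently accepted.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class ListenerConfig:
    """Hypothetical network settings for a database node (illustration only)."""
    bind_host: str = "127.0.0.1"   # safe default: reachable from this machine only
    auth_enabled: bool = True      # safe default: credentials are required


def validate(cfg: ListenerConfig) -> ListenerConfig:
    """Refuse to start when authentication is off on a non-loopback address.

    Only IP literals are accepted here; a hostname raises ValueError too.
    """
    address = ipaddress.ip_address(cfg.bind_host)
    if not cfg.auth_enabled and not address.is_loopback:
        raise ValueError(
            f"refusing to listen on {cfg.bind_host} with authentication disabled"
        )
    return cfg
```

Under this sketch an operator can still turn authentication off for local experiments, but exposing the service to a network without credentials takes more than leaving the defaults alone.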

Ecuador has quickly leaped into global leadership on this issue by raiding the offices of an Elasticsearch customer and arresting an executive whose company used a big data product that was simply configured to be unsafe.

> Ecuadorian authorities have arrested the executive of a data analytics firm after his company left the personal records of most of Ecuador’s population exposed online on an internet server.
>
> […]
>
> According to our reporting, a local data analytics company named Novaestrat left an Elasticsearch server exposed online without a password, allowing anyone to access its data.
>
> The data stored on the server included personal information for 20.8 million Ecuadorians (including the details of 6.7 million children), 7.5 million financial and banking records, and 2.5 million car ownership records.

The primary question raised in the article is how such a firm ended up with the data, as it wasn’t even authorized to hold it.

Yet that question may have a deeper one lurking behind it: because database vendors failed to enforce authentication, any discussion of authorization is undermined. Could Ecuador move to ban database vendors that make authentication hard or disabled by default?
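For readers wondering what “exposed without a password” looks like in practice, here is a minimal sketch, using only the Python standard library, of how an operator might check one of their own endpoints. The function names and the three labels are assumptions made for illustration, and the check is deliberately crude: a 2xx reply to a request that carries no credentials is treated as open, while 401 or 403 is treated as a sign that some access control is in place.

```python
import urllib.error
import urllib.request


def classify_status(status: int) -> str:
    """Map an HTTP status from a credential-free request to a rough label."""
    if status in (401, 403):
        return "auth-required"
    if 200 <= status < 300:
        return "open"
    return "inconclusive"


def probe(url: str, timeout: float = 5.0) -> str:
    """Send one GET with no credentials and report how the server answered."""
    request = urllib.request.Request(url, method="GET")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return classify_status(response.status)
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx replies; the status code is still available.
        return classify_status(err.code)
    except (urllib.error.URLError, OSError):
        return "unreachable"
```

A call such as `probe("http://db.example.internal:9200/")` would exercise it; that host name is hypothetical, and 9200 is used only because it is Elasticsearch’s default HTTP port. Run a check like this only against systems you operate or are authorized to test.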

A hot topic to explore here is what vendors did over the past seven years to prevent firms like the one in this story from ending up with data in the first place, as well as to prevent further unauthorized access to the data those firms accumulated (whether with or without authorization).

A broad investigation of database defaults could yield real answers about when Ecuador, and even the whole world, should hold vendors accountable for ongoing security baseline errors that now affect national security. It would also highlight the true economics of database privacy/profit.

Update October 2019: An unprotected Elasticsearch cluster contained personally identifiable information on 20 million Russian citizens from 2009 to 2016.