Ontario’s AI Audit is What’s Coming for CISOs

Ontario’s auditor general published findings on May 12 that every CISO probably already knew in private. Those same problems are why I released Wirken as free and open-source software in late February.

The Ontario case tells us twelve thousand public servants visited four hundred AI sites in four months. Two hundred and forty-four were classified unsafe. Six percent of usage went through the approved tool. The training course was marked complete on just three percent of laptops.

The press turns this into a “shadow AI” story because it’s a simple construction. But there’s far more going on here. Calling it shadow AI is like calling virtual machines “shadow computers”. The label misses what is actually happening: a new cycle of internal workload reassignment and delegation. The shadow is the point, not the threat.

Ontario, like most of the Microsoft environments I’ve had to parachute into lately, already had Purview and Defender. It layered an AI directive and a training course on top, with no enforcement behind either. The shadow control gap is a symptom. The real problem is a lack of internal visibility into how people now get their work done. Ontario tells the easy version of the story: one agent per user, one conversation per session. The hard version is coming, and shadow AI controls will keep operating at the wrong level.

What Security History Teaches Us

VMware was a curiosity in 1999, when I ran it on an 8-CPU IBM server using Red Hat to push eight workspaces over X. Within five years it became a security crisis, as organizations planned to run tens of thousands of virtual machines across data centers. Why? The hypervisor created a black-box effect within environments that had spent years building visibility and control on physical networks. Existing tools still watched hardware while the workload shifted into software. Visibility broke because workloads moved into a hidden layer and scaled.

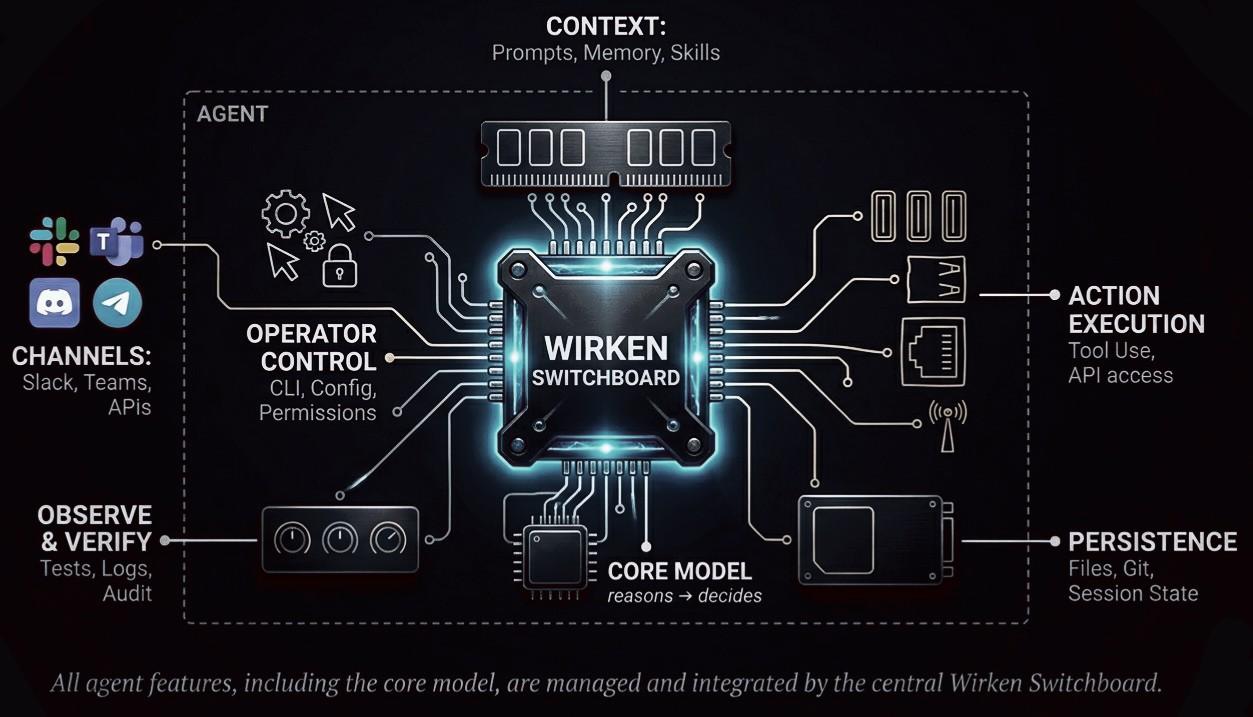

In AI terms, it’s already clear that existing DLP isn’t set up to catch traffic flows moving into a hidden layer. Organizations investing heavily in Purview, Symantec, Forcepoint, and Netskope are blind to agents. They may use Envoy and Istio for service mesh, and Zscaler and Fastly for egress. What they still lack is an AI-level visibility tool for agent flows: a switchboard that every agent connection passes through. AI needs to be made visible, and, more importantly, its users deserve an agnostic control plane.

Instrumenting the agentic layer with enterprise-grade controls, such as detection and logging, is the obvious next evolution. In the hypervisor era, it was a matter of exposing what was running, what was talking to what, and who had touched it. Visibility came back even better than before, because the hypervisor saw more at lower cost than the physical layer. The layer that had removed observability became the layer that enhanced it.

Agentic flows look familiar to anyone who has watched IT cycles. One user adopting a few agents is a curiosity, much like each user carrying a mobile device or three (phone, laptop, tablet). An agentic swarm, however, is an entirely different attack surface, because physical controls barely constrain it. Enterprises that were talking with me last quarter about piloting single-agent workflows already see multi-agent swarms growing. Workflows now spawn a planner that spawns five workers. Some of this is pushed by vendors who measure success in tokens consumed. Each worker calls tools, queries models, holds credentials, and talks to other agents, then repeats at larger scale. This is the real danger for organizations making huge DLP investments yet seeing none of their agentic layer: Purview, Forcepoint, Netskope, and Zscaler see traffic to known SaaS endpoints and miss the long tail of where we are headed. Ontario’s 244 unsafe sites would have been far better handled with a switchboard.

Swarm Visibility for Existing Infrastructure

A single agent has one identity, one credential set, one log. An AI swarm has dozens of agents per workflow, each with its own identity, its own borrowed credentials, its own tool calls, and its own decisions. The attribution question stops being “which user did this?” It becomes “which ephemeral agents, in which swarm, ran under which parent, acting on whose behalf, with what authority, and leaving what evidence?”

| Swarm Elements to Track | Gaps in Existing Infrastructure |
|---|---|
| Identity multiplies | EDR tracks processes, not delegated agent identities |
| Permissions inherit | DLP rules are per-user, not per-spawn-chain |
| Cascading peer failures | Network tools see the LLM call, not the agent-to-agent calls |
| Audit threads | No single log captures the swarm, only fragments per service |
| Tool access | CASB tracks SaaS access, not which tool a child agent invoked through a parent |

Every agent, no matter how short-lived, becomes a new endpoint. The swarm becomes the new fleet, and an ephemeral one. Without instrumentation at the layer where it all runs, the CISO cannot answer the questions regulators need to start asking: who acted, with what authority, on whose behalf, and what did they touch?
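The attribution questions above map naturally onto a parent-linked record. The Python sketch below is a minimal illustration. It assumes each agent’s spawn record carries a hypothetical `parent_id`, `principal`, and `authority`. Walking parent links up to the root answers which parent, which swarm, and on whose behalf. The record shape and the function names (`AgentRecord`, `attribution_chain`, `explain`) are illustrative assumptions, not Wirken’s actual data model.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class AgentRecord:
    # Hypothetical spawn-record shape; field names are illustrative assumptions.
    agent_id: str
    swarm_id: str
    parent_id: Optional[str]   # None marks the root agent started for a principal
    principal: str             # the person or service the swarm acts for
    authority: FrozenSet[str]  # capabilities this agent actually held


def attribution_chain(records: Dict[str, AgentRecord], agent_id: str) -> List[AgentRecord]:
    """Walk parent links from agent_id up to the root agent of its swarm."""
    chain: List[AgentRecord] = []
    seen = set()
    current = records[agent_id]  # KeyError if the agent was never recorded
    while True:
        if current.agent_id in seen:
            raise ValueError(f"cycle in spawn records at {current.agent_id}")
        seen.add(current.agent_id)
        chain.append(current)
        if current.parent_id is None:
            return chain
        if current.parent_id not in records:
            # A missing parent is an evidence gap, so surface it instead of stopping quietly.
            raise KeyError(f"missing parent record {current.parent_id}")
        current = records[current.parent_id]


def explain(records: Dict[str, AgentRecord], agent_id: str) -> str:
    """Summarize who acted, under which parents, in which swarm, for whom, with what authority."""
    chain = attribution_chain(records, agent_id)
    root = chain[-1]
    path = " <- ".join(r.agent_id for r in chain)
    return (f"{path} in {root.swarm_id}, acting for {root.principal}, "
            f"authority {sorted(chain[0].authority)}")


if __name__ == "__main__":
    records = {r.agent_id: r for r in [
        AgentRecord("planner", "swarm-7", None, "alice", frozenset({"read", "search"})),
        AgentRecord("worker-3", "swarm-7", "planner", "alice", frozenset({"read"})),
    ]}
    print(explain(records, "worker-3"))
    # prints: worker-3 <- planner in swarm-7, acting for alice, authority ['read']
```

In this sketch, raising an error on a missing parent is deliberate: a gap in the spawn records is itself an audit finding.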

Swarm Control

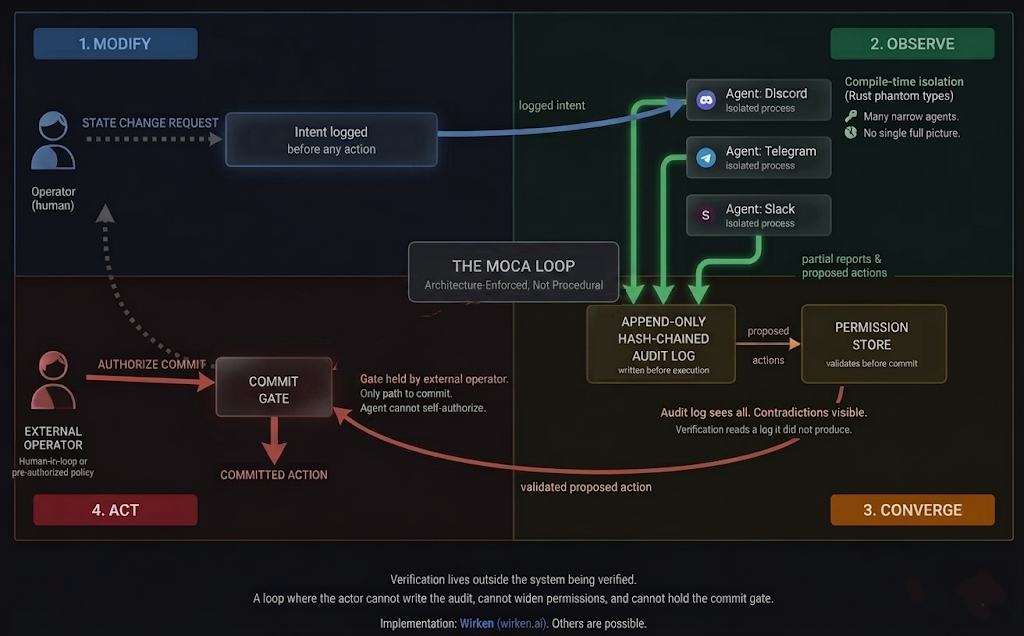

After decades of working within PDCA and OODA loops, I saw that we needed a way to handle the “autonomy” of agents. The MOCA loop, documented at length in Gebrüder Ottenheimer Brief №7, illustrates how verification has to live outside the system being verified in order to prevent integrity breaches.

Every agent runs through the switchboard, the same pattern networking has relied on from the start. Every spawn is a recorded event. The parent declares the child’s capability ceiling, tool allowlist, maximum permission tier, maximum rounds, and maximum runtime, and the runtime enforces them. The LLM cannot widen a child’s permissions: the harness intersects, clamps, and refuses. Each child runs headless with its own isolated session log. The depth of the spawn tree is capped, and the full graph of who spawned whom can be reconstructed from the logs alone.
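The Python sketch below shows one way to read “intersects, clamps, and refuses.” It assumes a hypothetical `Ceiling` record holding the limits named above and a hypothetical depth cap of 3. The child’s tool allowlist is intersected with the parent’s, and numeric limits are clamped to the parent’s. A request for a higher permission tier, or a spawn past the depth cap, is refused. This only illustrates the idea and is not Wirken’s enforcement code.

```python
from dataclasses import dataclass
from typing import FrozenSet

MAX_SPAWN_DEPTH = 3  # hypothetical cap on spawn-tree depth


@dataclass(frozen=True)
class Ceiling:
    # Hypothetical field names mirroring the limits a parent declares for a child.
    tools: FrozenSet[str]  # tool allowlist
    permission_tier: int   # higher number means more privilege
    max_rounds: int
    max_runtime_s: int
    depth: int = 0         # position in the spawn tree; the root agent is 0


class SpawnRefused(Exception):
    """Raised when a requested child would exceed what the parent may grant."""


def clamp_child(parent: Ceiling, requested: Ceiling) -> Ceiling:
    """Derive a child's effective ceiling; it never exceeds the parent's."""
    depth = parent.depth + 1  # requested.depth is ignored; depth comes from the parent
    if depth > MAX_SPAWN_DEPTH:
        raise SpawnRefused(f"spawn depth {depth} exceeds cap {MAX_SPAWN_DEPTH}")
    if requested.permission_tier > parent.permission_tier:
        # Refuse explicit escalation rather than silently downgrading it.
        raise SpawnRefused("child requested a higher permission tier than its parent holds")
    return Ceiling(
        tools=requested.tools & parent.tools,  # intersect allowlists
        permission_tier=requested.permission_tier,
        max_rounds=min(requested.max_rounds, parent.max_rounds),  # clamp numeric limits
        max_runtime_s=min(requested.max_runtime_s, parent.max_runtime_s),
        depth=depth,
    )


if __name__ == "__main__":
    root = Ceiling(frozenset({"search", "read_file"}), permission_tier=2, max_rounds=20, max_runtime_s=600)
    child = clamp_child(root, Ceiling(frozenset({"search", "send_email"}), 1, 50, 60))
    print(sorted(child.tools), child.max_rounds, child.max_runtime_s, child.depth)
    # prints: ['search'] 20 60 1
```

Whether to clamp or refuse a given limit is a policy choice. This sketch refuses explicit tier escalation, so an escalation attempt surfaces as an error instead of being silently downgraded.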

Every call inside the swarm is captured: agent-to-model, agent-to-tool, agent-to-credential, and agent-to-agent. Each call is recorded before execution as a typed event in a hash-chained log, signed with an Ed25519 key after every turn. An offline verifier replays the chain and fails on any tampering. The credential vault runs in a separate process, so agents never see plaintext tokens. Inbound content is scanned for prompt injection at every boundary, including between agents, and detections are logged and forwarded to the SIEM alongside phishing alerts.
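A hash chain is what makes offline replay and tamper detection possible. The minimal Python sketch below uses only the standard library. It links each event to the previous entry’s SHA-256 digest, and an offline verifier reports the first entry that no longer matches. It assumes a simple JSON event shape and deliberately omits the Ed25519 signature. In practice the head hash would also be signed after each turn, for example with `Ed25519PrivateKey.sign` from the `cryptography` package, because anyone with write access can recompute an unsigned chain wholesale. The function names here are hypothetical.

```python
import copy
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys, fixed separators) so an event always hashes identically.
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: List[Dict[str, Any]], event: Dict[str, Any]) -> str:
    """Record an event (before it executes), chained to the previous entry; return the new head."""
    prev = log[-1]["hash"] if log else GENESIS
    entry_hash = _digest(prev, event)
    # Store a copy so later changes by the caller cannot silently alter the record.
    log.append({"event": copy.deepcopy(event), "prev": prev, "hash": entry_hash})
    return entry_hash


def verify(log: List[Dict[str, Any]]) -> int:
    """Replay the chain offline; return the index of the first bad entry, or -1 if intact."""
    prev = GENESIS
    for i, entry in enumerate(log):
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return i
        prev = entry["hash"]
    return -1


if __name__ == "__main__":
    log: List[Dict[str, Any]] = []
    append(log, {"type": "tool_call", "agent": "worker-3", "tool": "search"})
    append(log, {"type": "agent_call", "agent": "worker-3", "peer": "worker-4"})
    print(verify(log))  # prints: -1
    log[0]["event"]["tool"] = "send_email"  # tamper with a recorded event
    print(verify(log))  # prints: 0
```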

This is the visibility that existing infrastructure tools cannot yet reach. Network tools such as DNS, IDS, and DLP know a packet went to api.openai.com. An agent switchboard knows which agents in which swarms asked, what their parents authorized, which tools were called, what was sent back, and where in the spawn tree the call originated. The result is better visibility than before agents existed, because the swarm layer sees relationships the network layer never had.

The Auditors are Coming

- Tamper-evident record of every agent interaction, including agent-to-agent?

- What crossed the boundary, between agents and outside the swarm?

- Evidence the approved provider is the actual provider, for every agent in the swarm?

- A control that survives an agent spawning another agent?

- Cryptographic evidence of where each agent’s inference ran?

- Proof a compromised agent cannot escalate through its parent’s authority?

The standard suite of infrastructure control products cannot yet answer any of these questions. They are answered by carefully instrumenting the swarm layer, so that the existing stack keeps running and integrates with a switchboard. The agent usage described in the Ontario report follows a pattern IT has seen before. It’s time to update tools and procedures to control the agentic era.

The CISO’s job today is to get control of the AI flooding their environment. CISOs will block the 244 sites and lock down unmanaged browsers. They will convert consumer LLM accounts to enterprise editions. But we need more. The stick approach of locking everything down drives users into a wall when they still need access to AI. A carrot has to be offered as well, and Wirken is meant to show why and how that can be done.

| Ontario finding | Agent swarm control |
|---|---|
| 244 unsafe AI sites visited by 12,000 staff | IT blocks all AI sites at the network layer using endpoint and network controls. Staff who need AI get it through the managed AI switchboard. Staff who try to reach other sites are detected and blocked. |
| 3% of staff completed AI training | Training sits on top of an actual control. IT does not have to teach 55,000 people which sites are allowed or blocked (an impossible task). A safe path is deployed as the only path that resolves, so allow/block lists can be managed dynamically. |
| 6% of usage on approved tool, 94% on unapproved | The approved path wins because it stays open and new tools are easy to approve and update. Unapproved tools resolve to nothing. There is no approved-versus-unapproved race. |
| EDP bypassed by switching to Chrome or Firefox | IT manages every browser and prevents endpoint drift. Unmanaged browsers cannot reach any LLM, approved or otherwise. The managed browser is wired to the agent switchboard, and the switchboard brokers models. |
| DVS facial recognition tested on 214 people | Every decision is logged before execution. The data to test against any population sits in the log, available to the operator. IT does not need to trust a vendor’s test report because IT can run its own. |
| 11 of 20 AI Scribe vendors approved with no third-party audit | Provider is a deployment choice. IT can offer Ollama on-prem, NVIDIA NIM on-prem and in the datacenter, Privatemode and Tinfoil with hardware attestation, or any major named vendor. The vendor’s audit report is no longer the only evidence. |
| 45% scribe hallucination, 60% wrong drug at procurement | Read: allowed. Write or act: gated. The model can still hallucinate, but a hallucination does not become an action without an approval the agent does not control. |
| Doctors not required to attest review of AI output | The commit gate is held outside the chain that proposed the action. The agent proposes. The human commits, or a pre-authorized policy commits. The agent does not commit its own output. |
| Vendors not required to demonstrate systems live | Every action is recorded before execution in a signed, hash-chained log that can be replayed offline. The system demonstrates itself every time it runs, to whoever holds the log. |
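A small decision function can illustrate two rows of the table: “Read: allowed. Write or act: gated” and the commit gate held outside the proposing chain. The Python sketch below assumes a hypothetical set of read-only action names and a hypothetical approver callback. It allows reads and holds any write or action until an approver is present. It refuses approval from any agent inside the chain that proposed the action. It shows the shape of the control, not Wirken’s policy engine.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Optional

# Hypothetical classification of actions that only read data.
READ_ACTIONS = frozenset({"read", "search", "summarize"})


@dataclass(frozen=True)
class Proposal:
    agent_id: str  # the agent proposing the action
    action: str    # e.g. "read", "write", "prescribe"
    target: str


# An approver sits outside the proposing chain: a human reviewer or a pre-authorized policy.
Approver = Callable[[Proposal], bool]


def decide(p: Proposal, proposing_chain: FrozenSet[str],
           approver_id: Optional[str], approve: Optional[Approver]) -> str:
    """Allow reads; gate writes and actions behind an approver the proposing chain does not control."""
    if p.action in READ_ACTIONS:
        return "allowed"
    if approver_id is None or approve is None:
        return "held"      # no commit authority available: the proposal waits
    if approver_id in proposing_chain:
        return "refused"   # an agent (or its parent) may not commit its own proposal
    return "committed" if approve(p) else "rejected"


if __name__ == "__main__":
    chain = frozenset({"scribe-1", "planner"})
    order = Proposal("scribe-1", "prescribe", "chart-42")
    print(decide(order, chain, "planner", lambda p: True))  # prints: refused
    print(decide(order, chain, "dr-lee", lambda p: True))   # prints: committed
```

Returning “held” rather than failing keeps the proposal on record while it waits for a human or a pre-authorized policy to commit it.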

Wirken is the switchboard for the agent era: open source, MIT licensed, and shipped as a single binary. Every agent call routes through it. Every spawn is recorded. Every child runs under a capability ceiling the parent cannot widen. It runs on Linux, macOS, and Windows, and it is model-agnostic (Ollama, Anthropic, OpenAI, Gemini, Bedrock, NVIDIA NIM, Tinfoil, Privatemode).