Spoiler alert: WBUR News ran a story called “Can A Computer Catch A Spy” that centers on this false premise:

Grimes suspected [Aldrich Ames] for a reason no algorithm would have divined: He just seemed different.

I call BS on the idea that humans in the CIA caught a spy by seeing something algorithms could not. Not only are algorithms incredibly able to divine “different,” they’re fast becoming a threat in their own right, to the point that we want them to overlook differences more often than find them.

Algorithms can typically see more differences than we can see, or want to see.

The story later admits this point itself by claiming computers are much faster than humans at making connections from random piles of data, forcing us to address some uncomfortable findings.

And then the story goes on to reverse itself again, claiming that algorithms can’t make meaningful connections without human assistance.

Bottom line: it’s a mess of a story, flip-flopping its way around the question of how to find a spy when he’s staring you in the face.

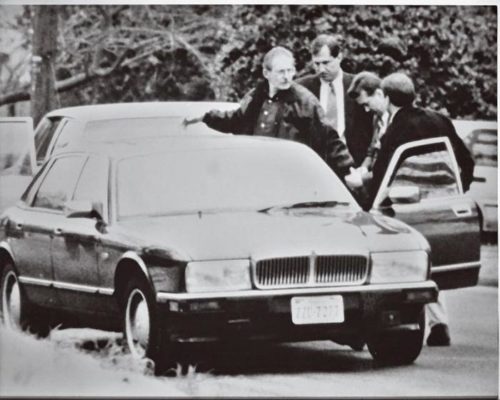

The lesson of Aldrich Ames was to question why humans had refused to “see” things that later seemed such obvious warning signs. So the next question in this context should be whether humans will detune computer algorithms to overlook signals in the same way humans themselves are prone to ignore them.
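To make "detuning" concrete, here is a minimal sketch of my own, not anything described in the WBUR story or used by the CIA. It assumes a simple z-score outlier check; the function name `flag_different`, the activity numbers, and both thresholds are hypothetical. The point is only that the same algorithm that sees "different" can be told to stop seeing it.

```python
from statistics import mean, pstdev


def flag_different(values, threshold):
    """Return the indices of values whose z-score magnitude exceeds threshold."""
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation
    if sigma == 0:
        return []  # everyone looks identical, so nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical data: some per-person activity count, with one obvious outlier.
activity = [4, 5, 3, 6, 5, 4, 5, 21, 4, 5]

# A sensitive setting notices the person who "just seems different".
sensitive = flag_different(activity, threshold=2.0)

# A detuned setting (threshold raised by a human) looks right past them.
detuned = flag_different(activity, threshold=3.5)

print(sensitive, detuned)
```

Nothing about the data changes between the two calls. The only difference is a human decision about how much "different" the algorithm is allowed to report.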

Fast forward to today, and there’s an essay competition ending December 15 that asks for new thinking on how to find insider threats:

The Office of the Under Secretary of Defense for Intelligence (OUSDI), in cooperation with WAR ROOM, is pleased to announce an essay contest to generate new ideas and elevate thinking about insider threats and how we respond to and counter the threat.

See also: Insider Threat as a Service (IaaS)