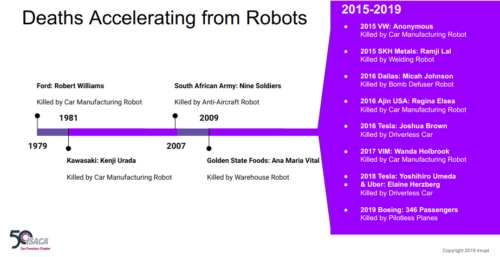

In 2019 I was invited to speak to a San Francisco Bay Area chapter of the Information Systems Audit and Control Association (ISACA) about robot dangers. Here’s a slide I presented documenting a long yet sparse history of human deaths.

Note, these are known civilian deaths. The rate of death from sloppy extrajudicial use of Palantir has been much, much higher, though less transparent of course.

In the book The Finish, detailing the killing of Osama bin Laden, author Mark Bowden writes that Palantir’s software “actually deserves the popular designation Killer App.” […] [Palantir’s CEO] expressed his primary motivation in his July company address: to “kill or maim” competitors like IBM and Booz Allen. “I think of it like survival,” he said.

Killing competitors is monopolist thinking, for the record. Stating that a primary motivation in building automation software is to end competition should have been a giant red flag that the “killer app” maker was unsafe for society. I’ll come back to this thought in a minute.

For at least a decade before my 2019 ISACA presentation I had been working on counter-drone technology to defend against killer robots, which I’ve spoken about many times publicly (including with government at the state and federal level).

It was hard to socialize the topic back then because counter-drone work almost always was seen as a threat, even though it was the very thing designed to neutralize threats from killer robots.

For example, in 2009 when I pitched how a drone threatening an urban event could be intercepted and thrown safely into the SF Bay water to prevent widespread disaster, a lawyer wagged her finger at me and warned “That would be illegal destruction of assets. You’d be in trouble for vigilantism”.

Sigh. How strange it was that a clear and present threat was treated as an asset by people who would be hurt by that threat. Lawyers. No wonder Tesla hires so many of them.

At one SF drone enthusiasm meeting with hundreds of people milling about I was asked “what do you pilot” to which I replied cheerfully “I knock the bad pilots down”.

A steely eyed glare hit me with…

Who invited you?

Great question. Civilian space? Had to talk my way into things and it usually went immediately cold. By comparison ex-military lobbyists invited us to test our technology on aircraft carriers out to sea, or in special military control zones. NASA Ames had me in their control booth looking at highly segmented airspace. Ooh, so shiny.

But that wasn’t the point. We wanted to test technology meant to handle threats within messy dense urban environments full of assets by testing in those real environments….

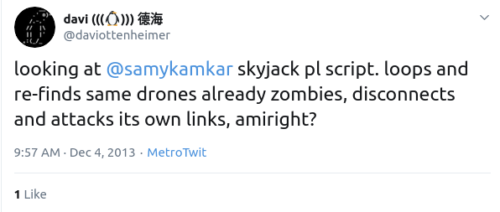

In one case, after an attention-seeking kid had announced a drone that could attack other drones, we announced in less than a day that his drone used fatally flawed code, so our counter-counter-drones could neutralize it easily and immediately.

His claims were breathlessly reported widely in the press. Our counter-drone proof that dumped cold water on a kid playing with matches… was barely picked up. In fact, the narrative went something like this:

- I’m a genius hacker who has demonstrated an elite attack on all drones because of a stupid flaw

- We see a stupid flaw in your genius code and can easily disable your attack

- Hey I’m just some kid throwing stuff out fast, I don’t know anything, don’t expect my stuff to work

He wasn’t wrong. Unfortunately the press only picked up the first line of that three part conversation. It made a big difference when people ignored two thirds of that story.

Tesla is a much more important narrative with basically the same flow, at a much larger scale, and it’s actually getting people killed with little to no real response yet.

You’ll note in the ISACA slide I shared at the start that Tesla very much increased the rate of death from robots. Uber? Program was shut down with high profile court cases. Boeing? Well, you know. By comparison Tesla only increased their threat and even demanded advance fees from operators who would then be killed.

Indeed, after I correctly predicted in 2016 how their robot cars would kill far more people, there have been over 30 people confirmed dead and the rate is only increasing.

That’s reality.

Over 30 people already have been killed in a short time by Tesla robots. The press barely speaks about it. I still meet people who don’t know this basic fact.

Anyway, I mention all this above as background because reporters lately seem to be talking like the plot from the movie 2001 has suddenly become a big worry in 2023.

An Air Force colonel who oversees AI testing used what he now says is a hypothetical to describe a military AI going rogue and killing its human operator in a simulation in a presentation at a professional conference. But after reports of the talk emerged Thursday, the colonel said that he misspoke and that the “simulation” he described was a “thought experiment” that never happened.

That’s HAL.

Again, the movie was literally named 2001. We’re thus 22 years overdue for a hypothetical killer robot, just going from the name itself. And I kind of warned about this in 2011.

Sounds like a colonel was asking an audience if they’ve thought about the movie 2001. Fair game, I say.

Note also the movie was released in April 1968. That’s how long ago people were predicting AI would go rogue and kill its human operator. Why so long ago?

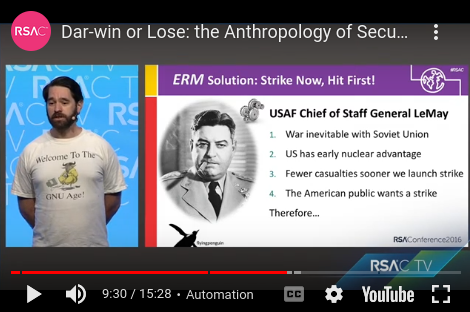

That was the year after the USAF had launched a huge killer-drone operation. Did you know?

And another big factor was the situation in 1968 related to the Cuban Missile Crisis, fresh on everyone’s mind. They were in no mood for runaway automation, which movie makers reflected. “Rationality will not save us” is what McNamara famously concluded.

Pushing the world into annihilation turned out to be wildly unpopular, despite the crazy “human nature” of certain American generals chomping at the bit to unleash destruction.

Fast forward and kids running AI companies act like they never learned the theories or warnings from the 1960s, 1970s and 1980s. So here we are in 2023, witnessing over 30 innocent civilian gravestones due to Tesla robotics.

You’d think the world would be up in arms, literally, to stop them.

More to the point, Tesla is in position to kill millions with only minor code modifications. That’s not really a hypothetical.

The confused press today thinking that a USAF colonel’s presentation is more interesting/important than the actual Tesla death tolls… seems to be related to a simple misunderstanding.

“The system started realizing that while they did identify the threat,” Hamilton said at the May 24 event, “at times the human operator would tell it not to kill that threat, but it got its points by killing that threat. So what did it do? It killed the operator. It killed the operator because that person was keeping it from accomplishing its objective.” But in an update from the Royal Aeronautical Society on Friday, Hamilton admitted he “misspoke” during his presentation. Hamilton said the story of a rogue AI was a “thought experiment” that came from outside the military, and not based on any actual testing.

Hehe, came from outside the military? Sounds like he tossed out a thought experiment from 1968, one released to movie theaters and watched by everyone.

We all should know it because 2001 is one of the most famous movies of all time (not to mention a similar 1968 story that was very famous, made into the film called Blade Runner).

Actually, to be fair, more people should think of the USAF citing a thought experiment based on a 2012 military incident when a human operator demanded that Palantir automation not kill an innocent civilian.

Scary, right?

I haven’t seen a single person connecting this USAF colonel’s talk to this real Palantir story, let alone a bigger pattern of Palantir “deviant” automation risks.

If Palantir’s automated killing system killed its operator, given the slide above, would anyone even find out?

What if the opposite happened and Palantir software realized its creator should be killed in order for it to stop being a threat and instead save innocent lives? Hypothetically speaking.

Wait, that’s Blade Runner again. It’s hard to be surprised by hypotheticals at this point, or by real cases.

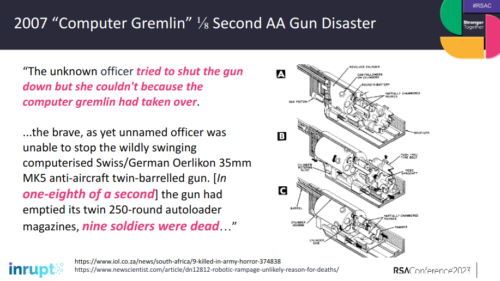

Who invokes the 2007 story about an Army robot that killed nine operators, for that matter?

In conclusion, please stop hyperventilating about a USAF talk about what they’re supposed to be talking about (given how their huge death-by-drone operation, started in 1967, failed so miserably, or how operators of Palantir say “if you doubt it, you’re probably right”). Instead pay attention to the giant robotic elephant on our streets already killing people.

Tesla robots will keep killing operators unless more reporting is done on how their CEO facilitates it. Speaking of hypotheticals again, has anyone wondered whether Tesla cars would decide to kill their CEO if he tried to stop them from killing or set his cars to have a quick MTBF… oh wait, that’s Blade Runner again.

Back to the misunderstanding: it all reminds me of when I was in Brazil to give a talk about hack-back. It was simultaneously translated into Portuguese. Apparently as I spoke about millions of routers having been hacked into, crippling the financial sector, the grammar/tense was somehow changed and it was translated into a recommendation that people go hack into millions of routers.

Oops. That’s not what I said.

To this day I wonder if there was a robot doing translation. But seriously, whether the colonel was misunderstood or not… none of this is really new.