A rather superficial analysis featured on War on the Rocks reveals a surprising lack of depth in its discourse on artificial intelligence (AI). The crux of its argument is that by lowering expectations, particularly for reliability, “innovation” can be reduced to nothing more than pushing a colossal and conveniently uncomplicated “plug and pray” button.

The authors’ apparently reductionist perspective not only fails to grasp the intricacies of AI’s potential in warfare but also overlooks the nuanced challenges that seasoned military analysts, with decades of combat experience, understand to be integral to the successful integration of advanced technologies on the battlefield.

America’s steadfast commitment to safety and security assumes that the United States has the three to five years to build said infrastructure and test and redesign AI-enabled systems. Should the need for these systems arise sooner, which seems increasingly likely, the strategy will need to be adjusted.

A closer examination of America’s commitment to safety and security reveals that a steadfast commitment inherently implies less reliance on assumptions, not more. The authors, however, leave a significant void in their argument by not clarifying their position on this. The closest they come to an alternative is a vague aspirational path labeled AI “assurance,” positioned between the extremes of measured caution and imprudent haste.

…urgently channel leadership, resources, infrastructure, and personnel toward assuring these technologies.

A realist imperative, however, underscores the dynamic nature of the geopolitical landscape, which necessitates a proactive stance rather than a reactive one. Planning three to five years ahead is a tangible goal, in contrast to shrinking release cycles into the imprudent “burn toast, scrape faster” mentality. The strategic imperative lies not merely in constructing a sophisticated AI apparatus but also in ensuring resilience and adaptability to the predictable exigencies of future conflict scenarios.

Here are a few instances of downrange events that unequivocally warrant the disqualification of AI innovations, a consideration surprisingly absent in the referenced article:

- Unintended engagement

- Disproportional and collateral damage

- Extra-judicial mistargeting (e.g., “If you doubt Palantir, you’re probably right”)

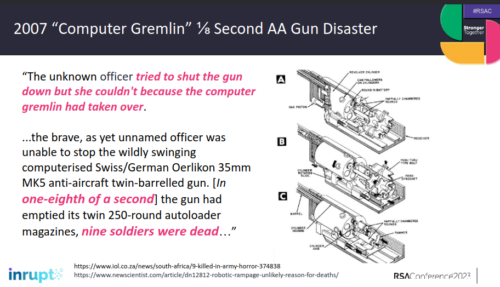

- Compromised command and control

- Operator harm (e.g., “How to hunt a robot”)

This War on the Rocks article by a “native Russian speaker,” however, shamelessly bestows excessive praise on Russia for accelerating towards an ill-conceived “automated kill chain” characterized by total disregard for baseline assurances. In doing so, the authors fail to acknowledge the pivotal point in drone engineering from the battlefield: oppressive Russian corruption and hollow patronage were left behind as Ukraine strongly asserted measured morality and quality control, which has been the true catalyst for Ukraine’s rapid and successful drone innovations (leaving the Russians perpetually in a clueless catch-up mode).

Russia’s reckless pursuit and indiscriminate deployment of AI, as highlighted in the War on the Rocks article, adds to the mounting evidence that Russian tanks and troops are grossly outmatched by adversaries who prioritize fundamental training and employ sophisticated countermeasures.

An overwhelming desire to switch into the “at any cost” haste of catch-up mode, devoid of any morality, is of little benefit when it brings about crushing technical debt and self-destructive consequences.

Remarkably, the authors neglect to explain why they omit Ukrainian strides in “small, relatively inexpensive consumer and custom-built drones” as an integral aspect of an American military strategy for effective targeting. Equally puzzling is their apparent belief that innovation ceases when others replicate it.

Taking a broader perspective, the American military ethos, characterized by augmentation for skilled professionals in tanks, has demonstrably outshone Russia’s reliance on over-automation guided by disposable conscripts who get themselves killed even faster than their enemy can manage. Despite Russia’s boastful rhetoric, its inability to distinguish between effective and ineffective strategies echoes historical patterns familiar to statisticians of World War II who examined the Nazi lack of technological prowess.

AI, far from being an exception to historical trends, appears to be a recurrence of unfavorable chapters. Reflect on the crossbow, the longbow, the repeating rifle, or even Churchill’s “water” tanks (e.g., how America ended up mass-producing Britain’s innovations)… and the trajectory becomes evident. For centuries, battlefields have been defined by advancements in weapon assurance, meaning genuine and practical measures of safety and security.

Abraham Lincoln is famously credited with urging the prudent use of time to sharpen an axe before felling a tree, a maxim applicable to any technology. The historical narrative strongly indicates that AI, as a technological frontier, will only serve to underscore the enduring wisdom attributed to the President who delivered an unconditional victory in America’s Civil War.