The heartbreaking Washington Post account of the tragic demise of a Tesla employee underscores a significant detail. The owner was under the influence of alcohol at the time of his fatal crash caused by the Full Self-Driving (FSD) system.

Notably, The Washington Post’s coverage meticulously substantiated Tesla’s role in the death of an owner who believed he could trust his CEO’s outlandish statements about FSD safety.

From this harrowing low point, the response unfolds predictably.

Tesla’s CEO has attempted to discredit the narrative by perpetuating the same perilous fallacy that operating a vehicle under the influence of alcohol with FSD engaged would ensure safety.

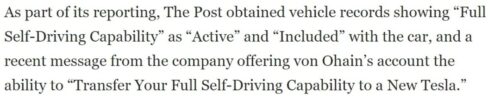

The facts remain stark: the Tesla employee who was killed was absolutely convinced he was using FSD at the time of his crash, a detail unequivocally clarified by The Washington Post.

More to the point, the consequences of the engineering culture that this CEO built, and that created FSD, are tragically evident: an intoxicated employee burned to death for believing FSD would protect him.

Consider this: if the Tesla employee who died was NOT using FSD despite thinking he had it running all the time, it implies that people within Tesla’s own ranks systematically misjudge whether FSD is even installed, which would be an even more egregious deception.

Tesla’s CEO unnecessarily compounds all the issues by obnoxiously perpetuating a false notion that driving under the influence with FSD is safe. He encourages further perilous behavior (while also proving the Washington Post reporting accurate). But he also undermines the very notion that FSD has any integrity at all when installed or during operation.

This situation evokes another urgent warning: Refrain from engaging with Tesla vehicles, and advocate against their usage among friends and family.

The Tesla response is like the CEO of Boeing suggesting, after a plane’s cabin door plug unexpectedly detached mid-flight, that passengers could prevent such occurrences by relying even more on engineering known to be defective, and by continuing to fly as usual despite news of a serious failure.

Boeing’s planes were GROUNDED without any fatalities. Why are Teslas still allowed to operate on public roads with hundreds already dead and the toll rising?

Musk is intentionally deceiving people. If his staff believed they had FSD installed and didn’t, they were misled. If they did have FSD installed and still crashed, it contradicts Musk’s claim that it would prevent such incidents.

In either scenario, Musk is cornered. Checkmate. Fraud is evident no matter which way you look at a Tesla.

But let’s focus for a second on the fact that Musk clearly and unapologetically continues to assert that FSD will prevent crashes for drunk drivers. This is exactly the wrong thing to say and demonstrates culpable negligence. Musk repeats the very dangerous and misguided beliefs that should be blamed for this tragedy, which killed a Tesla employee.

If you don’t blame FSD for this crash, you’re drunk.

Claims about self-driving safety differ hugely from claims about earlier safety technologies. Consider seatbelts or airbags. FSD is literally sold on promises of active automation and autonomy, the exact opposite of passive safety features like seatbelts and airbags. FSD shifts driving liability to the vehicle itself, as it takes active control over driving. Advocating for the safety benefits of seatbelts and airbags, which are entirely passive with zero feedback loop to the driver, does not in any way increase reckless behaviors such as driving under the influence. In fact, drunk drivers are known to leave their seatbelts OFF and die from flawed reasoning. The same flawed reasoning is what leads them to turn FSD ON and die as a result.

Opposites.

When discussing self-driving technology, suggesting that it could prevent accidents caused by impaired driving will definitely spread the flawed idea that it’s acceptable to engage in risky behavior. Elon Musk should be held to account for FSD turning out to be a death-trap of his design.

In essence, a clear distinction lies in the flawed active role that self-driving technology plays in the driving process compared to the proven passive safety features provided by seatbelts and airbags.