Sometimes I am asked to review or explain a framework of thinking systems in terms of a very popular book by Nobel laureate and father of behavioral economics, Daniel Kahneman.

…human reason left to its own devices is apt to engage in a number of fallacies and systematic errors, so if we want to make better decisions in our personal lives and as a society, we ought to be aware of these biases and seek workarounds.

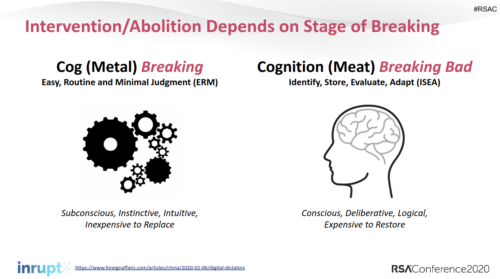

I suppose this comes up most when I describe the same things in many of my presentations, such as my last one given at RSAC SF:

My point has usually been that veterinary science used this duality in thinking to solve Rinderpest (as I wrote here in 2010, a year before Kahneman’s very famous best-selling book was published).

And my point in describing the dual-system approach that solved Rinderpest, an accomplishment as huge as ending a disease, has been that our security community could perhaps do something similar to solve integrity attacks on information systems. You say we have a problem with disinformation campaigns and I’ll say I have a possible solution!

Kahneman himself just gave a brief three-minute presentation the other day in an “AI Debate”. He quickly starts off by admitting:

…they’re not my idea but I wrote a book to describe them.

He then goes on to say his understanding of system one is that “things happen to you, you don’t do them”, calling them automatic and parallelized, whereas system two is “something you do” and serialized… all of which seems very consistent with my slides.

Again for clarity:

System 1) things that happen to you

System 2) things that you do

Not only is this consistent with what I studied before his book was published; the split is of course NOT my idea either, as I’ve always said.

I have been writing a book to describe them, but it has been for the purposes of improving safety in engineering practices.

What is most interesting in his presentation is that, while he tells us system one is “our world”, it’s probably more accurate to say (by his own admission) that in system one we are seeing shadows on Plato’s cave wall, not the strings we pull.
