Elon Musk infamously boasts he makes mistakes whenever and doesn’t respect the rules.

Well, I guess we might make some mistakes. Who knows?

This seems to be coming up repeatedly as bad news for his customers, let alone anyone around them, when their car acts like the CEO and gloatingly crosses a double yellow.

Another Tesla, another Tesla owner dead:

The preliminary investigation revealed, at approximately 7:50 a.m., a 2019 Tesla Model 3, occupied by five subjects, was traveling southbound in the left travel lane in the 3000 block of Connecticut Avenue, Northwest. The Tesla crossed the solid-double yellow lines into the northbound lane of travel, struck a 2018 Toyota C-HR, and then struck a 2010 Mercedes-Benz ML-350, head-on. […] On Sunday, February 26, 2023, the driver of the Tesla succumbed to his injuries and was pronounced dead.

The road suggests the driver was operating in a straight line on a road known for speed abuse (e.g. “40 to 60 percent of the people completely disobeyed the speed limit by more than 12 miles per hour”). A related problem with Tesla ignoring double yellow has been reported for many years. The algorithm treats lines as open to cross in almost any case, such as a vehicle slowing ahead.

I’ll say that again: Tesla engineering allegedly treated a double yellow the same as a dotted white line. Cars were trained to attempt unsafe maneuvers to feed unnecessary speed.

In this case when a car ahead slowed for the pedestrian crossing (as they should), a speeding Tesla algorithm likely reacted by accelerating in a jump across the double yellow into a devastating head-on crash… by design!

That crash scene reminds me of December 2016 when Uber driverless was caught running red lights, foreshadowing one death a year later that brought criminal charges and shut down their entire driverless program.

Tesla driverless software caused a similar fatality at basically the same time, in April 2018. Their cruel and unusual reaction was to increase the charge more and hire an army of lawyers to make the victims and their story disappear.

…when another vehicle ahead of him changed lanes to avoid the group [of pedestrians], the Model X accelerated and ran into them [killing Umeda].

2018.

Tesla software fatally accelerated straight into a traffic hazard after it saw a vehicle ahead evade it. See why this 2023 crash reminds me of 2018?

Did you hear about this death and how bad Tesla was, or just the Uber case? The crashes were around the same time, and both companies should have been grounded. Yet Tesla instead bitterly sparred with a grieving family and manipulated the news.

Here’s a 2021 Tesla owner forum report showing safety engineering has regressed, with FSD five years later feeling worse than Uber’s cancelled program.

Had a super scary moment today. I was on a two lane road, my m3 with 10.6.1 activated was following a van. Van slowed down due to traffic ahead and my car decided to go around it by crossing the yellow line and on to oncoming traffic. […] The car ahead of me wasn’t idling. We were both moving at 25 mph. It slowed down due to traffic ahead. My car didn’t wait around. It just immediately decided to go around it crossing the yellow line.

A cacophony of voices then chimes in to say the same thing happened to them many times, their Tesla attempting to surge illegally into a crash (e.g. “FSD Beta Attempts to Kill Me; Causes Accident”). They’re clearly disappointed software has been designed to ignore double yellow in suicidal head-on acceleration, although some try to call it a bug.

In January 2023, a new post shows Tesla still has been designed to ignore road safety. This time Tesla ignores red lights and stopped cars, allegedly attempting to surge across a double yellow into the path of an oncoming train!

2023. A ten year old bug? Ignoring all the complaints and fatalities?

You might think this sounds too incompetent to be true, yet Tesla recently was forced to admit it has intentionally been teaching cars to ignore stop signs (as I warned here). That’s not an exaggeration. The company dangerously pushes a highly lethal “free speed extremism” narrative all the way into courts.

A speed limiter is not a safety device.

That’s a literal quote from Tesla who wanted a court to accept that reducing speed (e.g. why brakes are on cars) has no effect on safety.

As the car approached an intersection and signal, it accelerated, shifted and ran a red light. The driver then lost control… [killing him in yet another Tesla fire]. The NTSB says speeding heightens crash risk by increasing both the likelihood of a crash and the severity of injuries…

Let me put it this way. The immature impatient design logic at Tesla has been for its software to accelerate across yellow lines, stop signs and through red lights. It’s arguably regressive learning like a criminal, to be more dangerous and take worse risks the more it’s allowed to operate.

…overall [Tesla’s design feeds a] pattern here in Martin County of more aggressive driving, greater speeds and just a general cavalier sense towards their fellow motorists’ safety.

The latest research on Tesla’s acceleration to high speed mentality shows it increased driver crash risk 180 to 340 percent, with a survival rate near zero! Other EV brands such as Chevy Bolt or Nissan LEAF simply do not have any of this risk and are never cited.

340 percent higher crash risk because of design.

You have to wonder about the Tesla CEO falsely advertising his car would magically be safer than all others on the road… while also boasting he doesn’t know when obvious mistakes are being made. It’s kind of the opposite. The CEO likely is demanding known mistakes be made intentionally, very specific mistakes like crossing the double yellow and accelerating into basically everything instead of slowing and waiting.

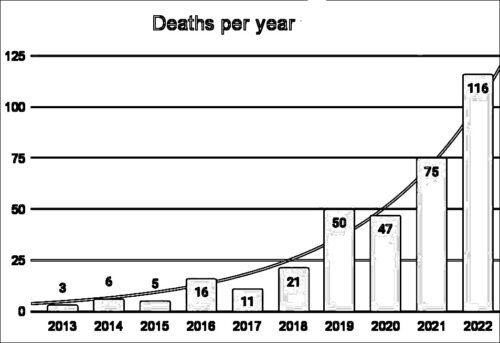

People fall for this fraud and keep dying at an alarming rate, often in cases where it appears they might have survived in any other car.

And then you have to really wonder at the Tesla CEO falsely advertising his car would magically drive itself, inhumanely encouraging owners to let it wander unsafely on public roads (e.g. “wife freaks” at crossing double yellow on blind corner) yet never showing up for their funerals.

Let me just gently remind the reader that it was a 2013 Tesla crossing a double yellow killing someone that Elon Musk boasted was his inspiration to rush to market “Autopilot” and prevent it ever happening again.

2013.

Ten years later? Technology failures that indicate reckless management with a disregard for life that rises to the level of culpable negligence.

Note there is another big new lawsuit filed this week, although it talks about shareholder value as if that’s all that we can measure.

Tesla finally is starting to be forced by regulators into fixing its “flat wrong” software. The gravestones are proof of the mistakes being made. The grieving friends and families know.

The deceased driver’s profile in this latest crash says he went to Harvard, proving yet again intelligence doesn’t protect you from fraud.

And that’s not even getting to reports of sudden steering loss from poor manufacturing quality, or wider reports about abuse of customers:

Spending over $20,000 on a $500 repair… a LOT of customers are getting shafted on this…TRUSTING Tesla do this, and they’re failing horribly at the expense of the customer.

If you don’t die in a crash from substandard hardware and software engineering, or grossly negligent designs, Tesla’s big repair estimate scams might kill you.

Why are Teslas allowed on the road?

They sold everyone a Snickers. Just because people act stupid happy to have nougat and a little caramel doesn’t change the fact they’re missing peanuts and chocolate.

Some of the suckers even believe they’re “funding” safety research by throwing good money and lives at a bad car. Tesla can’t figure out how to build a product that didn’t exist, doesn’t exist, and never will exist on the cars it was sold for. That’s fraud. Harvard educated? What a waste.

Wow. You have done quite a lot of good work here. Here’s my takeaway:

2013 Tesla CEO responds to a fatality from Tesla crossing double yellow by announcing “Autopilot” will prevent such collisions.

2023 Tesla owners are paying a huge extra cost for software that kills them by crossing double yellow. Design? Training? It’s unsafe.

When a company boasts 0-60 in under 3 seconds, they also should be required to state that the fatality risk at 60 jumps to nearly 100%. Or, to put it simply, for every 10 mph faster you go, your risk of dying in a crash doubles.
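To make the arithmetic of that rule of thumb concrete, here is a minimal sketch. It assumes the commenter's heuristic (risk doubles per 10 mph) holds as a simple exponential; the function name and the `doubling_interval_mph` parameter are hypothetical, and this is not a validated crash model.

```python
def relative_fatality_risk(speed_from_mph, speed_to_mph, doubling_interval_mph=10.0):
    """Relative risk multiplier under the assumed 'doubles every 10 mph' heuristic.

    This only encodes the rule of thumb quoted in the comment; it says nothing
    about absolute fatality percentages.
    """
    speed_increase = speed_to_mph - speed_from_mph
    return 2.0 ** (speed_increase / doubling_interval_mph)


# Example: a car that jumps from 30 mph to 50 mph
multiplier = relative_fatality_risk(30, 50)
print(f"Risk multiplier from 30 to 50 mph: {multiplier:.1f}x")
```

Under this assumption, a 20 mph increase compounds two doublings, so the multiplier is four times the baseline rather than two.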

You obviously know your stuff, but I feel like I have to say this out loud.

https://safety.fhwa.dot.gov/speedmgt/ref_mats/fhwasa1304/Resources3/08%20-%20The%20Relation%20Between%20Speed%20and%20Crashes.pdf

If a Tesla at 30mph on this street uses its “fun” design to automatically jump across double yellow at 50mph into oncoming traffic, fatality risk goes to 80% or more with zero time for a driver to intervene… and for what?

FOR WHAT?

Who TAF with a degree in engineering thought the ADAS L2 decision to cross double yellow was anything other than a huge blasting warning and full STOP demanding driver attention?

After watching that “wife” link I’m thinking all FSD should have been grounded and recalled.

I see no reason in the world any Tesla should be in a rush to jump the double lines EVER! Stop. Observe. Go around if required. The car acts like it’s above the law with police flashers on for everyone else to get out of the way?

The fact that anyone in an engineering role enabled this kind of gross culpable negligence in their software… it’s like the rotten VW Dieselgate con but 1000X worse and repeatedly killing people.

Put these heartless, soulless Tesla teams on trial and stop this abuse of public trust.

This company is a disgrace to the profession of engineering. I see a clear safety threat that needs intervention.

Thank you for all that you have done and continue to do. De oppresso liber.

I regularly use FSD Beta.

It has never been anything other than TOO CAUTIOUS, and impeding traffic to the point where other drivers get pissed and try to pass when it is unsafe. E.g. coming to a full and complete stop at the stop line, then creeping forward slowly when the only other vehicle within sight is the one I’m blocking. Yes, this is failing to follow the law, and in many municipalities, it is unexpected behavior and often confuses other drivers.