A Tesla has been in a crash with an F-150, leaving the Tesla owner dead. The crash quietly revealed, yet again, that the “Autopilot crap” is really, and always will be, crap.

One of the shocking things in April 2016 was that a man who clearly knew nothing about cars promoted a deceptively named, non-working “Autopilot” as already capable of collision avoidance.

A video of an entirely avoidable near-miss, shared by Josh Brown, was unapologetically spun into a dangerously misleading Twitter PR campaign (disinformation that killed Brown himself only weeks later, as he trusted the CEO’s suggestion that collisions would be avoided).

As you probably know by now, his unnecessary death (and the Tesla CEO’s response that radar would be added to cars to prevent any more deaths, a statement he later reversed to increase margins on sales) sparked a series of talks by me over the following years about the fraud (and gaslighting).

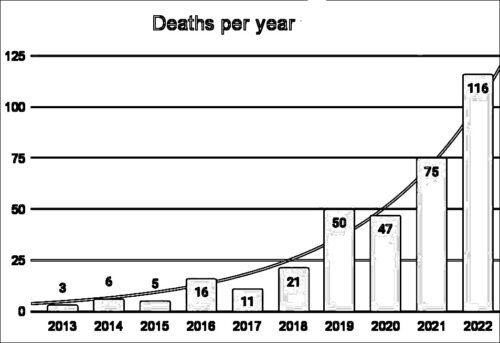

I hoped to raise awareness from a safety engineering perspective. Tesla seemed to be run by someone detached from reality and uncaring about human life, a combination I expected would kill more people than ever.

While my warnings about this have been accurate, I’m still not sure the right people are getting the blazingly loud tesladeaths.com message yet.

I suppose it was easy for me to call out Tesla’s early “safest car” claims as fraud. That is because I look deeply into the data, look deeply into the technology, and… let’s be honest, this isn’t rocket science.

It’s actually pretty easy stuff for anyone to see. Just take a little time to read, know how to drive a car, and don’t believe hype from a man who gets enraged whenever his name isn’t mentioned in news about Tesla.

Here’s the latest problem, which quietly leaked out of Corona police reports. The initial safety bulletin was sparse:

> On March 28, 2023, at 10:16 P.M., the Corona Police Department responded to a traffic collision at the intersection of Foothill Parkway and Rimpau Avenue. Upon arrival, officers discovered that a Ford F-150 truck had entered the intersection against a red light and collided with a Tesla. The driver of the Tesla, a 43-year-old male resident of Corona, succumbed to his injuries at the scene…

Red light crash? That is one of the core areas of control-failure research I have spoken out about for years, the equivalent of a firewall rule bypass, which should make any SOC analyst’s ears burn.

To me the report reads as though a Tesla in 2023 failed to avoid a collision, despite its promised capability. That is a very big clue for predicting more crashes, and worth investigating further (i.e. the entire basis of my presentations and predictions for the past seven years).

After months of watching and waiting for more details from Corona, California, nothing came (unlike a Colorado investigation of killer robots, for comparison). We haven’t heard from police, for example, whether the CEO-driven Tesla fraud campaign is in trouble for its role in this crash. Could his buggy, oversold software have done a better job here than the human… at the very thing he repeatedly promises: collision avoidance?

Fortunately, NHTSA now has a Standing General Order (SGO) on Crash Reporting, whose data researchers can easily correlate with these dry police reports. More to the point, the SGO helps us assess the future dangers of various driver “assistance” systems.

> Entities named in the General Order must report a crash if Level 2 ADAS was in use at any time within 30 seconds of the crash and the crash involves a vulnerable road user or results in a fatality, a vehicle tow-away, an air bag deployment, or any individual being transported to a hospital for medical treatment.
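The Level 2 reporting rule is essentially a boolean predicate: ADAS in use inside the 30-second window, AND at least one severity trigger. Here is a minimal Python sketch of that logic. The `Crash` record and its field names are hypothetical and illustrative, not NHTSA’s actual SGO data schema, and the sketch assumes the 30-second window is inclusive.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical crash record; field names are illustrative, NOT the SGO schema.
@dataclass
class Crash:
    # Seconds before impact that Level 2 ADAS was last in use (None = never in use).
    adas_last_active_s_before: Optional[float]
    vulnerable_road_user: bool = False
    fatality: bool = False
    tow_away: bool = False
    airbag_deployed: bool = False
    hospital_transport: bool = False

# Assumption: "within 30 seconds" is treated as inclusive.
REPORTING_WINDOW_S = 30.0

def must_report(c: Crash) -> bool:
    """True if ADAS was in use within the window AND any severity trigger applies."""
    adas_in_window = (
        c.adas_last_active_s_before is not None
        and c.adas_last_active_s_before <= REPORTING_WINDOW_S
    )
    severe = (
        c.vulnerable_road_user
        or c.fatality
        or c.tow_away
        or c.airbag_deployed
        or c.hospital_transport
    )
    return adas_in_window and severe

# ADAS that disengages one second before a fatal impact is still reportable.
print(must_report(Crash(adas_last_active_s_before=1.0, fatality=True)))
```

Under this rule, a system that hands control back a second before impact still lands well inside the window, which is exactly what the 30-second lookback is for.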

That 30-second window exists because Tesla tried to cheat by disabling its technology at the very last second, so it could falsely blame humans for computer failures.

Tesla seems to blame drivers even though, at least in the sixteen high-profile emergency-vehicle crashes NHTSA examined, Autopilot was running but shut off just a second before impact. In those crashes, Teslas rammed into emergency vehicles stopped alongside roadways or in active lanes, and NHTSA found that, on average, a human could have identified the hazard about 8 seconds ahead of time.

Super evil stuff.

It’s hard for me to believe people still buy this brand after its founder was pushed out for being concerned about safety. Let me put it plainly: Tesla management appears to be intentionally cooking data to avoid accountability when its product fails.

You cannot trust anything Tesla says about Tesla logs. Assume they are the Enron of automobile data.

What they do is far, far worse than VW’s “Dieselgate,” since people are literally dying from their cover-ups.

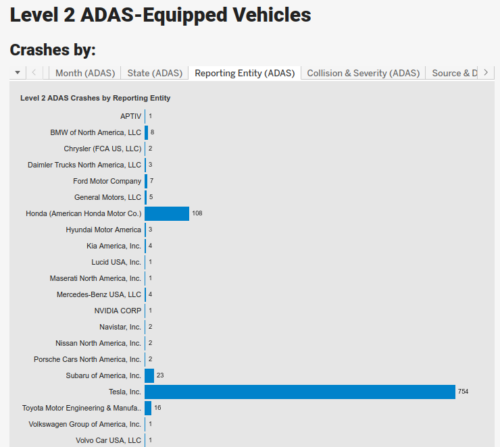

At this point NHTSA might as well call it the Tesla Order, given how Tesla sticks out like a sore thumb among all the other carmakers.

Is it volume? Nope.

To put it another way, Nissan claims nearly 600,000 vehicles running its Level 2 ADAS, “ProPilot Assist,” yet it appears to have reported only two crashes. Volvo similarly has a high volume of cars, which shows up as just one crash.

Tesla has design flaws causing a crash frequency that puts it in a zone all its own, at over 700 reported crashes and rapidly increasing. The more people use its products, the more people die or are harmed, unlike with any other carmaker.

Calling it garbage engineering rushed to market puts it mildly, and it is made worse by a CEO who fraudulently promotes low-quality software as if it were magic fairy dust. His recent decision to personally screen every single Tesla employee for loyalty to him (compare the infamous moral dilemma of the Hitler Oath, for those who know history) indicates he is becoming desperate to hide failure.

> [Creating a fear and harm culture where staff] excuse themselves from any personal responsibility for the unspeakable crimes which they carried out on the orders of the Supreme Commander whose true nature they had seen for themselves. […] Later and often, by honoring their oath they dishonored themselves as human beings and trod in the mud the moral code…

It is a grave mistake (pun not intended) to take any job at Tesla, as German autoworkers surely know well. Their country, unlike America, requires them to study the anti-Semites who caused the Holocaust and how they took over media to spread hate.

Anyway, back to this tragic Corona crash, reported to NHTSA in April. A Tesla owner clearly didn’t get the memo about Tesla safety failures and disinformation as his car drove toward the wide-open intersection of Rimpau and Foothill.

So here are the SGO details just released. They show the destroyed Tesla was brand new, essentially on the road for the first time, traveling at only 47 mph at 10 PM (lighting: “Dark – Lighted”), and with Level 2 ADAS in use within 30 seconds of the crash, since that is what puts a crash in the SGO data.

- Model Year: 2023

- Mileage: 590 miles

- Date: March 2023

- Time: 05:15 (10:15 PM local time)

- Posted Speed Limit: 45 mph

- Precrash Speed: 47 mph

That 590-mile odometer reading really grabbed me. It sounds like very compelling proof that Tesla’s safety claims, like its customers, are dead. And the speed was below 50 mph, which at a wide-open intersection should have significantly shortened reaction and stopping distances, and thus lowered the risk of harm.
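The speed point can be made concrete with standard kinematics. Stopping distance is reaction distance (speed × reaction time) plus braking distance (v²/2a). The following minimal Python sketch assumes a 1.5 s perception-reaction time and 7 m/s² of braking deceleration. Both are illustrative values chosen here, not figures from the police or SGO reports.

```python
# Exact conversion factor: 1 mph = 0.44704 m/s.
MPH_TO_MS = 0.44704

def stopping_distance_m(speed_mph: float,
                        reaction_s: float = 1.5,   # assumed reaction time
                        decel_ms2: float = 7.0     # assumed dry-pavement braking
                        ) -> float:
    """Total stopping distance in metres: reaction distance + braking distance."""
    v = speed_mph * MPH_TO_MS
    reaction = v * reaction_s            # distance covered before the brakes engage
    braking = v ** 2 / (2 * decel_ms2)   # from v^2 = 2 * a * d
    return reaction + braking

for mph in (47, 60, 75):
    print(f"{mph} mph: {stopping_distance_m(mph):.1f} m")
```

Braking distance grows with the square of speed, so under these assumptions a car at 47 mph needs far less room to stop than one at highway speed.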

It’s worth further research to understand why the newest Tesla ADAS, the most recent and theoretically most improved product, again failed to avoid a fatal crash at moderate speed. Given that it has been advertised as collision avoidance that supposedly improves over time, the advertising isn’t even close to the product’s actual ability.

The data even suggests an older Tesla could be less likely to crash fatally than any new one (Mobileye and Nvidia both supplied Tesla with superior engineering, yet both walked away over serious ethics concerns).

Tesla technology and management of risk to society seems to be only getting worse, crashing in ways that weren’t a problem five or more years ago.

We see far too many brand-new Teslas crash almost immediately like this, going straight from factory to junkyard as if headed toward being the least safe thing on the road. The CEO talks about his product as if it will improve, yet it declines, and real-world experience suggests he is selling little more than overpriced coffins.

On a related note, the top five recent ADAS fatalities by speed (MPH) were all… Teslas flagrantly ignoring road signs and failing to avoid collisions.

- Posted Speed: 55; Precrash: 97 into another car

- Posted Speed: 45; Precrash: 97 into motorcycle

- Posted Speed: 55; Precrash: 96 into fixed object

- Posted Speed: 65; Precrash: 96 into fixed object

- Posted Speed: 55; Precrash: 90 into another car
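Ranking these five entries by how far they exceeded the posted limit, rather than by raw speed, shows the gap more directly. In this short Python sketch, the `Fatality` record and `excess_mph` helper are hypothetical names introduced here for illustration. The numbers are only the five entries listed above.

```python
from typing import NamedTuple

class Fatality(NamedTuple):
    posted_mph: int
    precrash_mph: int
    struck: str

# The five ADAS fatalities listed above, in the same order.
TOP_FIVE = [
    Fatality(55, 97, "another car"),
    Fatality(45, 97, "motorcycle"),
    Fatality(55, 96, "fixed object"),
    Fatality(65, 96, "fixed object"),
    Fatality(55, 90, "another car"),
]

def excess_mph(f: Fatality) -> int:
    """How far above the posted limit the vehicle was traveling."""
    return f.precrash_mph - f.posted_mph

# Rank by how far over the limit each vehicle was, not by raw speed.
for f in sorted(TOP_FIVE, key=excess_mph, reverse=True):
    pct = 100 * excess_mph(f) / f.posted_mph
    print(f"{f.precrash_mph} mph in a {f.posted_mph} zone, into {f.struck}: "
          f"+{excess_mph(f)} mph ({pct:.0f}% over)")
```

Every one of the five was at least 31 mph over the limit. The motorcycle crash, at 97 mph in a 45 mph zone, was more than double the posted limit.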

How “auto” can any “pilot” ever be when it has clocked over 700 catastrophic errors such as these?

Would any actual pilot, human or not, be allowed to keep piloting for even one more minute after fatal crashes into things at this frequent pace? The fact that software can be used to kill, and then kill again and again, hundreds of times, is truly diabolical.

And it is a strange twist to history, albeit a repeat, that a Ford just destroyed what arguably is a fascist machine.

Is it a moral act to destroy or disable Tesla before its unsafe ADAS will predictably crash at high speed into innocent people?

Did the F-150 save lives by taking this Tesla off the road? Who in your neighborhood is equipped and prepared to stop what could be seen as a VBED edition of the poorly-engineered Nazi V1? Floridians, looking at you.

Florida Passes Bill to Protect Billionaires if Their Exploding Rockets Kill People

That’s a “lives don’t matter” bill, rooted in pre-Civil War thinking.

After years of Tesla deaths rapidly increasing, the real elephant in the room is… what if Tesla in fact intends to automate organized racist violent criminal acts like when Henry Ford had encouraged and helped Adolf Hitler?

What if Tesla conspires with large foreign financial backers to facilitate automated deaths for profit every minute it is “free” to apocalyptically continue “learning”; a model setup to get away with more harms to Americans?

Perhaps driving a F-150 has just taken on a whole new meaning.