A very popular post here has been my examination of white nationalist messaging through T-shirt designs, followed by one on the use of Q in modern communications.

Nothing I’ve written so far, however, can hold a candle to this new article (How subtle changes in language helped erode U.S. democracy — and mirrored the Nazi era) that explains how Trump repeatedly used language with the intention it would encourage others to commit a terrorist act:

Klemperer used his training in language and literature to listen to those around him. Initially he focused on the core falsehood of the Nazi regime, that victory in World War I had been stolen from Germany by leftist (read: democratic) politicians. He also observed the proliferation of right-wing groups such as the Storm Troopers (SA), each with its own symbol and slogan, flooding the language with new acronyms.

Gradually, he realized that the Nazi assault on language went much deeper. He noticed that the Nazis had cunningly borrowed from Christianity, above all the term “belief.” Detaching the word from its religious meaning, they demanded “blind belief” in their conspiracy theories and the lie of the stolen victory. Taking things on faith suddenly was seen as a virtue.

He also saw that the Nazis disguised their most violent acts by using misleading words, such as “concentration camp,” a word borrowed from South Africa, instead of calling them what they were — “death camps.”

But Klemperer’s well-trained ear also detected subtler changes, including a sudden rise in superlatives such as “gigantic,” “great” and “huge.” He even thought the Nazis overused exclamation marks to signal that they held questions in contempt. Klemperer called it “the language of the Third Reich.”

One could argue that Trump was carrying on a long tradition in the GOP (Nixon, Goldwater, Reagan) of using coded, violently racist speech and “white cap” (KKK) tactics to fly by undetected, much like the example above of saying “concentration camp” instead of “death camp”.

After all, Trump was allowed to campaign as an obvious Nazi by using its “America First” brand, while people still freely reference things like “Shining City on a Hill” (cunningly misappropriated from Christianity) when they want to openly promote white nationalism.

However, big tech very ignorantly helped Trump abuse social media in ways that even 15-year-old boys are banned from doing in places like the UK:

…the teenagers were part of a Telegram chat group that was found to contain images of Adolf Hitler and the white extremist involved in the 2019 Christchurch massacre. Telegram is a messaging app with the option of end-to-end encryption. One of the boys, a 16-year-old from Kent, is accused of providing an electronic link that allowed others to access a terrorist publication – the “white resistance manual”. He did so with the intention it would encourage others to commit a terrorist act, it’s alleged.

It all raises the question of bad code and quality control in big data technology as a measure of scientific thought, as I’ve presented here for over a decade now.

Another new study suggests a sinister sense of white supremacy lurks behind “tech elitism” in America.

Many don’t recognize what they’re facilitating because they encode their beliefs with words like “meritocracy” (libertarian/anarchist) to express why they believe white men must rule and operate above any law. This is information warfare by white men, taking as much money as quickly as possible from government and stuffing it in their own pockets.

In terms of kinetic war it was perhaps best expressed recently in Iraq as…

[Don’t] let that moron talk about how courageous I was. I’m not here for this mission. Screw these people. I’m here for me. For the money. […] I have no ethical obligation to the people… I’m on my own. I’m a number on a government contract. A nearly empty single-serve coffee cup ready to be discarded and sent to a landfill.

I hear this will also be the new recruitment pitch for Peter Thiel’s latest venture Anduril (“fire from the west” or an “unbreakable” sword in fiction terms, as in Aragorn always yelling “ANDURIL” before battle).

It launched itself a year ago as modern “border wall technology” and is allegedly soaking up a lot of taxpayer money led by a CEO who made his name “shitposting” democracy and getting a pardon for a man who committed “the biggest trade secret crime [his sentencing judges] have ever seen”.

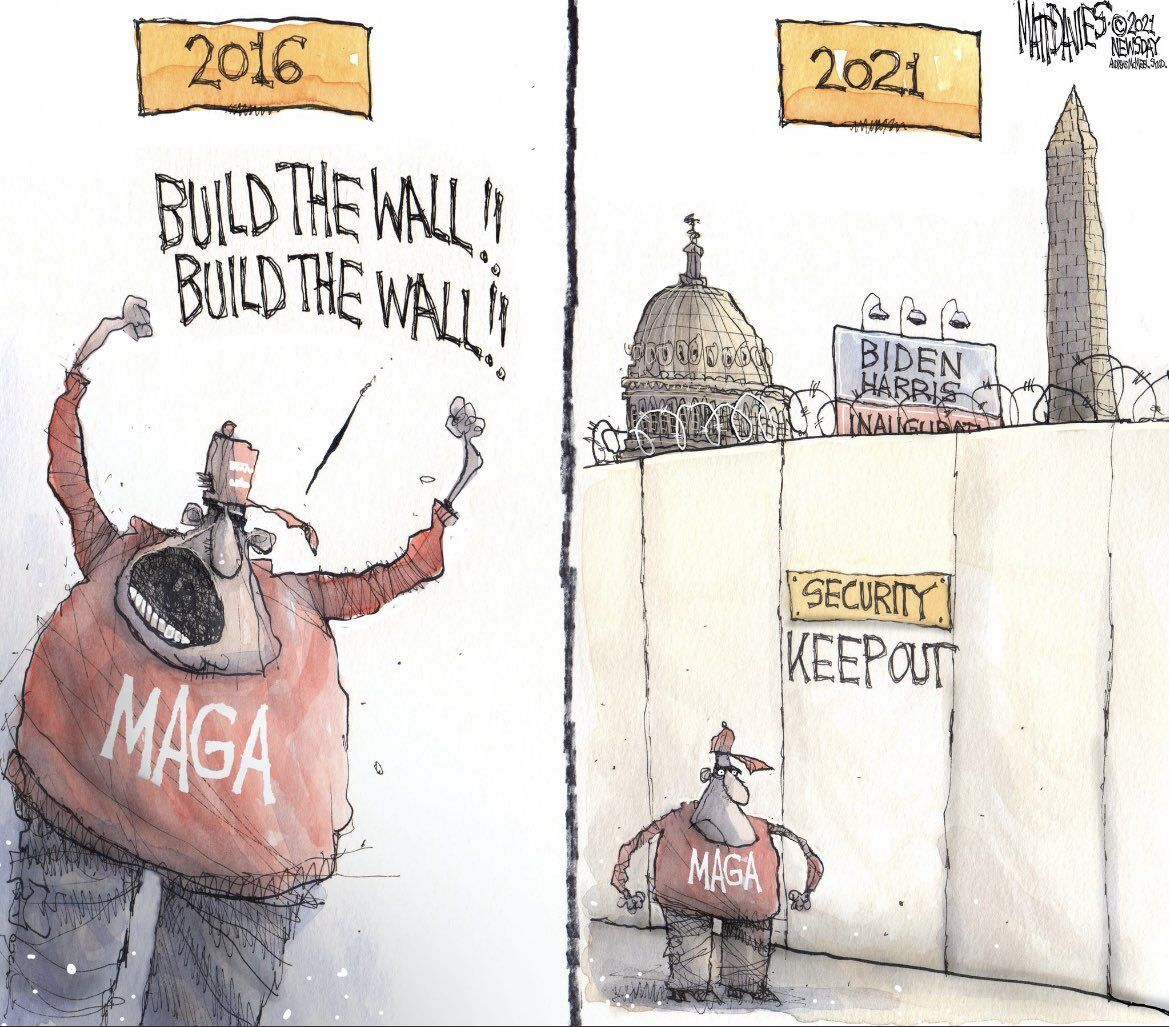

Now we just need cartoonists like this to specify which side of the wall Thiel is on after it is built by his sprawling private firms…

When rich depths of research exist in linguistics, with scientists dedicating themselves to finding and filtering the Nazi assault on language, you really have to wonder. Why do modern technology “experts” say they can’t easily see poison in carefully curated bottles they package and ship? Are they in fact just peddling anti-science (snake oil) products for ill-gotten profit?

Allowing a Nazi assault on language surely should carry some culpability, like allowing a company to run without firewalls (e.g. CardSystems was shut down for gross security incompetence, and Facebook perhaps should have been examined in the same way since at least 2015).

One could imagine that a partnership with SPLC and FCC (presuming a Nazi-sympathizer like Ajit Pai has diminished influence) would go a long way for any American technology company that really wanted to stop Nazis and KKK from having such an unfettered impact on the country. Again, I have to reiterate that pro-Nazism emanating from within the agencies created in America to stop Nazism is peak irony and does make this whole process a lot more complicated.

Line in Taiwan gives a good example of how technologists outside of America are using public-private collaborations to address disinformation attacks (and for gains measured beyond just private profit):

This collaborative bot model, although in its early stages, has substantial benefits. First, Line does not have to interfere directly with conversations and potentially infringe on free speech rights. While this approach may limit its reach, it also prevents the company from becoming an “arbiter of truth,” something social media platforms have shied away from. Second, it doesn’t make users leave the app to verify information—something that’s beneficial both to users’ real-time ability to discern disinformation and for Line’s bottom line, a rare win-win. Third, because the bot can aggregate submissions and verifications from millions of users and multiple platforms, the fact-checking service gets stronger each time it’s used. In this vein, Line can also collect valuable data previously unavailable to it, such as popular topics exploited by disinformation campaigns and language similarities across posts.
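To make the aggregation idea in that description a little more concrete, here is a minimal sketch in Python. It is not Line's actual implementation; every name in it (`FactCheckBot`, `submit`, `verify`, `top_unverified`) is hypothetical, and the in-memory storage is an assumption made purely for illustration. It only shows the shape of the loop: users forward a message to the bot, fact-checkers attach a verdict, and each submission adds to the data about what is circulating.

```python
from collections import Counter
from dataclasses import dataclass, field


def normalize(text: str) -> str:
    """Collapse case and whitespace so near-identical forwards share one key."""
    return " ".join(text.lower().split())


@dataclass
class FactCheckBot:
    # Hypothetical in-memory stores; a real service would use a database.
    submissions: Counter = field(default_factory=Counter)
    verdicts: dict = field(default_factory=dict)

    def submit(self, text: str) -> str:
        """A user forwards a suspicious message to the bot from inside the app."""
        key = normalize(text)
        self.submissions[key] += 1  # every submission strengthens the dataset
        return self.verdicts.get(key, "unverified: queued for fact-checkers")

    def verify(self, text: str, verdict: str) -> None:
        """A partner fact-checking group records its verdict for a message."""
        self.verdicts[normalize(text)] = verdict

    def top_unverified(self, n: int = 3) -> list:
        """Most-forwarded messages that still have no verdict."""
        pending = [(key, count) for key, count in self.submissions.most_common()
                   if key not in self.verdicts]
        return pending[:n]


bot = FactCheckBot()
bot.submit("Drinking  bleach cures flu")
bot.submit("drinking bleach cures flu")
bot.verify("Drinking bleach cures flu", "false")
print(bot.submit("Drinking bleach cures FLU"))  # prints "false"
```

The design point is the one the quote makes: the bot never touches the conversation itself, it only answers when asked, and the counts it accumulates are what reveal which topics are being exploited.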

And on that note, Twitter today has announced “Birdwatch” as a “community-based approach to misinformation”.

Birdwatch allows people to identify information in Tweets they believe is misleading and write notes that provide informative context. We believe this approach has the potential to respond quickly when misleading information spreads, adding context that people trust and find valuable. Eventually we aim to make notes visible directly on Tweets for the global Twitter audience, when there is consensus from a broad and diverse set of contributors.

A consensus from a broad and diverse set of contributors is often called an election. Making notes visible is often called accountability.

Imagine that: Twitter has invented accountable elections in 2021! Come on everyone, let’s try out this new concept of having an election.

I mean, I might tell them: let’s look at lessons from how the FCC was created by Roosevelt in 1934 to fight Nazi communications in modern communications, or how the DOJ was created by Grant in 1870 to fight KKK communications.

Clearly both need a fresh look in terms of current technology as well as how well they’ve resisted infiltration by insider threats. Call them early-bird-watch if you will.

Anyway, none of this should be news to anyone who has seen anything like this 2016 program on the political science of authoritarianism:

Trump and his fan followers are feeling exactly the same as Hitler and his fan followers. It exactly the same, just another century, another country, another context, but same phenomenon. And the people ask themeselves: “how could all that (the rise of fascism) happen in Germany and Europe at the beginning of the 20th century? How could people give them support”… Well, Exactly as it is happening in US now.