Trump lashed out at Canada, sounding like a syphilitic lunatic, ranting about healthy trade deals with China.

…China, who will “eat them up” within the first year!

Two days earlier at Davos, Trump explained: “Sometimes you need a dictator!”

His warning about China is especially notable, because Trump just ordered the Pentagon to lower preparedness for China.

The Defense Department said in an influential strategy document published Friday that the U.S. military’s top focus is no longer on China but instead the homeland and Western Hemisphere. […] “…concrete interests first. Previous administrations squandered our military advantages and the lives, goodwill, and resources of our people in grandiose nation-building projects and self-congratulatory pledges to uphold cloud-castle abstractions like the rules-based international order,” the report says.

Trump warning Canada that China will “eat them up”, while simultaneously downgrading China as a military priority, creates an incoherent threat narrative unless the actual target is invasion of Canada itself.

The document is explicitly a rejection of rules and order, replacing them with permanent improvisation.

The German dictatorship did not mean ‘law and order.’ The Third Reich lived in a state of permanent improvisation: the ‘movement’ once in power was robbed of its targets and instead extended its dynamic into the chaos of rival governmental authorities.

Note that it’s not America First, because it’s “concrete interests first”, which is another layer of disinformation. This elevates racism, greed, corruption, and graft as “concrete” for coin-operated use of Trump’s military force against rivals regardless of any laws. The Pentagon is being told to prepare to go to war with America… first.

All the breathless Monroe Doctrine references also fit into the disinformation. The Doctrine is a “cloud-castle” abstraction, a discredited imperial sphere-of-influence theory from 1823, and therefore can’t be used as his precedent.

Canada’s UN Ambassador Bob Rae called Trump policy what it really is, a “protection racket.”

In other words, Trump is threatened not by China, but by Canada escaping his protection racket through China. He’s angry at Canada because China proves he is weak, while telling everyone China doesn’t matter. It’s a “grab’em by the pussy” doctrine of punching down to feel tall, where might makes wrong and tries to get away with it.

Monroe wouldn’t allow it.

Trump fraudulently appropriates Monroe language to justify invasion of neighbors while explicitly doing the opposite of Monroe, by avoiding confrontation with the outside power. He’s laying the groundwork for invasion of Canada on the pretense of avoiding war with China, while claiming China is the reason for invading Canada.

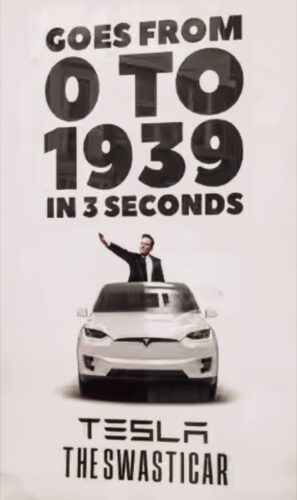

That’s not Monroe, because that’s… Hitler’s method of disinformation and improvisation.

Canada now logically calls China “more predictable” than the US, a better leader and partner. That is because Trump’s anti-Monroe “concrete interests” formulation is a doctrine of no doctrines. It means decisions are made case by case on a dictator’s whim, with no predictable rules, by Trump’s design. Everything is always defined only by one man, who takes everything only for himself and his closest sycophants.

Carl Schmitt’s “decisionism” (being promoted now by Peter Thiel) provides the Nazi theoretical framework that Trump is actually using: the sovereign is whoever decides the exception, and all law flows from that decision rather than constraining it. The basis of Nazism was racial ideology, like Trump’s MAGA as described by Fuentes, and Thiel’s decisionism is the operational method.

Trump’s territorial expansion therefore predictably follows Hitler’s pattern exactly: manufacture threat narratives about one actor (for Hitler, Bolshevism and encirclement) while the actual targets are neighbors (for Hitler, Austria, Czechoslovakia, Poland). The threat is faked to prevent the target from defending itself. “Protecting ethnic Germans” became the universal pretext for invasion and resource extraction that could be applied anywhere regardless of facts.

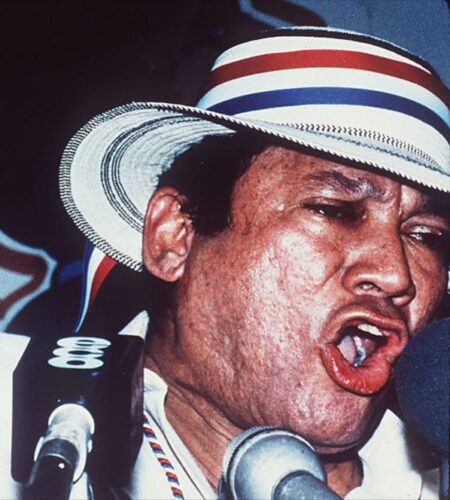

Rapid deflation of American power is as obvious as the fall of Nazism, since nobody likes Hitler doctrine but Nazis. Trump obsesses about invading countries to corrupt and pillage them, such as Canada along with Greenland, Panama and Venezuela, and offers absolutely nothing in return if you disagree. His new Pentagon document will soon classify those who disobey him as his primary threat.

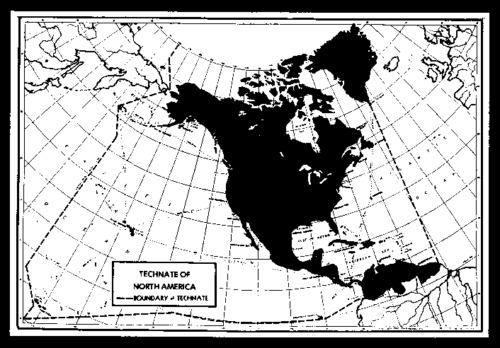

The Pentagon is already operationalizing the improvisation. The Joint Chiefs just convened an unprecedented meeting of all 34 Western Hemisphere military leaders for February 11. Meanwhile, U.S. forces continue war crimes, murdering more than 120 civilians in 35 attacks since September, framed as a “drug war”. The false pretense is just for expansion of a military dictatorship over the entire hemisphere.

This “technocracy technate” map from the 1930s illustrates the organizational ambition behind the Pentagon meeting—hemispheric consolidation under authoritarian control. Elon Musk’s Canadian grandfather promoted this vision until he was arrested as an enemy of the state for basically being a Nazi.

This inevitably will bring global alignment with the EU and China for protection from the lunatic dictator Trump. Already, people around the world describe America as operating on the level of Iran or North Korea. Reporters Without Borders just released a report warning Trump’s “increasingly authoritarian tactics could eventually descend to” the levels of “ruthless dictators” like Daniel Ortega and Vladimir Putin.

Eventually?

As I wrote two weeks ago in “Trump is America’s Pineapple Face”, he’s already there, and RSF is catching up.

Trump literally said at Davos on January 21:

Usually they say, ‘He’s a horrible dictator-type person,’ I’m a dictator. But sometimes you need a dictator!

This came two days before his rant against Canada and the Pentagon shifting its priority to focus on Canada. He’s not being accused of something; he has announced it.

Historian protip: late-stage syphilis is associated with erratic behavior of dictators like Hitler, Mussolini and Latin American “strongmen”.